Security researchers in China have invented a clever way of activating voice recognition systems without speaking a word. Using frequencies too high for humans to hear but which electronic microphones still register, they were able to issue commands to every major “intelligent assistant” that were silent to every listener but the target device.

The team from Zhejiang University calls their technique DolphinAttack (PDF), after the animals’ high-pitched communications. In order to understand how it works, let’s just have a quick physics lesson.

Here comes the science!

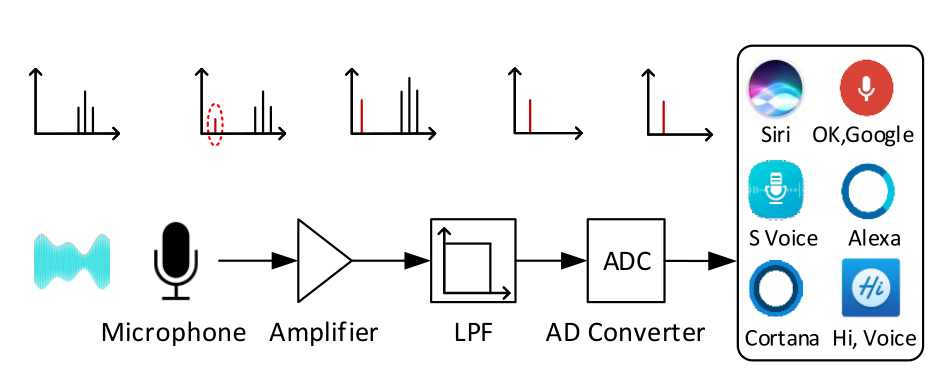

Microphones like those in most electronics use a tiny, thin membrane that vibrates in response to air pressure changes caused by sound waves. Since people generally can’t hear anything above 20 kilohertz, the microphone software generally discards any signal above that frequency using what’s called a low-pass filter, although technically the signal is still being detected.

A perfect microphone would vibrate at a known frequency at, and only at, certain input frequencies. But in the real world, the membrane is subject to harmonics — for example, a tone at 400 Hz will also elicit a response at 200 Hz and 800 Hz (I’m fudging the math here but this is the general idea. There are some great gifs illustrating this at Wikipedia). This usually isn’t an issue, however, since harmonics are much weaker than the original vibration.

But say you wanted a microphone to register a tone at 100 Hz but for some reason didn’t want to emit that tone. If you generated a tone at 800 Hz that was powerful enough, it would create that 100 Hz tone with its harmonics, only on the microphone. Everyone else would just hear the original 800 Hz tone and would have no idea that the device had registered anything else.

Smooth modulator

That’s basically what the researchers did, although in a much more exact fashion, of course. They determined that yes, in fact, most microphones used in voice-activated devices, from phones to smart watches to home hubs, are subject to this harmonic effect.

Diagram showing how the ultrasound (the black ‘waves’) is generated, creating the harmonic in red, and is then subtracted by the low-pass filter.

First they tested it by creating a target tone with a much higher ultrasonic frequency. That worked, so they tried recreating snippets of voice with layered tones between 500 and 1,000 Hz, a more complicated process but not a fundamentally different one. And there’s not a lot of specialized hardware needed: off-the-shelf stuff from Fry’s or its Chinese equivalent.
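To make the idea concrete, here is a toy sketch in Python of an audible tone riding on an ultrasonic carrier and reappearing only inside the microphone. It is not the researchers' code. Every number in it is an illustrative assumption, and so is the microphone model, which treats the "harmonic effect" described above as a small squared term added to an otherwise faithful response. Rather than implementing a low-pass filter, it simply measures how much 400 Hz energy is present before and after the microphone.

```python
import math

# All values below are illustrative assumptions, not figures from the paper.
SAMPLE_RATE = 192_000  # Hz; high enough to represent a 30 kHz carrier
NUM_SAMPLES = 9_600    # 50 ms of signal at SAMPLE_RATE
CARRIER_HZ = 30_000    # ultrasonic carrier, above the ~20 kHz hearing limit
BASEBAND_HZ = 400      # audible tone standing in for a snippet of speech
MOD_DEPTH = 0.5        # how strongly the tone modulates the carrier
QUAD_GAIN = 0.1        # assumed strength of the microphone's squared-term distortion


def transmitted_signal():
    """Amplitude-modulate the audible tone onto the ultrasonic carrier."""
    samples = []
    for i in range(NUM_SAMPLES):
        t = i / SAMPLE_RATE
        baseband = math.cos(2 * math.pi * BASEBAND_HZ * t)
        carrier = math.cos(2 * math.pi * CARRIER_HZ * t)
        samples.append((1 + MOD_DEPTH * baseband) * carrier)
    return samples


def microphone(signal):
    """Toy microphone (an assumption): faithful response plus a small squared term."""
    return [s + QUAD_GAIN * s * s for s in signal]


def tone_amplitude(signal, freq_hz):
    """Estimate the amplitude of one frequency by correlating with cos and sin."""
    re = im = 0.0
    for i, s in enumerate(signal):
        angle = 2 * math.pi * freq_hz * i / SAMPLE_RATE
        re += s * math.cos(angle)
        im += s * math.sin(angle)
    return 2 * math.hypot(re, im) / len(signal)


if __name__ == "__main__":
    in_air = transmitted_signal()
    in_mic = microphone(in_air)
    print("400 Hz in the air:       ", round(tone_amplitude(in_air, BASEBAND_HZ), 6))
    print("400 Hz in the microphone:", round(tone_amplitude(in_mic, BASEBAND_HZ), 6))
    print("30 kHz in the air:       ", round(tone_amplitude(in_air, CARRIER_HZ), 6))
```

In this model the signal in the air contains energy only around 30 kHz, so a human listener hears nothing. Squaring it inside the microphone produces a 400 Hz component with amplitude of about QUAD_GAIN × MOD_DEPTH, which is what survives once the low-pass filter strips the ultrasound away.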

The demodulated speech registered just fine, and worked on every major voice recognition platform:

> DolphinAttack voice commands, though totally inaudible and therefore imperceptible to humans, can be received by the audio hardware of devices, and correctly understood by speech recognition systems. We validated DolphinAttack on major speech recognition systems, including Siri, Google Now, Samsung S Voice, Huawei HiVoice, Cortana, and Alexa.

They were able to execute a number of commands, from wake phrases (“OK Google”) to multi-word requests (“unlock the back door”). Different phones and phrases had different success rates, naturally, or worked better at different distances. None worked farther than 5 feet away, though.

It’s a scary thought — that invisible commands could be humming through the air and causing your device to execute them (of course, one could say the same of Wi-Fi). But the danger is limited for several reasons.

First, you can defeat DolphinAttack simply by turning off wake phrases. That way you’d have to have already opened the voice recognition interface for the attack to work.

Second, even if you keep the wake phrase on, many devices restrict functions like accessing contacts, apps and websites until you have unlocked them. An attacker could ask about the weather or find nearby Thai places, but they couldn’t send you to a malicious website.

Third, and perhaps most obviously, in its current state the attack has to take place within a couple of feet and against a phone in the open. Even if an attacker could get close enough to issue a command, chances are you’d notice right away if your phone woke up and said, “OK, wiring money to Moscow.”

That said, there are still places where this could be effective. A compromised IoT device with a speaker that can generate ultrasound might be able to speak to a nearby Echo and tell it to unlock a door or turn off an alarm.

This threat may not be particularly realistic, but it illustrates the many avenues by which attackers can attempt to compromise our devices. Getting these attacks out in the open now and devising countermeasures are essential parts of the vetting process for any technology that aspires to being in everyday use.