Perhaps for the first time ever, our noggins have stolen the spotlight from our hips. The media, popular culture, science and business are paying more attention to the brain than ever. Billionaires want to plug into it.

Scientists view neurodegenerative disease as the next frontier after cancer and diabetes, hence increased efforts in understanding the physiology of the brain. Engineers are building computer programs that leverage “deep learning,” which mimics our limited understanding of the human brain. Students are flocking to universities to learn how to build artificial intelligence for everything from search to finance to robotics to self-driving cars.

Public markets are putting premiums on companies doing machine learning of any kind. Investors are throwing bricks of cash at startups building or leveraging artificial intelligence in any way.

Silicon Valley’s obsession with the brain has just begun. Futurists and engineers are anticipating artificial intelligence surpassing human intelligence. Facebook is talking about connecting to users through their thoughts. Serial entrepreneurs Bryan Johnson and Elon Musk have set out to augment human intelligence with AI by pouring tens of millions of dollars of their own money into Kernel and Neuralink, respectively.

Bryan and Elon have surrounded themselves with top scientists, including Ted Berger and Philip Sabes, to start by cracking the neural code. Traditionally, scientists seeking to control machines through our thoughts have done so by interfacing with the brain directly, which means surgically implanting probes into the brain.

Scientists Miguel Nicolelis and John Donoghue were among the first to control machines directly with thoughts through implanted probes, also known as electrode arrays. Starting decades ago, they implanted arrays of approximately 100 electrodes into living monkeys; the arrays translated individual neuron activity into the movements of a nearby robotic arm.

The monkeys learned to control their thoughts to achieve desired arm movement; namely, to grab treats. These arrays were later implanted in quadriplegics to enable them to control cursors on screens and robotic arms. The original motivation and funding for this research were aimed at treating neurological diseases such as epilepsy, Parkinson’s and Alzheimer’s.

Curing brain disease requires an intimate understanding of the brain at the brain-cell level. Controlling machines was a byproduct of the technology being built, and of having access to patients with severe epilepsy whose skulls had already been opened.

Science-fiction writers imagine a future of faster-than-light travel and human batteries, but assume that you need to go through the skull to get to the brain. Today, music is a powerful tool for conveying emotion and expression, and for catalyzing our imagination. Powerful machine learning tools can predict responses to auditory stimulation, and create rich, Total-Recall-like experiences through our ears.

If the goal is to upgrade the screen and keyboard (human<->machine), and become more telepathic (human<->human), do we really need to know what’s going on with our neurons? Let’s use computer engineering as an analogy: Physicists are still discovering elementary particles, but we know how to manipulate charge well enough to add numbers, trigger pixels on a screen, capture images from a camera and capture inputs from a keyboard or touchscreen. Do we really need to understand quarks? I’m sure there are hundreds of analogies where we have pretty good control over things without knowing all the underlying physics, which raises the question: Do we really have to crack the neural code to better interface with our brains?

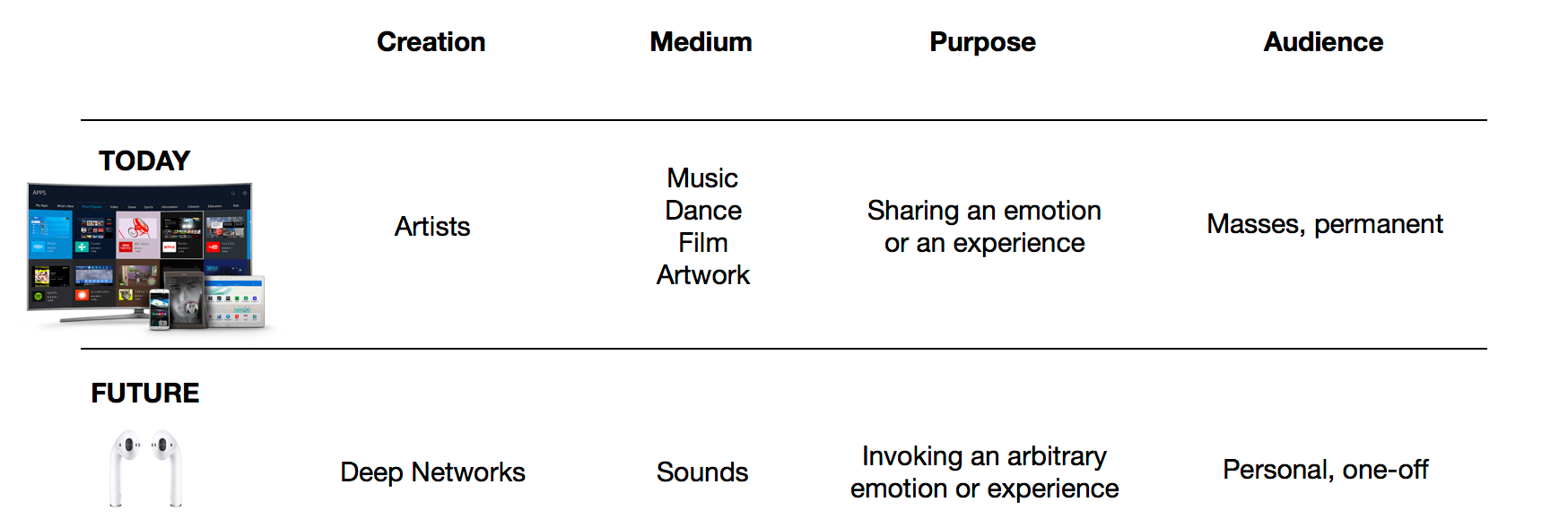

In fact, I would argue that humans have been pseudo-telepathic since the beginning of civilization, through the art of expression. Artists communicate emotions and experiences by stimulating our senses, which already have a rich, wide-band connection to our brains. Artists stimulate our brains with photographs, paintings, motion pictures, song, dance and stories. The greatest artists have been the most effective at evoking certain emotions: love, fear, anger and lust, to name a few. Great authors empower readers to live through experiences by turning the page, creating richer experiences than what’s delivered through IMAX 3D.

Creative media is a powerful tool of expression to evoke emotion and share experiences. It is rich, expensive to produce and distribute, and disseminated through screens and speakers. Although virtual reality promises an even more interactive and immersive experience, I envision a future where AI can be trained to evoke powerful, personal experiences through sound. Images courtesy of Apple and Samsung.

Unfortunately, those great artists are few, and heavy investment goes into a production, which needs to be amortized over many consumers (1->n). Can content be personalized for EVERY viewer as opposed to a large audience (1->1)? For example, can scenes, sounds and smells be customized for individuals? To go a step further: Can individuals, in real time, stimulate each other to communicate emotions, ideas and experiences (1<->1)?

We live our lives constantly absorbing from our senses. We experience many fundamental emotions such as desire, fear, love, anger and laughter early in our lifetimes. Furthermore, our lives are becoming more instrumented by cheap sensors and disseminated through social media. Can a neural net trained on our experiences, and on our emotional responses to them, create audio stimulation that would evoke a desired sensation? This “sounds” far more practical than encoding that experience in neural code and physically imparting it to our neurons.

Powerful emotions have been inspired by one of the oldest modes of expression: music. Humanity has invented countless instruments to produce many sounds, and, most recently, digitally synthesized sounds that would be difficult or impractical to generate with a physical instrument. We consume music individually through our headphones, and experience energy, calm, melancholy and many other emotions. It is socially acceptable to wear headphones; in fact, Apple and Beats by Dre made it fashionable.

If I want to communicate a desired emotion to a counterpart, I can invoke a deep net to generate sounds that, according to a deep net sitting on my partner’s device, evoke the desired emotion. There are a handful of startups using AI to create music with desired features that fit in a certain genre. How about taking it a step further to create a desired emotion on behalf of the listener?

The same concept can be applied to human-to-machine interfaces, and vice versa. Touch screens have already almost obviated keyboards for consuming content; voice and NLP will eventually replace keyboards for generating content.

Deep nets will infer what we “mean” from what we “say.” We will “read” through a combination of hearing through a headset and “seeing” on a display. Simple experiences can be communicated through sound, and richer experiences through a combination of sight and sound that evokes our other senses, just as the sight of a stadium evokes the roar of a crowd and the taste of beer and hot dogs.

Behold our future link to each other and our machines: the humble microphone-equipped earbud. Initially, it will tether to our phones for processing, connectivity and charge. Eventually, advanced compute and energy harvesting will obviate the need for phones, and those earpieces will be our bridge to every human being on the planet, and our access to the corpus of human knowledge and expression.

The timing of this prediction may be ironic given Jawbone’s expected shutdown. I believe that there is a magical company to be built around what Jawbone should have been: an AI-powered telepathic interface between people, the present, past and what’s expected to be the future.