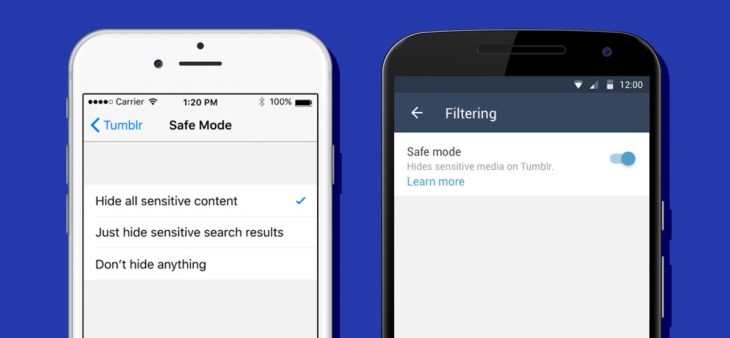

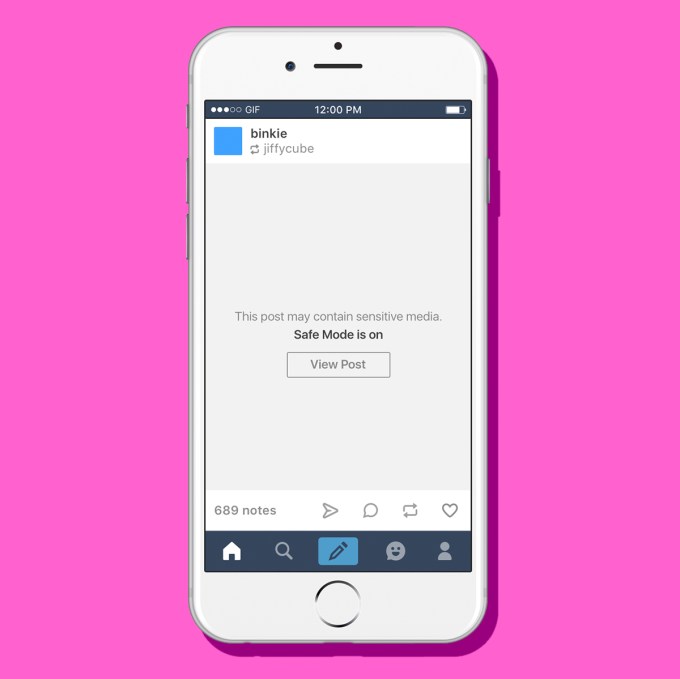

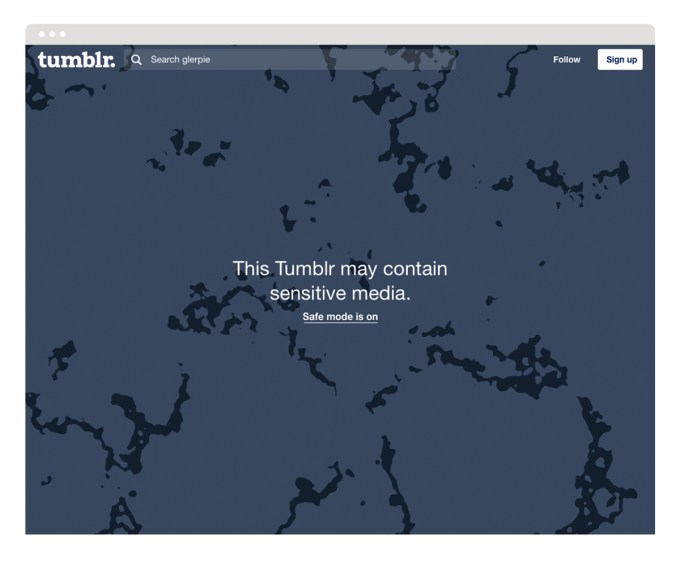

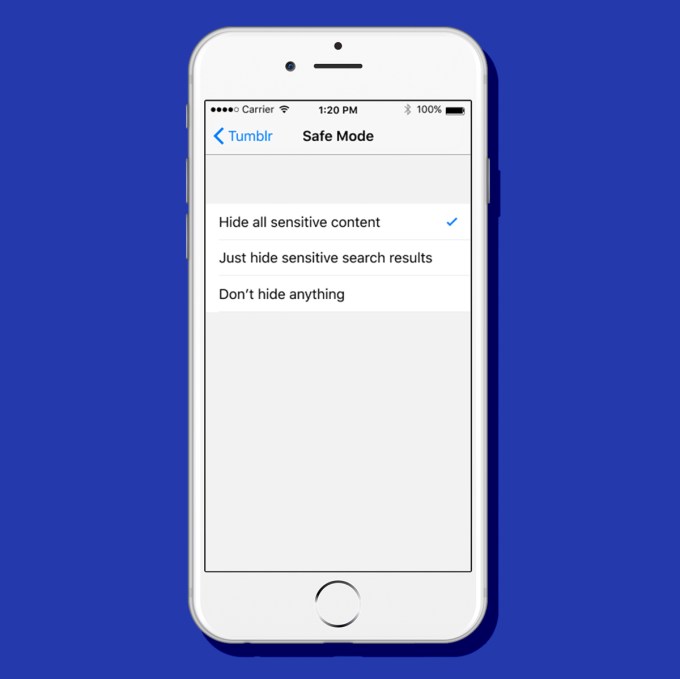

Tumblr this week introduced a “safe mode” for its service, which combines the company’s previously available “safe search” functionality with a new filter that would hide “sensitive” content from the site’s main Dashboard, using an overlay. However, Tumblr quickly came under fire for hiding “sensitive” content that was anything but – instead, noted many users, Tumblr was seemingly filtering out innocent posts, including LGBTQ+ content, for no apparent reason.

This wouldn’t be the first time that LGBTQ+ content got caught up in an algorithmic filter of some kind. YouTube this week also announced it had updated its policies after LGBTQ+ videos had been inadvertently blocked for people viewing YouTube in Restricted Mode. (The company had addressed the problem back in April.)

In YouTube’s case, the company claimed an engineering issue with its filter was to blame.

What was going on at Tumblr, however, was less immediately clear.

The company had earlier said that content was being flagged as “sensitive” through a combination of automation and human moderation. It described sensitive content broadly; even artistic nudity, or nudity in an educational or photojournalistic context, may be considered sensitive, it had said.

Given that it was now hiding innocent photos and illustrations – including those that were LGBTQ+-themed but not explicit – questions were raised about whether Tumblr had actually begun to censor its typically more inclusive community.

Plus, people wanted to know, was this Verizon’s fault? Verizon, which also owns TechCrunch parent AOL, had just picked up Tumblr through the Yahoo acquisition. (And it had already been making some changes.)

Similar to the issue with YouTube, Tumblr’s problem of censorship – or filtering gone wrong – disproportionately affected younger users. With YouTube, for example, Restricted Mode is often enabled on school computers or in libraries. And these are sometimes the only places where LGBTQ+ youth can seek out their communities online.

Tumblr’s filtering system, meanwhile, was designed to hide explicit blogs from search results on the web, and was going to be automatically enabled for any logged-out users as well as those under 18, starting on July 5th, 2017.

On Friday, Tumblr addressed the issue of innocuous posts, including LGBTQ+ content, being flagged and hidden. The company explained what had happened, and apologized to its community.

“Please know that was never our intention,” a blog post stated, referring to how LGBTQ+ content had been blocked. “We’re deeply sorry. Tumblr will always be a place where everyone is welcome and protected, so we want to explain what happened.”

The majority of the problem had to do with the fact that many of the blog creators themselves had marked their own blogs as “Explicit.” This is an optional setting bloggers on Tumblr can use to warn the community that their site may host adult material. It also means those blogs will be filtered out from Safe Search results. (This is how the system has worked for years, so that’s not new.)

However, when Tumblr created the new “Safe Mode,” it designed it with the assumption that because these blogs were marked “Explicit,” all their content would be “sensitive,” as well.

“Because we consider Explicit blogs to be predominantly sensitive content, we were automatically marking all their posts as sensitive. That was too broad,” Tumblr explains.

The end result is that entire Tumblr blogs were being marked “sensitive,” even though many of their posts were not.

Tumblr says it has now corrected the problem by classifying each post individually.

“As they should be,” Tumblr added.

A couple of other issues were addressed, too.

Before, when an explicit Tumblr reblogged a “safe” post, the reblog was marked “sensitive” because it now appeared on an “Explicit” blog. This was even happening to text posts. Tumblr changed the logic here so that if the original poster’s content was safe, all reblogs would also be marked safe.
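To make the change concrete, here is a minimal sketch of the two behaviors as Tumblr described them. It is illustrative only and not Tumblr's code: the `Post` type, its field names, and both functions are hypothetical, and the handling of a reblog whose original post is sensitive is an assumption, since Tumblr's post only covered the safe case.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Post:
    # All names here are hypothetical, chosen for illustration.
    post_sensitive: bool               # per-post verdict (machine or human)
    blog_explicit: bool                # blogger marked the blog "Explicit"
    original: Optional["Post"] = None  # the post this one reblogs, if any


def is_sensitive_old(post: Post) -> bool:
    """Old behavior: everything on an Explicit blog is treated as sensitive."""
    return post.blog_explicit or post.post_sensitive


def is_sensitive_new(post: Post) -> bool:
    """New behavior: classify per post; a reblog follows its original post.

    Assumption: a reblog of a sensitive original stays sensitive.
    """
    root = post
    while root.original is not None:
        root = root.original
    return root.post_sensitive


# A safe text post, reblogged onto a blog marked "Explicit".
safe_original = Post(post_sensitive=False, blog_explicit=False)
reblog = Post(post_sensitive=False, blog_explicit=True, original=safe_original)

old_verdict = is_sensitive_old(reblog)  # hidden under the old logic
new_verdict = is_sensitive_new(reblog)  # shown under the new logic
```

Under the old rule the blog-level flag overrides everything, which is why even text posts were hidden. Under the new rule the blog-level flag no longer enters the per-post decision at all.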

Additionally, Tumblr says it’s still working to correct a problem with photosets.

The machine algorithms that initially classify photos posted to Tumblr as safe or sensitive have a harder time with photosets (multi-photo posts). To be cautious, Tumblr was marking photosets as sensitive, forcing users to request a human review after publication. The company says it’s now working to have photosets analyzed as a group, not as individual images, which should reduce the mistakes the machine analysis makes.

It didn’t give a timeline for when this change would be finalized, nor did it detail how this would affect its current policies on photosets in Safe Mode.

Tumblr concluded by saying it was committed to making Safe Mode – which is designed to protect users from content they don’t want to see, specifically nudity – work properly. Plus, it noted, its algorithms will improve as users respond to misclassified posts over time.