How should Facebook decide what’s allowed on its social network, and how to balance safety and truth with diverse opinions and cultural norms? Facebook wants your feedback on the toughest issues it’s grappling with, so today it published a list of seven “hard questions” and an email address — hardquestions@fb.com — where you can send feedback and suggestions for more questions it should address.

Facebook’s plan is to publish blog posts examining its logic around each of these questions, starting later today with one about responding to the spread of terrorism online, and how Facebook is attacking the problem.

[Update: Here is Facebook’s first entry in its “Hard Questions” series, which looks at how it counters terrorism. We have more analysis on it below.]

“Even when you’re skeptical of our choices, we hope these posts give a better sense of how we approach them — and how seriously we take them,” Facebook’s VP of public policy Elliot Schrage writes. “And we believe that by becoming more open and accountable, we should be able to make fewer mistakes, and correct them faster.”

Here’s the list of hard questions with some context from TechCrunch about each:

- How should platforms approach keeping terrorists from spreading propaganda online?

Facebook has worked in the past to shut down Pages and accounts that blatantly spread terrorist rhetoric. But the tougher decisions come in the grey area at the fringe, where it must draw the line between outspoken discourse and propaganda.

- After a person dies, what should happen to their online identity?

Facebook currently makes people’s accounts into memorial pages that can be moderated by a loved one that they designate as their “legacy contact” before they pass away, but it’s messy to give that control to someone, even a family member, if the deceased didn’t make the choice.

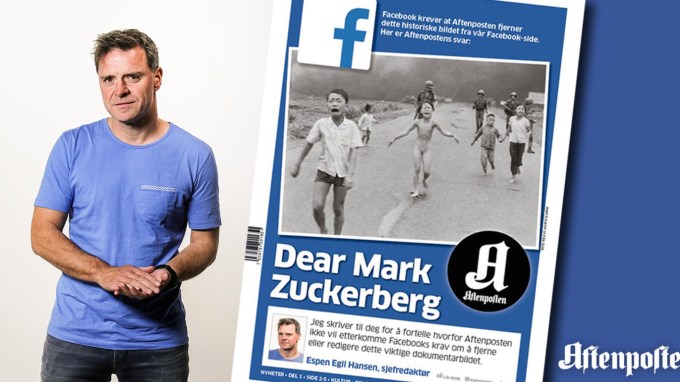

- How aggressively should social media companies monitor and remove controversial posts and images from their platforms? Who gets to decide what’s controversial, especially in a global community with a multitude of cultural norms?

Facebook has to walk a thin line between making its app safe for a wide range of ages as well as advertisers, and avoiding censorship of hotly debated topics. Facebook has recently gotten into hot water over temporarily taking down videos of the aftermath of police violence, and child nudity in a newsworthy historical photo pointing out the horrors of war. Mark Zuckerberg says he wants Facebook to allow people to be able to set the severity of its filter, and use the average regional setting from their community as the default, but that still involves making a lot of tough calls when local norms conflict with global ones.

- Who gets to define what’s false news — and what’s simply controversial political speech?

Facebook has been racked with criticism since the 2016 US presidential election over claims that it didn’t do enough to prevent the spread of fake news, including right-wing conspiracy theories and exaggerations that may have given Donald Trump an advantage. If Facebook becomes the truth police and makes polarizing decisions, it could alienate the conservative side of its user base and further fracture online communities, but if it stands idle, it may grossly interfere with the need for an informed electorate.

- Is social media good for democracy?

On a similar front, Facebook is dealing with how peer-to-peer distribution of “news” omits the professional editors who typically protect readers from inaccuracy and misinformation. That problem is exacerbated when sensationalist or deceitful content is often the most engaging, and that’s what the News Feed highlights. Facebook has changed its algorithm to downrank fake news and works with outside fact checkers, but more subtle filter bubbles threaten to isolate us from opposing perspectives.

- How can we use data for everyone’s benefit, without undermining people’s trust?

Facebook is a data mining machine, for better or worse. This data powers helpful personalization of content, but also enables highly targeted advertising, and gives Facebook massive influence over a wide range of industries as well as our privacy.

- How should young internet users be introduced to new ways to express themselves in a safe environment?

What’s important news or lighthearted entertainment for adults can be shocking or disturbing for kids. Meanwhile, Facebook must balance giving younger users the ability to connect with each other and form support networks with keeping them safe from predators. Facebook has restricted the ability of adults to search for kids and offers many resources for parents, but it does allow minors to post publicly, which could expose them to interactions with strangers.

Countering Terrorism

Facebook’s post about how it deals with terrorism is much less of a conversation starter, and instead mainly lists the ways it’s combating terrorist propaganda with AI, human staff, and partnerships.


- Image matching to prevent repeat uploads of banned terrorism content

- Language understanding via algorithms that let Facebook identify text that supports terrorism and hunt down similar text

- Removing terrorist clusters by looking for accounts connected to or similar to those removed for terrorism

- Detecting and blocking terrorist recidivism by identifying patterns indicating someone is re-signing up after being removed

- Cross-platform collaboration allows Facebook to take action against terrorists on Instagram and WhatsApp too

- Facebook employs thousands of moderators to review flagged content including emergency specialists for handling law enforcement requests, is hiring 3,000 more moderators, and staffs over 150 experts solely focused on countering terrorism

- Facebook partners with other tech companies like Twitter and YouTube to share fingerprints of terrorist content, receives briefings from government agencies around the world, and supports programs for counterspeech and anti-extremism

Unfortunately, the post doesn’t even include the feedback email address, nor does it pose any philosophical questions about where to draw the line when reviewing propaganda.

[Update: After I pointed out that the hardquestions@fb.com address was missing from the counter-terrorism post, Facebook added it and tells me it will be included on all future Hard Questions posts. That’s a good sign that it’s already willing to listen and act on feedback.]

Transparency Doesn’t Alleviate Urgency

The subtext behind the hard questions is that Facebook has to figure out how to exist as “not a traditional media company” as Zuckerberg referred to it. The social network is simultaneously an open technology platform that’s just a skeleton fleshed out by what users volunteer, but also an editorialized publisher that makes value judgements about what’s informative or entertaining, and what’s misleading or distracting.

It’s wise of Facebook to pose these questions publicly rather than letting them fester in the dark. Perhaps the transparency will give people the peace of mind that Facebook is at least thinking hard about these issues. The question is whether this transparency gives Facebook leeway to act cautiously when the problems are urgent yet it’s earning billions in profit per quarter. It’s not enough just to crowdsource feedback and solutions. Facebook must enact them even if they hamper its business.