Enrico Fermi tore a large sheet of paper into small pieces and dropped them. A few seconds later, the pieces were blown a short distance in midair and landed some eight feet away.

Fermi paced off the distance, then consulted a chart he had prepared earlier. Based on the corresponding data, he told the people around him that the shock wave that had blown the papers away came from a blast of roughly 10 kilotons of TNT.

On that cool morning in July 1945, Fermi and his colleagues tested the very first atomic bomb. The blast magnitude was later calculated to be 20 kilotons. That won Fermi the betting pool started by senior physicists in the project.

Once again, Fermi was correct in his guess using such a mundane test for such a complex problem. “The Pope of Physics”, his colleagues called him, for they said he was infallible, like the Pope.

No matter what the problem, he not only solved it but made it look effortless. Yet Fermi possessed no superhuman powers. He studied the fundamentals of physics and mathematics from a very young age and mastered them. Only then could he solve these problems with such ease.

Over the next several decades, the study of complexity progressed and extended into numerous problem domains and industries. Nobel prizes were awarded to the academics leading the field, starting with Herbert Simon for his groundbreaking work on “bounded rationality”.

Simon stated simply that humans are “bounded” by either the limited information they receive or their limited capacity to process vast amounts of it, and therefore end up “satisficing” in their decisions, choosing the best available alternative based on what they later identify as “gut feeling.”

Gut feeling was all Arthur Rock and Tom Perkins had in the early days of venture investing, when capital was scarce, deal flow was small, and historical data was non-existent. This scarcity led to meticulous effort as they discovered and funded the leading companies of the future. Venture firms building on the examples of these legends have maintained the heuristic-driven strategy.

Over the next 50 years, the number of deals to choose from kept increasing exponentially and the decision process became highly prone to biases. Decision biases eventually chipped away at the superior fund-level returns once delivered by the Rocks and the Perkinses.

Perhaps the venture capital industry became too complacent in its decades-old strategies as top firms faced no shortage of capital flowing into their consecutive funds. Still, heuristics remained the de facto strategy, mostly because venture capital is inherently complex.

Samuel Arbesman describes complexity thoroughly in his book Overcomplicated.

In a complex system, he explains:

“The parts themselves need to be connected and interacting together in a tumultuous dance. When this happens, you see certain characteristic behaviors, hallmarks of a complex system: small changes cascade through this network, feedback occurs in the complex system, and there is even a sensitive dependence on the initial state of this system. These properties, among others, take a system from complication to complexity.”

Philip Tetlock and Dan Gardner explain methods of attacking such complex problems in their remarkable book, Superforecasting: The Art and Science of Prediction. Not surprisingly, we find Enrico Fermi at the core of this book.

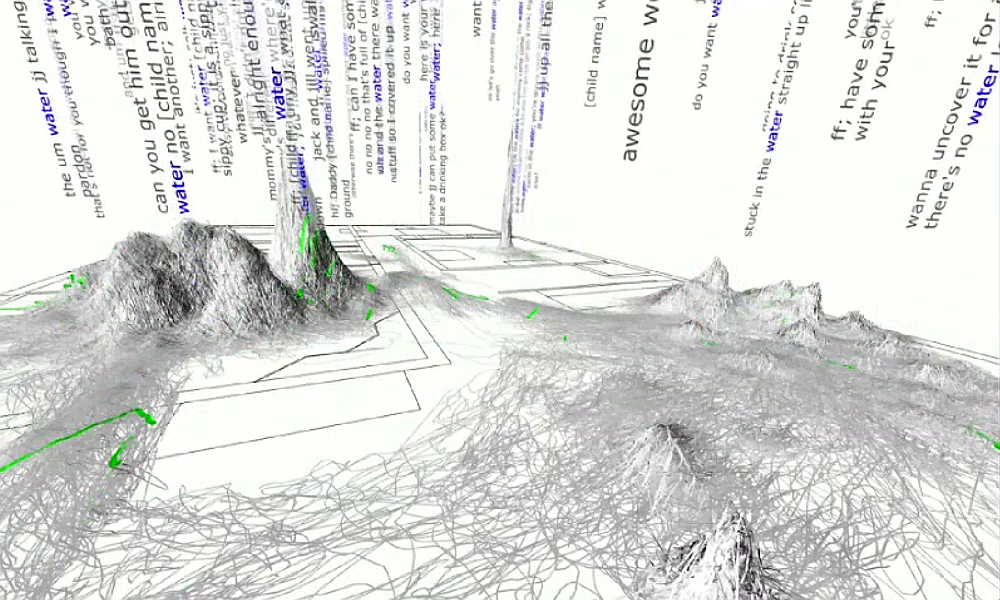

“They Fermi-ize the problems,” they say, describing how a group of ordinary people solves complex problems such as estimating the risk of another lethal outbreak of bird flu in China or the likelihood of Aleppo falling to the Free Syrian Army in 2013.

The way to Fermi-ize a problem is to first calculate the base rate, or build an “outside view”, a term coined by none other than another Nobel laureate, Daniel Kahneman. Then one turns to building an “inside view”, which is all about understanding the specifics of the particular case.

These two views are then combined by various decision models that adjust the original estimate up or down, often with confidence intervals and factors of safety.
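One simple way to picture this adjustment is to convert the base rate to odds and scale it by a factor for each case-specific observation. This is only a sketch under stated assumptions: the function name `adjust_base_rate`, the 10% base rate, and the adjustment factors are all hypothetical, and this is not presented as the method used in Superforecasting.

```python
from typing import Sequence


def adjust_base_rate(base_rate: float, factors: Sequence[float]) -> float:
    """Adjust an outside-view probability using inside-view factors.

    base_rate: outside-view probability, strictly between 0 and 1.
    factors: hypothetical multipliers applied to the odds; a value
        above 1 pushes the estimate up, a value below 1 pushes it down.
    """
    if not 0.0 < base_rate < 1.0:
        raise ValueError("base_rate must be strictly between 0 and 1")
    odds = base_rate / (1.0 - base_rate)   # probability -> odds
    for factor in factors:
        odds *= factor                     # apply each inside-view adjustment
    return odds / (1.0 + odds)             # odds -> probability


# Hypothetical numbers: a 10% outside-view success rate, one favorable
# signal (1.5x on the odds) and one unfavorable signal (0.8x on the odds).
estimate = adjust_base_rate(0.10, [1.5, 0.8])
print(f"Adjusted estimate: {estimate:.1%}")
```

Working in odds rather than raw probabilities keeps the adjusted estimate between 0 and 1 no matter how many factors are applied.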

Today, there is more data about startups than ever. We recognize the key attributes that drive a young company to success or failure. However, systematizing this data set and building a toolbox of models for the venture capital industry still poses academic-level challenges because in venture there are many moving pieces that interact with one another. One proprietary research group discovered that if the founding team has previously started a company and sold it, the company is about 14% less likely to fail.

If the founders have started a company but have not exited, that number is 8%, still better than for someone who has never started a company before. A three-founder team, we learn, is 8% less likely to fail than a single-founder company, and the ratio drops again for teams of four or more cofounders. From team to product, markets to revenue and margins, product to traction, every attribute carries a different level of importance. Converting qualitative attributes into quantitative ones is a challenge, but not an impossible one.

Having said all this, it must be clarified that decision models do not create a “crystal ball” or a “magic black box” where deals come in one end and Uber comes out the other. They are about having a structured, calculated process for selecting favorable opportunities and calculating optimal check sizes rather than chasing “hot deals.” In the end, this strategy does not work against conventional wisdom. On the contrary, it “reveals” new perspectives and enhances intuition.

The second problem in VC is how to calculate check sizes. The solution to that problem also defines the number of companies in an optimal portfolio, a commonly debated topic. Traditional firms have adopted more heuristic measures to tackle this problem. The most popular view, which is also a perfect example of “optimism bias,” states that “one home run will make up for all the losses”. Other heuristics include investing in a high number of seed-stage companies and re-upping on the ones that don’t fail.

At the fund level, investing half of the fund’s capital in the first year and the other half over the following two years is quite common. The right place to start “Fermi-izing” this problem would be to first build the “outside view,” which means understanding the return distributions, and then adjust that view by studying the intricacies of all the attributes that contribute to the success or failure of companies.

Research indicates that for early-stage companies there’s a 48% risk of full failure and a 25% risk of partial failure, with a long tail of returns in the remainder. No curve is perfect in real life, so sweet spots emerge; for instance, the 3x to 7x zone occurs about 5% of the time. If an investor manages to “land” in this sweet spot of returns for all their portfolio companies, even without a single unicorn, they will have beaten 99% of all VC firms out there.

The way to achieve that is to avoid early losses, which runs counter to the traditional VC strategy of making bolder bets early on in the hope that early home runs will help raise the next fund. If the earlier bets don’t pay off, and they often don’t, the only choice left is to swing for the fences.

Would a quant strategy replace “gut feeling” and become the norm for the venture industry in the foreseeable future? In addition, would it help fix the ethical issues in the industry, such as alleged investor and founder fraud, which threaten the very existence of the hottest asset class in the world? Once “gut feeling” is put aside, the focus automatically shifts to numbers and performance. And numbers certainly don’t lie. The closer you look at the numbers, the more you see.

Tetlock concludes his book as follows:

“This sounds like detective work because it is— or to be precise, it is detective work as real investigators do it, not the detectives on TV shows. It’s methodical, slow, and demanding. But it works far better than wandering aimlessly in a forest of information.”

Today, the significance of making informed, data-driven decisions is irrefutable. And since the entire tech industry is built on math and science, why not apply the same to investing, governance and beyond?

Bonus:

Consider an oversimplified scenario in which the outcome is either failure or success. The company fails 60% of the time, and you lose all the money; it succeeds 40% of the time, and you get 10x your money. Would you invest, and if so, how much would you bet relative to the size of your fund to maximize the fund’s wealth?

It’s important to note here that the objective is wealth maximization at the fund level, not at the single-company level. The distinction matters because, while a single home run can be glorified, its fund-level impact can be insignificant due to the size of the bet, another common scenario we see in traditional venture.

Let’s turn to Kelly for the answer. The Kelly criterion, as it’s often known, is a mathematical formula for working out the optimum amount of money to stake on a bet to maximize the growth of your funds.

The formula for Kelly Staking is below:

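$$\text{Kelly \%} = \frac{(\text{odds} - 1) \times p - (1 - p)}{\text{odds} - 1} \times 100$$

Here, odds are decimal odds (the total payout per unit staked, including the stake itself) and $p$ is the percentage estimate of success.

Reading “10x your money” as a net gain of ten times the stake, the decimal odds are 11, so ((11 − 1) × 40% − (1 − 40%)) / (11 − 1) × 100 yields 34%. (Reading 10x as the total payout instead gives decimal odds of 10 and a stake of about 33%.)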
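As a minimal sketch, the staking formula can be written as a small Python function. The name `kelly_fraction` and its parameters are illustrative, and the example assumes the 10x payoff is a net gain on the stake (net odds of 10, that is, decimal odds of 11), which is the reading that reproduces the 34% figure.

```python
def kelly_fraction(net_odds: float, p_win: float) -> float:
    """Fraction of capital to stake under the Kelly criterion.

    net_odds: profit per unit staked on a win (decimal odds minus 1).
    p_win: estimated probability of success, between 0 and 1.
    """
    if net_odds <= 0:
        raise ValueError("net_odds must be positive")
    if not 0.0 <= p_win <= 1.0:
        raise ValueError("p_win must be between 0 and 1")
    edge = net_odds * p_win - (1.0 - p_win)
    # A negative edge means the bet is unfavorable, so stake nothing.
    return max(edge / net_odds, 0.0)


fund = 1_000  # fund size in dollars, as in the example
fraction = kelly_fraction(net_odds=10, p_win=0.40)
print(f"Kelly fraction: {fraction:.0%}, stake: ${fund * fraction:,.2f}")
```

Clamping the result at zero reflects the fact that the formula goes negative for unfavorable bets, in which case the sensible stake is nothing at all.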

First, some basics:

- Your fund must have recycle provisions which allow you to continue investing as you get returns.

- The outcome of this bet is assumed to have no relationship to any other bet you make.

Kelly results:

- Assuming a $1,000 fund, according to the Kelly criterion the optimal bet is about 34% of your capital, or $340.00.

- In logarithmic terms, the fund is expected to grow by about 34.33% on each bet, which corresponds to a compound growth factor of roughly 1.41x per bet.

- If you do not bet exactly $340.00, you should bet less than $340.00.

- The Kelly criterion is maximally aggressive: it seeks to increase capital at the maximum rate possible. Most investors typically take a less aggressive approach, and generally won’t bet more than a certain percentage of their fund on any deal. That cap is itself a heuristic measure.

- A common strategy is to wager half the Kelly amount, which in this case would be $170.00.

- If your estimated probability of 40% is too high, you will bet too much and lose over time. Make sure you are using a conservative (low) estimate.
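The cautions in this list can be checked numerically by computing the expected log growth per bet for the full Kelly stake (34%) and the half Kelly stake (17%). This is a sketch under stated assumptions: the helper `log_growth` is illustrative, net odds of 10 are assumed as in the 34% result, and the “true” success probabilities of 30% and 20% are hypothetical values chosen to show what happens when the 40% estimate is too optimistic.

```python
import math


def log_growth(fraction: float, net_odds: float, p_win: float) -> float:
    """Expected log growth of capital per bet when staking `fraction`."""
    win = math.log(1.0 + net_odds * fraction)   # capital multiplies on a win
    loss = math.log(1.0 - fraction)             # stake is lost on a failure
    return p_win * win + (1.0 - p_win) * loss


NET_ODDS = 10        # assumed net payoff per unit staked
FULL_KELLY = 0.34    # stake implied by the 40% estimate
HALF_KELLY = FULL_KELLY / 2

# 0.40 is the estimate from the example; 0.30 and 0.20 are hypothetical
# "true" probabilities representing an over-optimistic estimate.
for true_p in (0.40, 0.30, 0.20):
    full = log_growth(FULL_KELLY, NET_ODDS, true_p)
    half = log_growth(HALF_KELLY, NET_ODDS, true_p)
    print(f"true p = {true_p:.0%}: full Kelly {full:+.4f}, half Kelly {half:+.4f}")
```

Under these assumptions, the full stake grows fastest when the 40% estimate is right, but its log growth turns negative if the true probability is only 20%, while the half stake still grows. That is one reason a conservative estimate and a fractional stake are commonly paired.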