We have all seen the studies: some American workers spend upwards of six hours a day handling email. It’s not a great use of time, it destroys productivity and it ultimately costs businesses money. A new paper written by a team of Salesforce MetaMind researchers describes techniques that could eventually be used to summarize professional communication. More effective text summarization tools would unlock serious value for Salesforce users, if the research community can finish working out the kinks.

Using machine learning to produce text summaries is not easy, particularly when you’re dealing with very long blocks of text. Methods that simply draw on the language of the source text to produce summaries are not very flexible, and methods that generate completely new language often produce incoherent sentences.

Salesforce attempts to improve the accuracy of the latter approach, generating summaries with fresh language. The team’s modifications to standard practice include the addition of reinforcement learning, along with methods for reducing repetitive language and increasing the amount of context available to the model.

An example summary generated by Salesforce

With reinforcement learning, an optimal behavior is established — in this case maximizing accuracy as measured by a formalized test. The model is then asked to return successive summaries and each time the model receives an accuracy score, it adapts in an effort to receive a higher score the next time.

A simple way to think about this is to imagine a situation where you had the opportunity to take a practice exam in college with unlimited retakes. Each time you take the practice exam you modify your study strategy with the hope that you will maximize your outcome on the real exam. A human probably would only need a few attempts to get it right, but a machine needs considerably more for trial and error.
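To make the practice-exam loop concrete, here is a minimal Python sketch. It is a toy, not Salesforce's model: the "summarizer" only decides which source words to keep, the score is a simple word-overlap F1 standing in for the formal accuracy test, and the update is a basic policy-gradient (REINFORCE) step with a running-average baseline. Every name in it (`overlap_f1`, `train`, `greedy_summary`, the sample sentences) is made up for illustration.

```python
import math
import random

# Hypothetical example data, not taken from the paper.
SOURCE = ("salesforce researchers trained a model that writes "
          "short summaries of long emails").split()
REFERENCE = "model writes short summaries".split()


def overlap_f1(selected, reference):
    """Stand-in accuracy score: F1 between the two sets of words."""
    sel, ref = set(selected), set(reference)
    hits = len(sel & ref)
    if hits == 0:
        return 0.0
    precision = hits / len(sel)
    recall = hits / len(ref)
    return 2 * precision * recall / (precision + recall)


def train(source, reference, steps=3000, lr=0.2, seed=0):
    """Repeatedly sample a summary, score it, and nudge the policy."""
    rng = random.Random(seed)
    logits = [0.0] * len(source)  # one keep/drop preference per source word
    baseline = 0.0                # running average of past scores
    for _ in range(steps):
        probs = [1 / (1 + math.exp(-z)) for z in logits]
        keep = [1 if rng.random() < p else 0 for p in probs]
        attempt = [w for w, k in zip(source, keep) if k]
        reward = overlap_f1(attempt, reference)
        advantage = reward - baseline  # did this attempt beat the average?
        for i in range(len(logits)):
            # REINFORCE update for a Bernoulli (keep/drop) choice.
            logits[i] += lr * advantage * (keep[i] - probs[i])
        baseline += 0.05 * (reward - baseline)
    return logits


def greedy_summary(source, logits):
    """Keep every word the trained policy prefers to keep."""
    return [w for w, z in zip(source, logits) if z > 0]


if __name__ == "__main__":
    learned = train(SOURCE, REFERENCE)
    print(greedy_summary(SOURCE, learned))
```

The thousands of attempts in `train` are the machine's version of unlimited retakes. Each attempt that scores above the running average makes its choices slightly more likely next time, and each one that scores below makes them slightly less likely.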

Reinforcement learning is gradually becoming more common for tasks requiring language generation. Beyond reinforcement, the modified model also uses contextual information from the source document to aid in the generation of relevant new language and to reduce duplicated phrasing.
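The article does not spell out how duplicated phrasing is suppressed, so the sketch below shows just one simple heuristic often used in text generation: refuse any next word that would repeat a three-word sequence already present in the output. This is an assumption for illustration, not a description of Salesforce's mechanism, and the function names are hypothetical.

```python
def repeats_trigram(prefix, next_word):
    """True if appending next_word would repeat a trigram already in prefix."""
    if len(prefix) < 2:
        return False
    new_trigram = (prefix[-2], prefix[-1], next_word)
    seen = {tuple(prefix[i:i + 3]) for i in range(len(prefix) - 2)}
    return new_trigram in seen


def pick_next(prefix, ranked_candidates):
    """Return the best-ranked candidate that does not repeat a trigram."""
    for word in ranked_candidates:
        if not repeats_trigram(prefix, word):
            return word
    return None  # every candidate would cause a repeat
```

For example, with the partial output `"the model writes the model"`, the candidate `"writes"` is rejected because "the model writes" has already appeared, and the next-best candidate is chosen instead.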

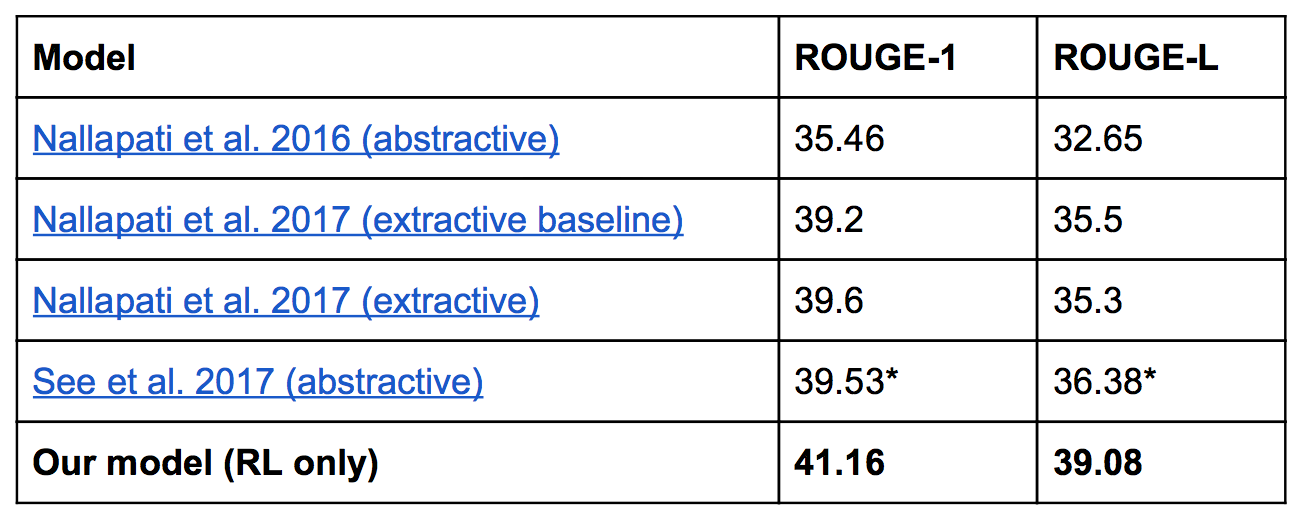

Salesforce tried out its approach on the ROUGE test, short for Recall-Oriented Understudy for Gisting Evaluation. ROUGE is a collection of tests that enable fast analysis of the accuracy of a generated summary.

The tests compare snippets of generated summaries with snippets from accepted summaries. Variations of the test differ mainly in the length of the snippets they try to match. Salesforce’s model outperformed previous attempts by two to three points. This might not seem like much, but in the world of machine learning that’s fairly significant.
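The snippet matching can be sketched in a few lines of Python. The function below is a simplified take on the n-gram flavor of ROUGE, where a snippet is a run of n consecutive words. It assumes plain whitespace tokenization and a single reference summary, and it is not the official ROUGE toolkit, which handles details such as stemming and multiple references.

```python
from collections import Counter


def ngrams(tokens, n):
    """Count every run of n consecutive tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n(candidate, reference, n=1):
    """Return (recall, precision, f1) for n-gram overlap between two strings."""
    cand = ngrams(candidate.lower().split(), n)
    ref = ngrams(reference.lower().split(), n)
    # Counter intersection clips each n-gram to its smaller count.
    overlap = sum((cand & ref).values())
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    if recall + precision == 0:
        return 0.0, 0.0, 0.0
    f1 = 2 * recall * precision / (recall + precision)
    return recall, precision, f1
```

Calling `rouge_n(generated, accepted, n=1)` matches single words, while `n=2` matches pairs of adjacent words, which is the sense in which the variations differ by snippet length.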

As with all research, it’s not quite ready for prime time yet. But the work is indicative of a few things. In case it wasn’t already obvious, Salesforce is serious about applying machine intelligence to the CRM. And one of the company’s early priorities is text summarization to support sales.