Livestreaming app Periscope is rolling out a new experiment with real-time comment moderation, the company announced today. While its parent company Twitter has struggled over the years with spam and abuse — without much success, let’s be honest — Periscope is aiming to go a different route with the introduction of a community-policed system where users can report and moderate comments as soon as they appear on the screen.

Until today, viewers have been able to type in a text entry box in the Periscope app, then see their comments overlaid on the live video stream during the broadcast. As others added their comments, the older ones would float off the screen.

However, in terms of managing harassment and abuse, Periscope only offered a set of tools similar to Twitter’s: users could report abuse via in-app mechanisms or block individual users. You could also restrict comments to people you know, but this is less desirable for those interested in engaging with a wider, more public community on the app.

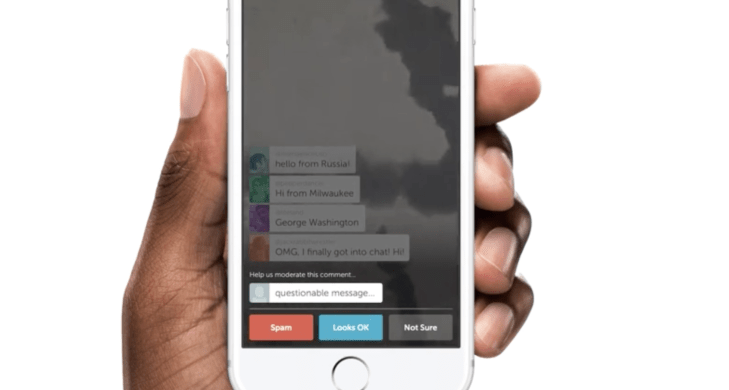

With the new system, Periscope viewers can report comments as spam or abuse. Doing so makes the reported comment disappear from the reporter’s screen immediately and prevents them from seeing other messages from that same person during the broadcast. Once a comment is flagged, Periscope randomly selects a few other viewers to vote on whether the comment is spam or abuse, or whether it looks okay.

If the majority of voters indicate the comment is spam or abuse, the commenter is notified that their ability to chat is being temporarily disabled. If another of their comments is flagged during the same broadcast, they lose the ability to chat for the rest of the livestream.
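To make the flow concrete, here is a minimal Python sketch of the process as described above: a report hides the commenter from the reporter, a small random group votes, and a majority decides the penalty. This is an illustration only, not Periscope’s actual implementation. Every name in it (`BroadcastModeration`, `Penalty`, `cast_vote`) is hypothetical, the jury size of three is an assumption (the company only says “a few” viewers), and the sketch assumes a second penalty also requires a majority vote.

```python
import random
from collections import defaultdict
from enum import Enum


class Penalty(Enum):
    NONE = "none"
    TEMPORARY_MUTE = "temporary_mute"
    MUTED_FOR_BROADCAST = "muted_for_broadcast"


class BroadcastModeration:
    """Toy model of one broadcast: flag -> random jury -> majority vote -> penalty."""

    def __init__(self, viewers, jury_size=3, rng=None):
        self.viewers = set(viewers)
        self.jury_size = jury_size  # assumed value; the article only says "a few"
        self.rng = rng or random.Random()
        self.hidden = defaultdict(set)   # reporter -> commenters hidden from them
        self.strikes = defaultdict(int)  # commenter -> confirmed flags so far

    def pick_jury(self, reporter, commenter):
        # Neither the reporter nor the commenter votes on the report.
        eligible = sorted(self.viewers - {reporter, commenter})
        return self.rng.sample(eligible, min(self.jury_size, len(eligible)))

    def report(self, reporter, commenter, cast_vote):
        # The reporter stops seeing this commenter right away,
        # whatever the jury later decides.
        self.hidden[reporter].add(commenter)

        jury = self.pick_jury(reporter, commenter)
        abusive_votes = sum(1 for juror in jury if cast_vote(juror))

        # Without a strict majority, the comment's poster is unaffected.
        if abusive_votes * 2 <= len(jury):
            return Penalty.NONE

        self.strikes[commenter] += 1
        if self.strikes[commenter] == 1:
            return Penalty.TEMPORARY_MUTE
        return Penalty.MUTED_FOR_BROADCAST


# Example: every juror agrees the first two reports against "ben" are abuse.
room = BroadcastModeration(["ana", "ben", "cy", "dee", "eli"])
first = room.report("ana", "ben", lambda juror: True)
second = room.report("cy", "ben", lambda juror: True)
```

In this sketch, `first` is the temporary mute and `second` is the mute for the rest of the broadcast. A report the jury rejects returns `Penalty.NONE`, though the reporter still no longer sees that commenter.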

By asking a random group of online users to confirm if the comment is spam or abusive, Periscope could potentially cut down on community-based attempts at censoring unwelcome viewpoints. That is, if a broadcast were on a divisive topic and community voting were not involved, someone could target users with differing ideas by flagging their comments. Instead, if the online community doesn’t agree that a comment is spam or abuse, the comment’s poster should remain unaffected.

Whether or not comments are moderated is up to the broadcaster, and viewers can also choose to opt out of voting via their Settings, the company notes.

What’s interesting about this launch is that it introduces a fairly simple system for managing bad actors on the service — something that Twitter itself could learn a thing or two from, in fact. Twitter is also a real-time platform, and has often been the first source for a number of breaking news stories over the years.

But when events are shared instantaneously, that leaves little time for the company itself to react to reports of abuse or obscene or graphic content. Automated tools like this new voting system in Periscope could help.

The launch also comes at a time when the app has been under fire for serving up livestreams of extremely disturbing content, including one woman’s stream of her suicide by train, the premeditated assault of a man by two teens in France and the livestream of an Ohio teen’s rape. Meanwhile, a video posted on Twitter showed a gang rape victim in Brazil lying naked and unconscious, something that has now led to protests in the country, as citizens marched on the Supreme Court.

While graphic incidents like these are not common, they do pose a much greater challenge for platforms like Periscope, where users don’t have to sign up using real names. Instead, Periscope users can choose to sign up with a phone number or a Twitter account, the latter of which is already fairly anonymous, as it too requires only an email or phone number. By obfuscating a user’s true identity, these public platforms invite bad behavior.

That being said, we understand that Twitter is not considering implementing this same system on its site, as tweets are not the same as Periscope comments — that is, they’re not real-time and ephemeral. Twitter doesn’t believe that tweets require immediate and actionable moderation. We’re not so sure about that.

The new comment moderation system is rolling out now, via an app update.