Around ten years ago, Jules Urbach was sitting at the table at his mom’s house, coding away, when there was a knock at the door. His mom answered, but the visitor was for him. Directors J.J. Abrams and David Fincher had some questions about a rendering technology Urbach was working on, which had the potential to make movie scenes look much more realistic with shorter render times.

Abrams and Fincher were being prescient. What Urbach was building would go on to be used in films like The Avengers and commercials for Transformers. It also made him enough money to move out of his mom’s place.

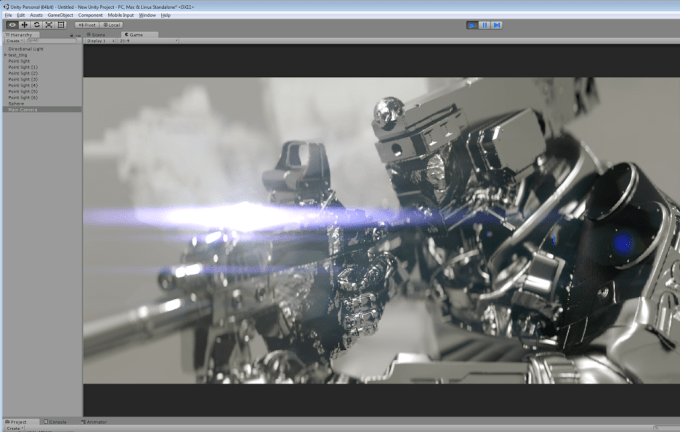

Urbach started a company called OTOY, short for Online Toys, in 2009. OTOY specializes in rendering complex 3D environments on cloud-based servers, making it easier, and faster, to build scenes for video games and movies. OTOY now also integrates with Unity and Unreal Engine, two of the most widely used tools among game developers worldwide.

Integrations like these help companies offload the complex technical work of rendering games, so they can focus on gameplay and art assets instead. Octane 3, the company’s tool, can export Unreal Engine and Unity applications directly to the cloud, where they can be easily shared on the web or streamed to virtual reality devices like the Gear VR.

Normally all of this takes a tremendous amount of time to render properly, but Urbach’s goal was to cut that down to a few seconds. He did it by finding a way to move the rendering technology onto GPUs, which sped it up and cut the time required to render those scenes to a fraction of what it used to be. Along the way, OTOY has raised more than $50 million in financing.

“It’s like Photoshop, everyone understands how light in the real world works,” Urbach said. “You don’t need to do tricks or be a visual effects studio to master and get it [to] look real; it looks real, it’s all physics. My goal was to get the tech on the rendering side to be done and ready to where we could take on projects like [Transformers] and do it at a fraction of the cost, because [Octane 3] can render it like a video game.”

Here’s an example of how the technology works: imagine a mesh of a forest that a video game character is walking through. Rendering all of the lighting properly, so that the forest looks lifelike as the character moves, normally takes a tremendous amount of time and computing power, and is incredibly difficult to do in real time. Octane’s goal is to make it possible to view the scene from any angle, with the lighting, shading and other visuals looking correct and lifelike in real time.

Urbach says switching those processes over to GPUs speeds up rendering by anywhere from 10 to 40 times. That lets scenes and environments render in essentially real time, rather than frame by frame. Another advantage is that the rendering can run on remote servers such as Amazon’s AWS, which allows game developers and artists to scale their computing up or down as needed. (The company has a few demos running on its site.)

If it succeeds, this kind of technology would be a holy grail for virtual and augmented reality, where all of that rendering has to be done in real time as users turn their heads and look around the environment surrounding them. In a perfect world, Urbach says, he is building the holodeck from Star Trek: a real-time holographic environment that shifts and changes with the way the person is looking. Matterport, for example, can capture the inside of an apartment and use Octane to correctly render the lighting on things like furniture as a user looks around different parts of the apartment.

“This is something I had spent all these years in college trying to master with a paintbrush, he mastered with an algorithm,” co-founder Alissa Grainger said. “When we met him he had these renderings of a face in his mom’s living room on the coffee table. He was rendering skin the exact same way [I did when] I was a portrait artist [using watercolor].”

The technology can also give developers a mixed environment that is partly pre-rendered and partly streamed. The forest, for example, can be pre-rendered and saved on the device, while the changes that occur as people move around are computed on cloud servers and streamed in, keeping the experience lifelike and in real time.

Urbach started down this path after he first played Dragon’s Lair, an interactive cartoon game that is basically a film requiring some controller input to determine the hero’s next action. If a player puts in the wrong input, the hero, Dirk, ends up killed by a squid or falling to his death. Every scene, however, was animated in advance, making the whole game feel like a live cartoon. That sort of live entertainment fascinated Urbach, and he wanted to build the technology himself.

“If you played that thing in ’83 the way I did, it blew my mind in ways that very few things have done since then,” he said. “You can have a video game that looks absolutely perfect, that’s the holy grail. I basically kept asking my mom to buy me an arcade machine, it was probably thousands of dollars. It was too expensive, so I said, ‘I’m gonna build this thing myself.’”

Historically, the company sold its software as an application, but it recently began offering a subscription-based model as well, much like other software such as Photoshop. That helped the company lure Autodesk as an investor, Urbach said.

For the most part, OTOY’s business has been funded by its Hollywood work, such as films like The Avengers and commercials for Transformers. That work has no doubt been helped by the company’s connection with Ari Emanuel, an icon in the entertainment industry who sits on the company’s board of advisors. Urbach’s first task was building marketing materials for Transformers, which he used as a testing ground for his technology.

“I remember being told this Transformers [film] model, it takes 40 hours to render one frame, and I was able to get really good quality and I was rendering at 60 frames per second at 4K,” Urbach said. “What’s happened in that time, the quality we have in Octane is pretty much better than what the final output of those films [was] in 2006.”

Still, there are significant hurdles, as the bumpy road encountered by predecessors in the game streaming space shows. The canonical example is OnLive, a service that streamed fully playable video games to computers the way YouTube streams videos, on a good Internet connection at least. Gaikai is another example, but perhaps a brighter one. It was snapped up by Sony, and its technology lives on in PlayStation Now, a subscription-based gaming service similar to OnLive. OnLive may have been slightly too early to properly capitalize on its technology, and OTOY is pushing boundaries too.

Abrams still checks in periodically with Urbach, and his mom, to find out how things are going.

“That was a big validator, I’ve never met a big director like [David] Fincher, they were incredibly interested,” he said. “I really credit those two guys helping me pursue this and push it further, there’s a future if it helps a filmmaker like Abrams [is excited about what you’re working on].”