Since its birth as a technological oddity at a world’s fair, gaming has blossomed into one of the most profitable entertainment industries in the world.

The mobile technology boom of recent years has revolutionized the industry and opened the doors to a new generation of gamers. Indeed, gaming has become so integrated with modern popular culture that even grandmas now know what Angry Birds is; more than 42 percent of Americans are gamers, and four out of five U.S. households have a console.

The Early Years

The first recognized example of a game machine was unveiled by Dr. Edward Uhler Condon at the New York World’s Fair in 1940. The game, based on the ancient mathematical game of Nim, was played by about 50,000 people during the six months it was on display, with the computer reportedly winning more than 90 percent of the games.

However, the first game system designed for commercial home use did not emerge until nearly three decades later, when Ralph Baer and his team completed their prototype, the “Brown Box,” in 1967.

The “Brown Box” was an electronic circuit that could be connected to a television set and allowed two users to control cubes that chased each other on the screen. It could be programmed to play a variety of games, including ping pong, checkers and four sports games. Its accessories were advanced for the time: a light gun for a target-shooting game and a special attachment for a golf putting game.

According to the National Museum of American History, Baer recalled, “The minute we played ping-pong, we knew we had a product. Before that we weren’t too sure.”

The “Brown Box” was licensed to Magnavox, which released the system as the Magnavox Odyssey in 1972. It reached the market a few months before Atari, which is often mistakenly credited with the first games console.

Between August 1972 and 1975, when the Odyssey was discontinued, around 300,000 consoles were sold. Poor sales were blamed on mismanaged in-store marketing campaigns and the fact that home gaming was a relatively alien concept to the average American at the time.

However mismanaged it might have been, this was the birth of the digital gaming we know today.

Onward To Atari And Arcade Gaming

Sega and Taito were the first companies to pique the public’s interest in arcade gaming when they released the electro-mechanical games Periscope and Crown Special Soccer in 1966 and 1967. In 1972, Atari (founded by Nolan Bushnell, the godfather of gaming) became the first gaming company to really set the benchmark for a large-scale gaming community.

Atari not only developed its games in-house but also built a whole new industry around the “arcade.” In 1973, Atari began selling Pong, the first real electronic video game, at $1,095 per machine, and arcade machines began appearing in bars, bowling alleys and shopping malls around the world. Tech-heads realized they were onto something big: between 1972 and 1985, more than 15 companies began developing video games for the ever-expanding market.

The Roots Of Multiplayer Gaming As We Know It

During the late 1970s, a number of chain restaurants around the U.S. started to install video games to capitalize on the hot new craze. The nature of the games sparked competition among players, who could record their high scores with their initials and were determined to mark their space at the top of the list. At this point, multiplayer gaming was limited to players competing on the same screen.

The first example of players competing on separate screens came in 1973 with “Empire,” a strategic turn-based game for up to eight players created for the PLATO network system. PLATO (Programmed Logic for Automatic Teaching Operations) was one of the first generalized computer-based teaching systems. It was originally built by the University of Illinois and later taken over by Control Data Corporation (CDC), which built the machines on which the system ran.

According to usage logs from the PLATO system, users spent about 300,000 hours playing Empire between 1978 and 1985. In 1974, Jim Bowery released Spasim for PLATO, a 32-player space shooter regarded as the first example of a 3D multiplayer game. Access to PLATO was limited to large organizations, such as universities and Atari, that could afford the computers and connections needed to join the network. Even so, PLATO represents one of the first steps on the technological road to the Internet, and to online multiplayer gaming as we know it today.

At this point, gaming was popular with younger generations and was a shared activity, with people competing for high scores in arcades. Few people, however, would have predicted that four out of every five American households would one day own a games system.

Home Gaming Becomes A Reality

As arcade machines spread through commercial centers and chain restaurants in the U.S., the 1970s also saw personal computers and mass-produced gaming consoles become a reality. Technological advancements, such as Intel’s invention of the world’s first microprocessor, led to the creation of games such as Gunfight in 1975, the first example of a multiplayer, human-to-human combat shooter.

While far from Call of Duty, Gunfight was a big deal when it first hit arcades. It came with a new style of gameplay, using one joystick to control movement and another for shooting direction — something that had never been seen before.

In 1977, Atari released the Atari VCS (later known as the Atari 2600), but found sales slow, selling only 250,000 machines in its first year, then 550,000 in 1978 — well below the figures expected. The low sales have been blamed on the fact that Americans were still getting used to the idea of color TVs at home, the consoles were expensive and people were growing tired of Pong, Atari’s most popular game.

When it was released, the Atari VCS was designed to play only 10 simple challenge games, such as Pong, Outlaw and Tank. However, the console included an external ROM slot into which game cartridges could be plugged. Programmers around the world quickly discovered its potential and created games that far exceeded the console’s original design.

The integration of the microprocessor also led to the release of Space Invaders for the Atari VCS in 1980, signifying a new era of gaming — and sales: Atari 2600 sales shot up to 2 million units in 1980.

As home and arcade gaming boomed, so too did the gaming community. From the mid-1970s to the early 1980s, hobbyist magazines such as Creative Computing (1974), Computer and Video Games (1981) and Computer Gaming World (1981) appeared. These magazines created a sense of community and offered a channel through which gamers could engage with one another.

Personal Computers: Designing Games And Opening Up To A Wider Community

The video game boom caused by Space Invaders saw a huge number of new companies and consoles pop up, resulting in a period of market saturation. Too many gaming consoles, and too few interesting, engaging new games to play on them, eventually led to the 1983 North American video games crash, which saw huge losses, and truckloads of unpopular, poor-quality titles buried in the desert just to get rid of them. The gaming industry was in need of a change.

At more or less the same time that consoles started getting bad press, home computers like the Commodore VIC-20, the Commodore 64 and the Apple II started to grow in popularity. These new home computers were affordable for the average American, retailing at around $300 in the early 1980s (around $860 in today’s money), and were advertised as the “sensible” option for the whole family.

These home computers had much more powerful processors than the previous generation of consoles, which opened the door to a new level of gaming with more complex, less linear games. They also gave gamers the tools to create their own games in BASIC. Even Bill Gates designed a game, called Donkey (a simple game that involved dodging donkeys on a highway while driving a sports car). Interestingly, the game was revived as an iOS app in 2012.

Although rivals at Apple described the game at the time as “crude and embarrassing,” Gates included it to inspire users to develop their own games and programs in the built-in BASIC language.

Magazines like Computer and Video Games and Computer Gaming World printed BASIC source code for games and utility programs, which readers could type into their early PCs. The magazines also accepted readers’ own games, programs and code submissions and shared them with other readers.

In addition to giving more people the means to create their own games in code, early computers also paved the way for multiplayer gaming, a key milestone in the evolution of the gaming community.

Early computers such as the Macintosh and the Atari ST allowed users to connect their machines with other players as early as the late 1980s. In 1987, MidiMaze was released on the Atari ST with a feature that linked up to 16 computers by connecting one computer’s MIDI-OUT port to the next computer’s MIDI-IN port.

While many users reported that more than four players at a time slowed the game dramatically and made it unstable, this was the first step toward the idea of a deathmatch, which exploded in popularity with the release of Doom in 1993 and is one of the most popular types of games today.

Multiplayer gaming over networks really took off with the release of Pathways into Darkness in 1993, and the “LAN party” was born. LAN gaming grew more popular with the release of Marathon on the Macintosh in 1994, and especially after the first-person multiplayer shooter Quake hit stores in 1996. By this point, Windows 95 and affordable Ethernet cards had brought networking to the Windows PC, further expanding the popularity of multiplayer LAN games.

The real revolution in gaming came when LAN networks, and later the Internet, opened up multiplayer gaming. Multiplayer gaming took the gaming community to a new level because it allowed fans to compete and interact from different computers, which improved the social aspect of gaming. This key step set the stage for the large-scale interactive gaming that modern gamers currently enjoy. On April 30, 1993, CERN put the World Wide Web software in the public domain, but it would be years before the Internet was powerful enough to accommodate gaming as we know it today.

Image: Wikimedia Commons/Evan-Amos

The Move To Online Gaming On Consoles

Long before gaming giants Sega and Nintendo moved into online gaming, many engineers attempted to use telephone lines to transfer information between consoles.

William von Meister unveiled groundbreaking modem-transfer technology for the Atari 2600 at the Consumer Electronics Show (CES) in Las Vegas in 1982. The new device, the CVC GameLine, enabled users to download software and games using their fixed telephone connection and a cartridge that could be plugged in to their Atari console.

The device allowed users to “download” multiple games from programmers around the world, which could be played for free up to eight times; it also allowed users to download free games on their birthdays. Unfortunately, the device failed to gain support from the leading games manufacturers of the time, and was dealt a death-blow by the crash of 1983.

Real advances in “online” gaming wouldn’t take place until the fourth-generation, 16-bit consoles of the early 1990s, after the Internet as we know it entered the public domain in 1993. In 1995, Nintendo released Satellaview, a satellite modem peripheral for its Super Famicom console. The technology allowed users to download games, news, cheats and hints directly to their consoles via satellite. Broadcasts continued until 2000, but the technology never made it out of Japan to the global market.

Between 1993 and 1996, Sega, Nintendo and Atari made a number of attempts to break into “online” gaming through cable providers, but none of them really took off because of slow connection speeds and problems with the cable providers. It wasn’t until the release of the Sega Dreamcast, the world’s first Internet-ready console, in 1998 (1999 in North America and Europe), that real advances were made in online gaming as we know it today. The Dreamcast came with an embedded 56 Kbps modem and a copy of the latest PlanetWeb browser, making Internet-based gaming a core part of its setup rather than a quirky add-on used by a minority of users.

The Dreamcast was a truly revolutionary system and the first net-centric device to gain popularity. However, it was also a massive commercial failure that effectively ended Sega’s run as a console maker. Accessing the Internet was expensive at the turn of the millennium, and Sega ended up footing huge bills as people around the world used its PlanetWeb browser.

Experts attributed the console’s failure to its Internet-focused technology being ahead of its time, as well as to the rapid evolution of PC technology in the early 2000s, which led people to doubt the value of a console designed solely for gaming. Despite its failure, the Dreamcast paved the way for the next generation of consoles, such as the Xbox (2001). The manufacturers of these new consoles learned from the Dreamcast’s net-centric focus and improved on it, making online functionality an integral part of the gaming industry.

The release of RuneScape in 2001 was a game changer. MMORPGs (massively multiplayer online role-playing games) allow millions of players worldwide to play, interact and compete against fellow fans on the same platform. The games also include chat functions, allowing players to communicate with other players whom they meet in-game. These games may seem outdated now, but they remain extremely popular within the dedicated gaming community.

The Modern Age Of Gaming

Since the early 2000s, Internet capabilities have exploded and computer processor technology has improved at such a fast rate that every new batch of games, graphics and consoles seems to blow the previous generation out of the water. The cost of technology, servers and Internet access has dropped so far that high-speed Internet is now commonplace, and 3.2 billion people across the globe have access to the Internet. According to the ESA’s computer and video game industry report for 2015, at least 1.5 billion people with Internet access play video games.

Online storefronts such as Xbox Live Marketplace and the Wii Shop Channel have totally changed the way people buy games, update software and communicate and interact with other gamers, and networking services like Sony’s PSN have helped online multiplayer gaming reach unbelievable new heights.

Technology allows millions around the world to enjoy gaming as a shared activity. The recent ESA gaming report showed that 54 percent of frequent gamers feel their hobby helps them connect with friends, and 45 percent use gaming as a way to spend time with their family.

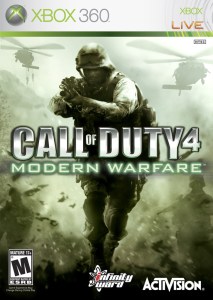

By the time of the Xbox 360 release, online multiplayer gaming was an integral part of the experience (especially “deathmatch” modes in games such as Call of Duty: Modern Warfare, played against millions of peers around the world). Nowadays, many games have an online component that vastly improves the gameplay experience and interactivity, often superseding the importance of the player’s offline game objectives.

“What I’ve been told as a blanket expectation is that 90% of players who start your game will never see the end of it…” says Keith Fuller, a longtime production contractor for Activision.

As online first-person shooter games became more popular, gaming “clans” began to emerge around the world. A clan, guild or faction is an organized group of video gamers that regularly play together in multiplayer games. Clans range from groups of a few friends to 4,000-person organizations with a broad range of structures, goals and members. Several online platforms rate clans against each other and let them organize battles and meet-ups online.

The Move Toward Mobile

Since modern smartphones and app stores hit the market in 2007 and 2008, gaming has undergone yet another rapid evolution that has not only changed the way people play games but also brought gaming into mainstream pop culture in a way never seen before. Rapid developments in mobile technology over the last decade have created an explosion of mobile gaming, which is set to overtake console-based gaming in revenue in 2015.

This huge shift in the gaming industry toward mobile, especially in Southeast Asia, has not only widened gaming demographics but also pushed gaming to the forefront of media attention. Like the early gaming fans who joined niche forums, today’s users have rallied around mobile gaming, and the Internet, magazines and social media are full of commentary on new games and industry gossip. As always, gamers’ blogs and forums are filled with new game tips, and sites such as Macworld, Ars Technica and TouchArcade promote games from lesser-known independent developers as well as traditional gaming companies.

The gaming industry was previously dominated by a handful of companies, but in recent years, companies such as Apple and Google have been climbing the rankings thanks to game sales through their app stores. The time-killing nature of mobile gaming appeals to so many people that a basic game such as Angry Birds made Rovio $200 million in 2012 alone and passed two billion downloads in 2014.

More complex massively multiplayer games, such as Clash of Clans on mobile devices and League of Legends on the PC, are bringing in huge sums each year and connecting millions of players around the world.

The Future

The move to mobile technology has defined the recent chapter of gaming, but while on-the-move gaming is well suited to the busy lives of millennials, gaming on mobile devices also has its limitations. Phone screens are small (well, at least until the iPhone 6s came out), and the processor speeds and internal memory of most cellphones limit gameplay possibilities. According to a recent VentureBeat article, mobile gaming is already witnessing its first slump: revenue growth has slowed, and the costs of doing business and of distribution have risen dramatically over the last few years.

Although mobile gaming has caused the death of handheld gaming devices, consoles are still booming, and each new generation of console ushers in a new era of technology and capabilities. Two technologies that could well play a key role in the future of gaming are virtual reality and artificial intelligence.

Virtual reality (VR) company Oculus was acquired by Facebook in 2014 and is set to release its Rift headset in 2016. The headset seems ideally suited to the video games industry and could allow gamers to “live” inside an interactive, immersive 3D world. The opportunity to create fully interactive, dynamic “worlds” for MMORPGs, in which players could move around, interact with other players and experience digital landscapes in a totally new dimension, could be within arm’s reach.

There have been many advances over the last few years in language-processing artificial intelligence. In 2014, Google acquired DeepMind; this year, IBM acquired AlchemyAPI, a leading provider of deep-learning technology; and in October 2015, Apple made two AI acquisitions in less than a week. Two of the areas under development are more accurate voice recognition and open-ended dialogue with computers.

These advances could signify an amazing new chapter for gaming, especially if combined with VR, as they could allow players to interact with characters within games who would respond to questions and commands with intelligent, seemingly natural answers. In first-person shooters, sports games and strategy games, players could effectively command the computer to complete in-game tasks, since advances in voice recognition accuracy would let the computer understand commands spoken through a headset.

If the changes that have occurred over the last century are anything to go by, gaming in 2025 will likely be almost unrecognizable from how it is today. Although Angry Birds has been a household name since its release in 2009, it is unlikely to be remembered as fondly as Space Invaders or Pong. Throughout its progression, gaming has seen multiple trends wax and wane, then be totally replaced by another technology. The next chapter for gaming is still unclear, but whatever happens, it is sure to be entertaining.