“The epicenter of change.”

That’s Apple’s slogan for this year’s Worldwide Developers Conference (WWDC), and it resonates with me in a special way.

Event after event, Tim Cook makes a point to remind the audience that Apple’s ethos is to build best-of-breed products that change people’s lives. I believe Cook to be sincere, if for no other reason than the work the company does in supporting accessibility. In iOS and OS X, Apple has made good on its word to change people’s lives by continually improving the tools that, quite literally, change the way a person with disabilities (such as myself) uses an iPhone and iPad.

But it isn’t only Apple that’s doing good. Third-party developers have a responsibility to incorporate accessibility into their apps as well, and that’s where WWDC comes in. Apple provides numerous resources to developers during the conference that help them ensure that their apps are as accessible as possible.

The accessibility presence at WWDC is deep and far-reaching; Apple does much to raise awareness of and advocate for the accessibility community. Apple this week granted me behind-the-scenes access to sessions, labs, and developer interviews at Moscone so as to tell WWDC’s accessibility story.

Sessions

Arguably the most important part of WWDC from a developer’s perspective is the sessions. This is where Apple engineers present information about APIs and demonstrate how developers can integrate them into their apps. Just as there are sessions dedicated to things like user interface design and privacy, so too are there ones for accessibility.

Sessions are helmed by a member of the respective platform’s Accessibility team. There are dedicated sessions for iOS, OS X, and, new for 2015, watchOS. In broad strokes, the accessibility sessions are about best practices for supporting accessibility. The presenter focuses on an attribute of Accessibility like, say, VoiceOver, and demonstrates how to properly integrate it into an app, making it work with labels and images. Troubleshooting techniques and tips and tricks are also discussed.

The technical aspects of the sessions related to coding went largely over my head, but I did learn a few things that I didn’t know before. For instance, I learned about some UI mechanics of Apple Watch involving the Digital Crown and the Zoom feature: a user can turn the crown to move line by line when reading text with Zoom turned on.

Frameworks Labs

As with the sessions, the Frameworks Labs are an integral part of a developer’s experience at WWDC. Here, developers can meet with Apple engineers to get help and ask questions about their app. Developers can demo their app to engineers, and get real-time feedback on what works and what doesn’t. Engineers are even able to look at and make suggestions for an app’s code so as to make it work as it should.

These Frameworks Labs extend to accessibility as well. Members of Apple’s Engineering and Accessibility QA teams are on hand to answer developers’ questions about supporting Accessibility. There is also an opportunity for “accessibility audits,” where an engineer will sit down with a developer’s app and give feedback. It is during these audits that an engineer will determine whether a feature such as VoiceOver is functioning properly, and give tips as needed.

Apple told me that press access to the labs was unprecedented, so I made sure to soak up every second of my time there. It was a bustling place, with people huddled at tables chattering about things like VoiceOver and Large Dynamic Type.

To me, the labs were one of the most exciting parts of my visit. It seemed to me that the labs are where the action is at WWDC. Developers want to visit the labs because they’ve gone to sessions and want to implement accessibility (among other things) the right way.

The room was full of enthusiasm, which warmed my heart to see. As a person with disabilities, it’s thrilling for me to see others make concerted efforts to ensure that their apps are usable by all.

Interviews With Developers

I had an opportunity to speak with several developers, and my high-level take of our discussions is this: they care — deeply so.

What I mean by “care” is that the developers I spoke to shared genuine feelings of empathy and a desire to change the world for the better for people like me. They want everyone with an iOS device and/or Apple Watch to enjoy their apps just as much as the fully-abled do.

Of the many developers I spoke with, there were three conversations that especially stood out.

Workflow

First is the team behind the iOS automation app, Workflow. I’ve known about Workflow for a while and have it on my devices, but have mostly ignored it due to being intimidated by its power. Still, I jumped at the chance to sit down with Workflow’s three-person creative team: Ari Weinstein, Conrad Kramer, and Nick Frey.

In terms of accessibility, Workflow is noteworthy in that it’s the first app to win an Apple Design Award for accessibility. (Of note, Apple’s made the ADA ceremony available online. At the 35:00 mark is the Workflow demo, done by two Apple accessibility engineers who are blind.)

Weinstein and his team gave me a demo of their app, and explained to me that their focus, accessibility-wise, was on the visually impaired. Workflow uses VoiceOver to guide users in creating workflows by announcing selected actions and where they’re supposed to be placed in a sequence. It works very well.

Weinstein explained to me that the impetus for supporting VoiceOver was the comments he and his team received from the accessibility community. He said that many users with vision impairments were curious about Workflow and wanted to try it, and asked that the app be made accessible to them. Moreover, Weinstein stated that supporting accessibility felt like the right thing to do.

What strikes me about Workflow is not only its capability but that this capability is available to the visually impaired. Here’s an app that can automate many tasks, which is not only convenient but practical. For many visually impaired users, getting directions home, for example, can be cumbersome because of the visual strain required to orient oneself to the Maps interface (for example, finding the correct buttons to tap). Workflow lets you do this with just one tap.

AssistiveWare

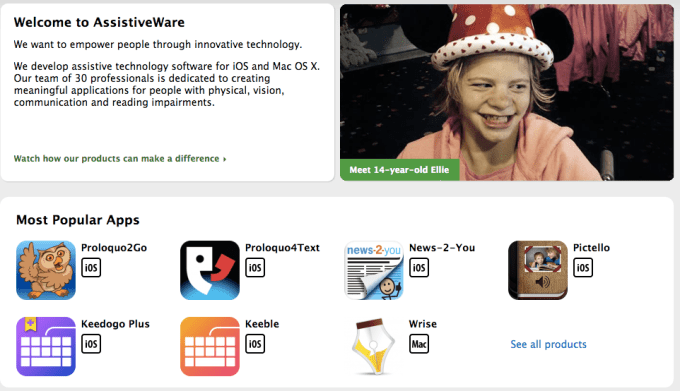

I met with AssistiveWare founder & CEO, David Niemeijer, who demoed two of his company’s apps to me, Keedogo and Proloquo2Go.

Keedogo is an iPad keyboard designed for elementary-aged children, and has many accessible traits. (I wrote about Keedogo in more detail for MacStories last year.) Proloquo2Go, also for iPad, is an app designed for non-verbal children, particularly those with autism. It’s based on the PECS system, a teaching methodology designed specifically for children with autism in order to facilitate expressive language.

It was obvious from speaking with David that he is invested in the welfare of those who use his products. He, too, wants to make a difference in the lives of people of all abilities and needs. Something David said that has stuck with me is that the tools he builds are only possible because Apple built the developer frameworks in the first place. Apple’s tools, he said, are so good that they make his job of building apps like Proloquo2Go that much easier.

Ludwig Project

During Monday’s keynote, Apple showed a video featuring various developers and their apps, while also discussing the impact apps have had on the world and the iOS ecosystem.

I had a chance to sit down with one of the developers in the film, Raphael Augusto Silva. Silva is the co-founder and lead developer at the Ludwig Project.

His app, an iPad piano, stands out because he and his team wish to bring music to the hearing impaired. The idea, Silva told me, is that the hearing impaired can sense music through vibration. Tapping the keys on the iPad’s screen will register a tactile sensation on the attached wrist strap; the more taps, the more you feel. The concept is that the hearing impaired will be able to experience beats through this form of haptic feedback.

While imperfect right now, the concept is wonderfully ambitious. As someone who grew up with deaf parents, I am keenly aware of the uniqueness of the deaf community and the issues they face. Thus, Silva’s app is something I was immediately drawn to, and it was terrific talking with him.

Closing Thoughts

The comment from Cook about not always doing things for “the bloody ROI” feels not at all like an empty bromide.

My experiences at Moscone this week serve as further confirmation that Apple isn’t about lip service in this regard. While accessibility is and always will be something of a niche, it’s wonderful to see Apple go to such lengths to prioritize accessibility.

We (the accessibility community) are on Apple’s radar, and, in my view at least, it feels great to be acknowledged.

WWDC is the center of the Apple universe — and accessibility plays a key role in its success.