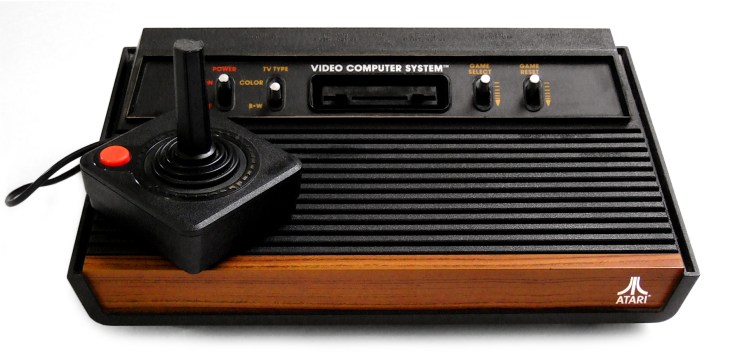

Google has built an artificial intelligence system that can teach itself to play video games, and to excel at them, with no commands beyond a simple instruction to play. The project, detailed by Bloomberg, is the result of research from DeepMind, the London-based AI startup Google acquired last year, and involves 49 games for the Atari 2600, the console that likely provided the first video game experience for many of those reading this.

While this announcement is impressive for many reasons, the most striking part might be how the AI fared against people and programs alike. It performed at a level comparable to an expert meat-based player across the set, reaching more than 75 percent of that player's score in 29 of the 49 games it learned, and it beat existing computer-based players in a whopping 43.

Google and DeepMind aren’t just looking to put their initials atop high-score screens in arcades everywhere with this project. The long-term goal is to create the building blocks for optimal problem solving given a set of criteria, which would be useful anywhere Google hopes to apply AI in the future, including self-driving cars. According to Bloomberg, Google is calling this the “first time anyone has built a single learning system that can learn directly from experience,” an approach with potential in a virtually limitless number of applications.

It’s still an early step, however, and Google expects it’ll be decades before it achieves its goal of building general-purpose machines that have their own intelligence and can respond to a range of situations. Still, the system doesn’t require the arduous training and hand-holding that other systems need to learn what they’re supposed to do, which is a big leap even from the likes of IBM’s Watson supercomputer.

Next up for the arcade AI is mastering the 3D virtual worlds of the Doom era, which should help it edge closer to mastering similar tasks in the real world, like driving a car. And there’s one more detail here that may keep you up at night: Google trained the AI to get better at the Atari games using a virtual take on operant conditioning, ‘rewarding’ the computer for successful behavior the way you might reward a dog.
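In machine learning terms, that dog-training approach is reinforcement learning. DeepMind's published system paired a technique called Q-learning with a deep neural network that reads raw screen pixels, which is far too much for a short example. The sketch below swaps in plain tabular Q-learning on a hypothetical six-cell "corridor" game where the only treat is a reward of +1 for reaching the last cell. Every name here (`step`, `train`, `best_action`, `greedy_policy`) and every hyperparameter is an illustrative assumption, not DeepMind's code.

```python
import random

# Hypothetical toy "game" (not an Atari title): a corridor of cells 0..N-1.
# The agent starts in cell 0 and earns +1 only when it reaches the last cell.
N_STATES = 6
ACTIONS = (-1, +1)  # step left or step right


def step(state, action):
    """Apply an action and return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    if next_state == N_STATES - 1:
        return next_state, 1.0, True
    return next_state, 0.0, False


def best_action(q, state, rng):
    """Highest-valued action in `state`, breaking ties at random."""
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning: nudge Q(s, a) toward reward + discounted future value."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: mostly exploit the best-known action, sometimes explore.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = best_action(q, state, rng)
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            # The "treat": a reward raises the value of the move that earned it,
            # and that value gradually propagates back to earlier moves.
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q


def greedy_policy(q):
    """Best learned action for each non-terminal cell."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]


if __name__ == "__main__":
    print(greedy_policy(train()))
```

Nobody tells the agent that "right" is the goal; after a few hundred rewarded episodes it should settle on stepping right (+1) from every cell purely because those moves led to the treat. DeepMind's version replaces the lookup table with a neural network so the same idea can scale to game screens.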