Metaio is demoing a possible next step in the evolution of input control for wearable computing devices, and it should be no surprise that augmented reality plays a role.

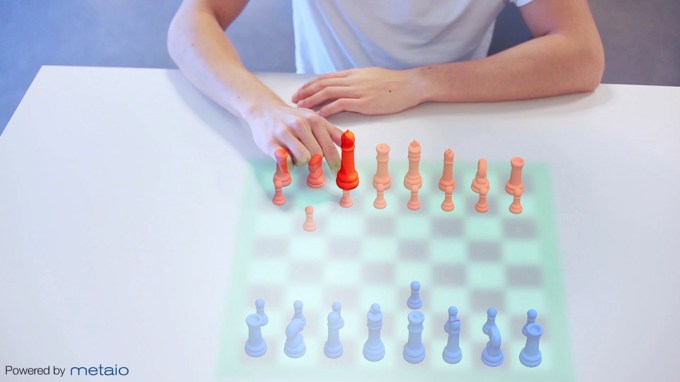

There is a known UI problem with which many software and hardware makers have grappled: what is the best way to interact with and control a HUD (heads-up display)? For example, how do you move a piece on a virtual chessboard that sits on a real table?

There are several approaches in use today for these kinds of situations: touching physical buttons or controllers connected to the HUD (as on Oculus Rift or Google Glass), voice commands (as on Google Glass), depth-aware gestures (as on Meta SpaceGlasses), and others.

However, many of these are problematic: they are bulky, noisy, or unnatural, or they make you look like a doofus to the world around you (e.g., swiping virtual menus in thin air).

The Metaio engineers have a novel concept that attempts to solve this first-world conundrum. It works like this:

Hook up a thermal camera to the device you are wearing and track the heat signature your finger leaves behind on real-world objects you touch. That lingering heat signature can be used to trigger actions in the digital content you see in your HUD, just like a mouse click or a touch.
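To make the idea concrete, here is a minimal sketch of the detection step. It is not Metaio's implementation: the function name `find_touch`, the list-of-lists frame format, and every threshold are hypothetical choices made for illustration. It assumes the thermal camera delivers a grid of surface temperatures in degrees Celsius and that the ambient surface temperature is already known.

```python
from typing import List, Optional, Tuple

# Assumed frame format: rows of temperatures in degrees Celsius.
Frame = List[List[float]]


def find_touch(
    frame: Frame,
    ambient_c: float,
    min_rise_c: float = 1.0,   # hypothetical: smallest rise that counts as a trace
    max_rise_c: float = 6.0,   # hypothetical: anything hotter is the hand itself
    min_pixels: int = 3,       # hypothetical: ignore isolated noisy pixels
) -> Optional[Tuple[float, float]]:
    """Return the (row, col) centroid of a lingering warm spot, or None."""
    row_sum, col_sum, count = 0.0, 0.0, 0
    for r, line in enumerate(frame):
        for c, temp in enumerate(line):
            rise = temp - ambient_c
            # Keep pixels only slightly warmer than the surface. Much hotter
            # pixels are assumed to be the finger, not the heat it left behind.
            if min_rise_c <= rise <= max_rise_c:
                row_sum += r
                col_sum += c
                count += 1
    if count < min_pixels:
        return None
    return (row_sum / count, col_sum / count)


# A 5x6 surface at 22 C with a small 24.5 C fingerprint and a 33 C fingertip
# still in view at the corner.
frame = [[22.0] * 6 for _ in range(5)]
for r, c in [(1, 2), (1, 3), (2, 2), (2, 3)]:
    frame[r][c] = 24.5
frame[4][5] = 33.0

print(find_touch(frame, ambient_c=22.0))  # (1.5, 2.5)
```

The returned centroid is what an application could map onto the virtual content and treat as a click. A real system would also have to register the thermal image with the HUD's view and cope with the trace fading over time, which this sketch ignores.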

Watch the video. Several of its visualizations are enhanced with after-effects to show how the team ultimately envisions the concept working, but it does show the working prototype near the end, which is quite interesting.

I’m not going to say that you wouldn’t also look like a doofus punching in virtual key codes or dialing phone numbers on a blank wall, but still, somehow, interacting with real-world objects seems more natural. More human.

The team I spoke with at Metaio thinks the technology is still approximately five years away from being market-ready, but that hasn’t stopped them from building a prototype demonstrating their concept.

They’ve built it into a tablet for now, but the use case is really geared toward HUDs or other interactive eyewear. They will be demoing this prototype at the 2014 Augmented World Expo taking place in Santa Clara, May 27-29.