Public school children have become lab rats of policymakers who are eager to see change faster than we can study what works. Experimental reforms are often founded on the lackluster research of ideological think tanks, which have filled the expertise vacuum left by academics unwilling to conduct policy-related research. “I’ve reviewed some just God awful stuff,” cringes Rutgers Professor Bruce Baker, whose influential data-driven education blog, schoolfinance101, has helped him become a go-to reviewer for policy reports.

For example, he notes, the libertarian-happy think tank the Reason Foundation concluded that a controversial program to peg funding to student improvement had worked, but forgot to highlight that the policy was adopted after the changes had begun. “I started realizing that there’s this never-ending flow of misinformation and disinformation out there,” he said.

Like Nate Silver, whose statistically nuanced election forecast blog posts proved influential, Baker has gained notoriety for reexamining data to trounce his adversaries’ conclusions. And, with Silver’s new independent 538 channel, Baker’s brand of statistics-heavy argument could be the future of education journalism.

Stop Cheerleading Education Miracles, i.e. Education Effects Are Small

“We really have failed in the teaching of mathematics and probability,” decries Baker, who regularly debunks myths about unicorn policy changes that radically improve student outcomes. At scale, experiments rarely move the needle more than a few percentage points. Statisticians measure outcomes in “standard deviations”, or how students move relative to their peers.

A full standard deviation is, on average, the distance from the back of the pack (roughly the 16th percentile, if scores follow a bell curve) to average (50th percentile). “If anyone starts saying they’re getting you a half or full standard deviation additional growth, that’s when the bullshit detector starts going off.”
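To get a feel for how large these effects are, here is a minimal sketch that converts a gain in standard deviations into a change in percentile rank. It assumes test scores follow a normal (bell curve) distribution, which real score data only approximates, and the function name `percentile_after_gain` is made up for illustration. Under that assumption, a student one standard deviation below the mean sits at roughly the 16th percentile.

```python
from statistics import NormalDist


def percentile_after_gain(start_percentile: float, gain_sd: float) -> float:
    """Percentile rank reached after moving up `gain_sd` standard deviations.

    Assumes scores are normally distributed (an illustrative assumption).
    """
    scores = NormalDist()  # standard normal: mean 0, standard deviation 1
    z_start = scores.inv_cdf(start_percentile / 100)  # starting z-score
    return scores.cdf(z_start + gain_sd) * 100


# Compare a modest gain with the "miracle" half and full standard deviations.
for gain in (0.1, 0.5, 1.0):
    end = percentile_after_gain(16, gain)
    print(f"{gain:.1f} SD gain from the 16th percentile -> about the {end:.0f}th")
```

Run with a starting point near the 16th percentile, a full standard deviation carries a student to about the middle of the pack, which is why claims of that size deserve a second look.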

When a new Stanford University study found that Washington D.C.’s controversial pay-for-performance teacher policy had a half-standard deviation impact on quality, newspaper headlines lit up. “Study Finds Gains From Teacher Evaluations,” read the New York Times Economix blog.

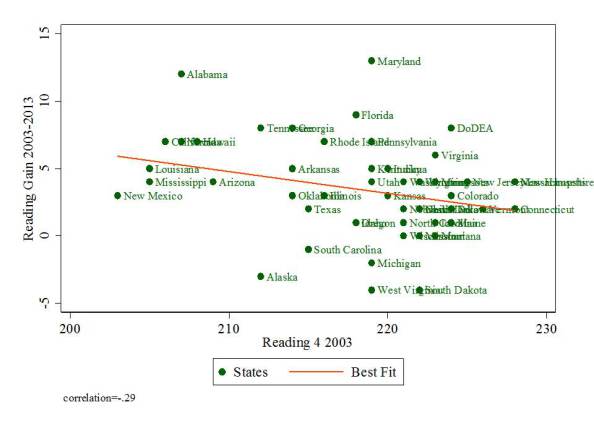

Although data buried within the report showed that the reform had slightly increased the number of good teachers who didn’t quit, there was virtually no impact on student outcomes. Even worse, it ignored the fact that many other school districts have attempted similar strategies, with widely varying results. Baker put together a blog post soon after, re-organizing all the reform-minded states in relation to their students’ initial starting points on reading and math.

In the admittedly ugly graph above, states should be showing great gains (above the red line)–but the effect is mixed. Stanford’s own post was careful to note the researchers’ skepticism, but that was missed by many media reports. This hasn’t stopped some states from adopting radical pay-for-performance schemes.

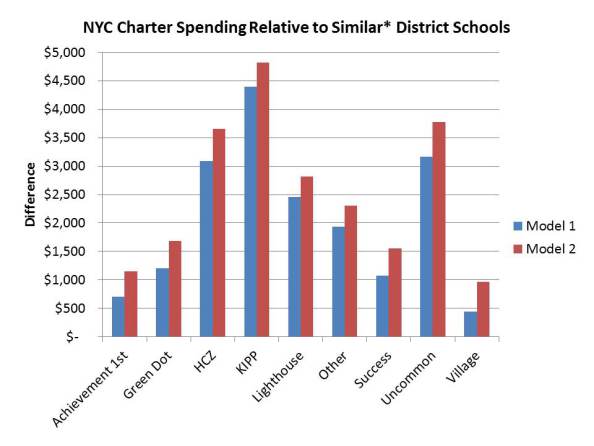

Miracle charters have also been a favorite target for Baker, who finds that many apparent success stories of market-driven schools actually end up spending far more per pupil than similar public schools.

The report was searing enough to make the popular KIPP charter respond, a debate that added a healthy amount of skepticism to reform discussions in notable education trade journals.

His one mantra in reading education reports: “avoid certainty.”

The Academic Glacier

Academics and news media are on radically different timescales; news cycles last maybe 24 hours, while peer-review publishing shuffles at a comfortable pace of a year or longer for a single paper. To get the most bang for the buck, schools like Stanford are releasing reports to the press before the peer-review process.

“Even with the big research studies that get released these days, the way to get recognized is by staging a big press release, and putting the study out long before it’s actually peer reviewed and appears in a journal,” says Baker. “Having a response out within a week oftentimes isn’t even fast enough.”

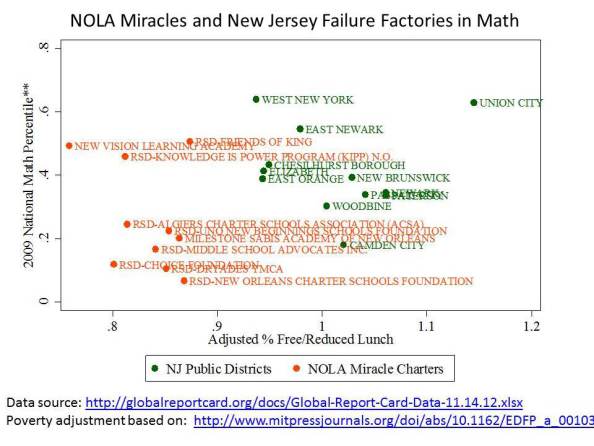

So, Baker combines lightning-quick posts with previous academic research. For instance, to debunk a myth that Louisiana’s charter schools were outperforming New Jersey’s “Failure Factories,” he compared math scores in both states, re-evaluated using a peer-reviewed statistical procedure for accurately assessing poverty rates.

According to Baker, the cost of living varies by state, so we can’t use national averages of income to compare districts. When states are re-weighted by regional poverty, it’s clear that New Jersey is likely doing pretty well, considering the number of poor students they’re dealing with.

The problem is, education is tricky. Nobel Prize-winning economist James Heckman once quipped that they’d love to evaluate students by yearly improvement, but “children do not develop in nine-month chunks except during gestation.”

Unless a study is randomized, rigorously controlled and published in a peer-reviewed journal, arm your bullshit meter if anyone is claiming they’ve found a scalable solution. Until then, follow Baker for his response on hyped-up reform stories. I do.