Augmented reality pioneers Metaio hardly qualify as a startup these days (the company was incorporated in 2003 and is funded by a stream of project work from the likes of Volkswagen and IKEA), but they behave very much like one and are constantly inventing new systems for their considerable augmented reality SDK.

Many of their announcements come from insideAR, an annual event that they host in their hometown of Munich, Germany.

This year there were many announcements, ranging from their new “Edge-Based Tracking” methodology to Junaio Mirage, the new augmented reality browser they built for Google Glass. The event took place in October, but I recently had a chance to speak with Metaio CEO Thomas Alt and discuss some of these announcements in greater detail, along with his viewpoint on the general state of augmented reality today. You can read the interview below (or just skip to the bottom to see the highlight reel).

JD: At insideAR 2013, you were talking about “Always on, always augmented.” That was one of the mantras I heard come out of the conference. What did you mean by that?

TA: “Always on, always augmented” means that you are essentially deploying augmented reality continuously. In our view this has three elements. One is the hardware you are using (form factors). The second one is the underlying technology; the critical use cases to actually do augmented reality. And [thirdly], coming back to the hardware, we’ve seen over the last 12 months that everyone, all of a sudden, is looking into wearable computing as being a platform for augmented reality.

In my view there is something true about it. If you think about augmented reality, which is essentially the capability of picking up arbitrary real objects in the environment and then furnishing information about them…wearable computing makes complete sense. So at insideAR we announced that we are shipping our new Metaio SDK 5.0, which is optimized on and for wearable computing. This means you can now deploy augmented reality on Vuzix M100 and on Google Glass.

JD: Vuzix has been around and creating wearables for a while. And even though they’ve been around longer, Google Glass, of course, gets much of the spotlight. But what’s next in wearables? Are there trends you are following?

TA: Yes, definitely. There are many other companies considering wearable computing as the next big thing. To have high fidelity in head-worn displays, you really need binocular, see-through displays, and this is coming. But smartwatches are also something that we see as very vital to the curve of augmented reality. This is because most smartwatches now have displays and cameras. You can use your arm to look around you and see things that push information to the user. So it’s not only head-worn displays.

JD: Yeah, a smartwatch might be a little less weird than holding a smartphone up to look at something.

TA: Yes, this is one of the reasons we think wearable computing (and smartwatches in particular) is interesting. One inherent problem, obviously, is that you are running on very small battery capacities.

JD: One question about that…do you have a way to do more things in the cloud for low battery consumption? For example, a smartphone has a processor and reasonably long battery life, so it can do a lot of the AR calculations on the device itself. But do you have a scenario for, say, a smartwatch (that has low battery capacity and processing power), where the device and SDK can capture the data and maybe do the data crunching in the cloud and send back results? Am I imagining that? Would something like that work? Or is that not possible?

TA: Yes, this is also a trend we are seeing. At Metaio, we call it Continuous Visual Search, which is the capability of offloading certain parts of the computer vision tasks to the cloud. We also demoed at insideAR a system that detects 70,000 DVD covers in the cloud…so you need your wearable device only to initially capture your environment, and then the computer vision processing is done in the cloud. This is not only research; we are actually shipping this capability already as part of our uniform SDK offering.
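To make the division of labor concrete, here is a minimal Python sketch of the offloading pattern described above. It is an illustration under stated assumptions, not Metaio's Continuous Visual Search: the `CloudIndex` class, the `describe_frame` helper, and the tiny eight-pixel "frames" are all hypothetical, and a simple brightness hash stands in for real image descriptors. The device only captures and compresses a frame; the comparison against the stored covers happens on the (simulated) server.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Match:
    label: str
    distance: int


def describe_frame(pixels: List[int]) -> int:
    """Device side: reduce a tiny grayscale frame to one bit per pixel
    (1 if the pixel is brighter than the frame's mean, else 0)."""
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two descriptors."""
    return bin(a ^ b).count("1")


class CloudIndex:
    """Hypothetical stand-in for a server-side database of known covers."""

    def __init__(self, descriptors: Dict[str, int]) -> None:
        self._descriptors = dict(descriptors)

    def search(self, query: int, max_distance: int = 2) -> Optional[Match]:
        """Return the closest stored cover, or None if nothing is close enough."""
        best = min(
            (Match(label, hamming(query, d)) for label, d in self._descriptors.items()),
            key=lambda m: m.distance,
            default=None,
        )
        if best is None or best.distance > max_distance:
            return None
        return best


# Server side: index two made-up covers.
cover_a = [10, 200, 30, 220, 15, 210, 25, 230]
cover_b = [200, 10, 220, 30, 210, 15, 230, 25]
index = CloudIndex({
    "cover_a": describe_frame(cover_a),
    "cover_b": describe_frame(cover_b),
})

# Device side: capture a slightly noisy view of cover_a and send only its descriptor.
camera_frame = [20, 190, 40, 200, 30, 205, 10, 240]
result = index.search(describe_frame(camera_frame))
```

In this toy setup the wearable transmits a single small integer per frame, which is the point of the pattern: the expensive search over a large catalog never runs on the battery-constrained device.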

JD: Tell me about “Edge-Based Tracking”. How is this different from other AR technologies out there? Is this Metaio’s special sauce?

TA: The need for edge-based tracking came from customer requirements. If you look around you, most computer vision systems are working on textured surfaces (we call it a point cloud-based or feature-based approach), and the challenge with that is that if you have reflective surfaces, like the painted body of a car, you can hardly track on that.

So edge-based tracking actually came from a customer requirement on the VW Marta project we were working on, where a service technician would stand in front of the car and need to track the outside of the car. Edge-based tracking is different in the sense that you are not using textured surfaces but rather the edges of a 3D model. For anything with reflective surfaces, even things like glossy magazines, this approach is perfect.
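The idea of matching a model's edges against the image, rather than matching surface texture, can be sketched in a few lines of Python. This is a toy illustration and not Metaio's tracker: the object is a flat rectangle instead of a 3D model, the pose is a 2D translation found by exhaustive search, and every function name here is hypothetical. The score only samples the image gradient along the model's outline, so whatever the surface looks like inside the outline is never consulted.

```python
from typing import List, Tuple

Image = List[List[int]]
Point = Tuple[int, int]


def make_image(width: int, height: int,
               left: int, top: int, right: int, bottom: int) -> Image:
    """Synthetic grayscale image: a uniform bright rectangle on a dark background."""
    return [[200 if left <= x < right and top <= y < bottom else 20
             for x in range(width)]
            for y in range(height)]


def gradient_magnitude(img: Image, x: int, y: int) -> int:
    """Central-difference gradient strength |gx| + |gy|; 0 on or outside the border."""
    h, w = len(img), len(img[0])
    if not (1 <= x < w - 1 and 1 <= y < h - 1):
        return 0
    gx = img[y][x + 1] - img[y][x - 1]
    gy = img[y + 1][x] - img[y - 1][x]
    return abs(gx) + abs(gy)


def outline(left: int, top: int, right: int, bottom: int) -> List[Point]:
    """Points on the rectangle's boundary: the 'model edges' in this toy example."""
    pts: List[Point] = []
    for x in range(left, right):
        pts += [(x, top), (x, bottom - 1)]
    for y in range(top, bottom):
        pts += [(left, y), (right - 1, y)]
    return pts


def edge_score(img: Image, model: List[Point], dx: int, dy: int) -> int:
    """How strongly the shifted model outline lines up with image edges."""
    return sum(gradient_magnitude(img, x + dx, y + dy) for x, y in model)


def best_offset(img: Image, model: List[Point], search: int = 3) -> Point:
    """Try every shift within +/- search pixels and keep the best-scoring one."""
    candidates = [(dx, dy)
                  for dx in range(-search, search + 1)
                  for dy in range(-search, search + 1)]
    return max(candidates, key=lambda o: edge_score(img, model, o[0], o[1]))


# The object really sits at (7, 8); the model's initial guess is (5, 5).
image = make_image(20, 20, 7, 8, 13, 14)
model = outline(5, 5, 11, 11)
offset = best_offset(image, model)
```

A real tracker would estimate a full 3D pose and use a smarter optimizer than brute force, but the structure is the same: project the known model edges, measure how well they coincide with edges in the camera image, and adjust the pose to improve the fit.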

JD: Yes, I suppose it could be very confusing to a computer to see a glare on the surface of anything from an automobile to a glossy magazine.

OK, next question. I remember about a year ago, I saw a SLAM (Simultaneous Localization and Mapping) tracking demo you did where you flew a remote control airplane at about 1000 feet and were tracking all the points of the farms and houses below. Are SLAM and edge-based tracking part of the same family of technologies?

TA: So SLAM (which is also part of the 5.0 SDK) is a feature-based approach. It works on points, not on edges. It’s especially good for indoor location-based systems. SLAM also works well when you don’t know your environment. For example, if you have a magazine, you know that environment (you know what magazine you are looking at, and so an edge-based approach is best), but SLAM works in an unknown environment like in our IKEA catalog project. There we were using SLAM-based tracking to learn the environment and put 3D objects in your living room.

JD: OK, tell me about Junaio Mirage.

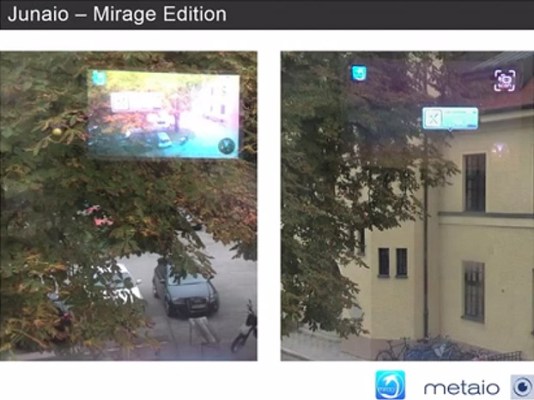

TA: It’s the first adaptation of an augmented reality browser for Google Glass and other wearable devices. Why did we choose to do it? Well, in Junaio we have a great developer ecosystem, which is developing everything from navigational apps to pathfinders to games and so forth, and we basically wanted to leverage that content [that is already out there] for wearable computing.

So essentially with Junaio Mirage — which is really a prototype right now — when you put on Google Glass or other wearable devices you can see any of the content of Junaio in your real environment.

This will lead to this “always on, always augmented” theme again because content is the key. If you don’t have the content, what will the glass see?

JD: The only hard question I have for you is that Metaio were predicting at insideAR 2011 that by 2014, every smartphone would have augmented reality built in. How is that coming along?

TA: This year, in my keynote I tried to do an internal benchmark on the promises of insideAR 2011 and 2012 and as in any statistic you can’t really claim that you have 100% adoption of the technology. We’ve always said at Metaio that augmented reality is a new user interface — it’s not a self-standing technology.

However, what we have seen, especially in the last twelve months, is a massive growth in adoption of augmented reality. IKEA, for example, is a good reference. They are extremely satisfied. They have growing download numbers for their application, but their augmented reality is an addition to a standard offering they have — the IKEA catalog [itself].

But we, at Metaio, have seen a huge growth in the number of developers [creating content]. There are increasing numbers of people developing on the Metaio platform. We’ve also seen on the Metaio cloud (which is what powers these applications) a growth of 300%. So making a claim that augmented reality will be on every smartphone by 2014 is obviously yet to be seen but we think we are on a good path to actually get there.

JD: What’s more interesting to me than raw numbers is the trend. And from the stories I’ve read and the research I’ve done, what I see is that people are warming to the technology. For a long time, people seemed to think AR was strange; a fringe technology — e.g. ‘it’s something my kid would use but it’s too techy for me.’

But I feel like AR has really come into the mainstream. Users can really see the value and the use case. So regardless of whether the technology hits 100% adoption, I feel like the trend is that it is being accepted. People feel like it is a viable tool for trying to imagine things in their environment. And that means something.

TA: I would fully agree. It’s a new way of picking up information about real-world objects. I would assume that actual users who are using the IKEA catalog to place furniture in their environment have never heard of augmented reality and probably will never care about augmented reality as a technical term but they are using it — it’s just a simple idea of putting furniture you would want to buy in your living room prior to buying. This makes complete sense to consumers. And this is how I feel. Will there be an app called augmented reality on every smartphone in 2014? I doubt it. But will there be an augmented reality piece ingrained in other apps on every smartphone by 2014? I’m much more positive.

JD: Last question. What’s next for Metaio? What can we expect to see in the next year?

TA: So Metaio as a company, fundamentally, have never changed. What has changed is that we are seeing a broad adoption of augmented reality in very distinctive use cases. We have said at insideAR that we are seriously looking into things like face and body detection and tracking — for your smart device to be able to track your face and put virtual assets on top of it. This is something that is definitely on our roadmap. We will also bring augmented reality to indoor locations — the whole SLAM approach.

And then, last but not least, we will work more on what we call The Augmented City: using AR to detect arbitrary buildings around you and push information to them. For this specifically, we have made great advances in research and actually won best paper at ISMAR.

We have shown how you can actually process and initialize huge point clouds to do this outdoor augmented reality. And we will continue to develop these hardcore computer key technologies to enable these clustered use cases — to make AR viable on objects, on humans for indoor locations and outdoor locations.

JD: OK, I lied…one more question. Since you mention SLAM and indoor tracking, this is becoming an increasingly popular concept for retailers. And with the advent of Bluetooth LE beacon technology, is there any way Metaio will be able to capitalize on that emerging indoor tracking trend to strengthen your augmented reality offering?

TA: Yes, definitely. Beacons are a great way to initialize SLAM. Let me explain that quickly. With beacons you’re getting your last location within an indoor environment, right? For example, [the beacon is] saying “hey, there’s a guy standing in this spot [in the environment].”

Now, what SLAM and computer vision can do on top of that is give you the exact position, location and orientation of the person. So you would initialize the person’s location from the beacon (or GPS or network location too for that matter) then use SLAM to navigate [more precisely] through the environment. We see this as a great trend coming out that can help us make the computer vision more stable.
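The coarse-then-fine pattern described here can be sketched in Python. This is a simplified illustration under stated assumptions, not Metaio's SDK: the `Beacon` and `Keyframe` types, the store layout, and the signal readings are all hypothetical, and picking the nearest stored keyframe stands in for a real SLAM relocalization step. The beacon supplies a rough position, and the visual search then only considers map keyframes near that position.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Beacon:
    x: float
    y: float


@dataclass(frozen=True)
class Keyframe:
    name: str
    x: float
    y: float
    descriptor: int  # compact binary signature of what the camera saw there


def coarse_position(beacons: Dict[str, Beacon],
                    rssi: Dict[str, int]) -> Tuple[float, float]:
    """Rough location: the position of the beacon with the strongest signal
    (RSSI is negative, so the strongest reading is the largest value)."""
    strongest = max(rssi, key=rssi.get)
    b = beacons[strongest]
    return (b.x, b.y)


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two descriptors."""
    return bin(a ^ b).count("1")


def relocalize(keyframes: List[Keyframe], seen: int,
               near: Optional[Tuple[float, float]] = None,
               radius: float = 5.0) -> Optional[Keyframe]:
    """Best visual match, optionally restricted to keyframes near a prior position."""
    candidates = [k for k in keyframes
                  if near is None or math.dist((k.x, k.y), near) <= radius]
    return min(candidates, key=lambda k: hamming(k.descriptor, seen), default=None)


# Two aisles in a made-up store that look identical to the camera.
keyframes = [
    Keyframe("aisle_3", 2.0, 10.0, 0b1011),
    Keyframe("aisle_7", 30.0, 10.0, 0b1011),
]
beacons = {"entrance": Beacon(0.0, 12.0), "back_wall": Beacon(28.0, 12.0)}
rssi = {"entrance": -80, "back_wall": -55}  # hypothetical readings in dBm

prior = coarse_position(beacons, rssi)
vision_only = relocalize(keyframes, seen=0b1011)            # ambiguous: first tie wins
with_beacon = relocalize(keyframes, seen=0b1011, near=prior)
```

In this example vision alone cannot tell the two aisles apart and simply returns the first candidate, while the beacon prior narrows the search to the back of the store. That narrowing is one plausible reading of how a beacon fix could make the computer vision "more stable".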