Google Research just launched its Wikilinks corpus, a massive new data set for developers and researchers that could make it easier to add smart disambiguation and cross-referencing to their applications. The data could, for example, make it easier to find out if two web sites are talking about the same person or concept, Google says. In total, the corpus features 40 million disambiguated mentions found within 10 million web pages. This, Google notes, makes it “over 100 times bigger than the next largest corpus,” which features fewer than 100,000 mentions.

For Google, of course, disambiguation is a core feature of the Knowledge Graph project, which lets you tell Google whether you are looking for links related to the planet, the car or the chemical element when you search for ‘mercury,’ for example. Making this work takes a large corpus like this one, plus the ability to understand what each web page is really about.

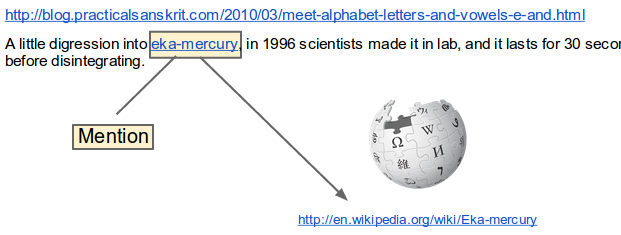

To construct this data set, Google looked at links to Wikipedia pages “where the anchor text of the link closely matches the title of the target Wikipedia page.” There is a high probability that such anchor text is a mention of the entity discussed in the corresponding Wikipedia entry.
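To get a feel for that heuristic, here is a minimal Python sketch. It is not Google's or UMass's actual pipeline. The names `find_mentions` and `title_from_url`, the 0.8 similarity threshold, and the use of `difflib` as the "closely matches" test are all assumptions made for illustration, since the article does not spell out the exact matching criterion.

```python
import re
from difflib import SequenceMatcher
from html.parser import HTMLParser
from urllib.parse import unquote, urlparse


class WikipediaLinkParser(HTMLParser):
    """Collects (anchor_text, href) pairs for links that point at Wikipedia articles."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the Wikipedia link currently open, if any
        self._text = []     # text fragments seen inside that link

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href") or ""
            parsed = urlparse(href)
            if parsed.netloc.endswith("wikipedia.org") and parsed.path.startswith("/wiki/"):
                self._href = href
                self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


def title_from_url(href):
    """Hypothetical helper: turn a /wiki/ URL into a plain article title."""
    path = urlparse(href).path
    title = unquote(path[len("/wiki/"):]).replace("_", " ")
    # Drop a trailing parenthetical such as "(planet)" before comparing.
    return re.sub(r"\s*\(.*?\)\s*$", "", title)


def find_mentions(html, threshold=0.8):
    """Return links whose anchor text is close to the target article title.

    The threshold and the similarity measure are assumptions, not the
    criterion used to build the real corpus.
    """
    parser = WikipediaLinkParser()
    parser.feed(html)
    mentions = []
    for anchor, href in parser.links:
        title = title_from_url(href)
        score = SequenceMatcher(None, anchor.lower(), title.lower()).ratio()
        if score >= threshold:
            mentions.append((anchor, href))
    return mentions
```

Run on a page that links to `Mercury_(planet)` with the anchor text "Mercury," this keeps the link as a mention of the planet, while a link to the same article labeled "click here" is dropped because the anchor text says nothing about the entity.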

The 10 million annotated web pages, sadly, aren’t part of the corpus because of copyright issues, but the UMass Wikilinks project features all the necessary tools to create this data from scratch. The UMass team also published a paper that explains the process that was used to create this data set in more detail (PDF).

Last year, Google released a similar data set: a database with over 7.5 million concepts and 175 million unique text strings, much like the one Google itself uses to suggest targeted keywords for advertisers. That set, too, was built by looking at Wikipedia articles to identify concepts and at the anchor text of the links that other websites used to point to them.