We’ve seen Kinect hacks from the very start, and some even hinted that something like this might be possible: a Kinect moved around like a handheld camera, recording the depth of everything it sees and building up a full 3D map of the room and every object in it. The researchers call it KinectFusion, and it’s really quite fascinating to watch. I’ve re-hosted the video here, since the original is a bit cramped and not everyone wants to download the whole thing.

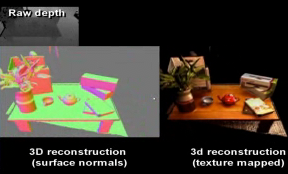

The position of the camera is constantly tracked by monitoring the depth of known objects in its view. With the camera’s position known, the recorded 3D data can be assigned absolute positions, producing a static map of the room. And it all happens in real time. Watch just the first demonstration and you can see the system “painting” a 3D model of the room as quickly as the researcher can move the Kinect around.
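To make the "absolute positions" idea concrete, here is a minimal sketch of the geometry, not the team's actual code. It assumes a simple pinhole camera model. The names `Intrinsics`, `back_project`, and `to_world` are hypothetical, and the focal length and image center are illustrative stand-ins rather than calibrated Kinect figures. A depth pixel is first turned into a 3D point relative to the camera, then moved into room coordinates using the tracked camera pose.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Pinhole camera parameters (illustrative values, not calibrated)."""
    fx: float  # focal length in pixels, horizontal
    fy: float  # focal length in pixels, vertical
    cx: float  # image center, x
    cy: float  # image center, y


def back_project(u, v, depth_m, k):
    """Turn pixel (u, v) with a depth in meters into a 3D point in the camera frame."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def to_world(point, rotation, translation):
    """Apply the tracked camera pose: p_world = R * p_cam + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


# Assumed intrinsics for a 640x480 depth image.
K = Intrinsics(fx=525.0, fy=525.0, cx=319.5, cy=239.5)

# Hypothetical pose: camera not rotated, shifted 0.5 m along x in the room.
IDENTITY = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
CAMERA_POSITION = (0.5, 0.0, 0.0)

# The center pixel sees something 2 m straight ahead.
p_cam = back_project(319.5, 239.5, 2.0, K)
p_world = to_world(p_cam, IDENTITY, CAMERA_POSITION)
print(p_cam, p_world)
```

The point is that the same surface lands at the same room coordinates no matter where the Kinect was when it saw it, which is what lets separate frames be stitched into one map.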

It tolerates change, as well: move objects in the scene and it’ll update the model. It “knows” whether an object is moving or the camera itself is. And by combining this new model with the normal capabilities of the Kinect, the room or object can be interacted with, as they demonstrate in the video by “throwing” gobs of little paintballs at things in real time, and picking up a real-life teapot that is also being mapped in 3D. Absolutely extraordinary that this is being done with an off-the-shelf device, a common PC, and some clever programming.
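The post doesn't say how the model absorbs changes, so what follows is only one plausible way to sketch it: each cell of the map keeps a running weighted average of its measurements, and the weight is capped so that old readings can't drown out new ones. The `fuse` function, the cap of 8, and the sample readings are all assumptions made for illustration.

```python
def fuse(value, weight, measurement, max_weight=8.0):
    """Blend one new measurement into a cell's running average.

    Capping the weight keeps the cell responsive: once the cap is reached,
    every new reading still shifts the stored value by a fixed fraction.
    """
    new_value = (value * weight + measurement) / (weight + 1.0)
    new_weight = min(weight + 1.0, max_weight)
    return new_value, new_weight


# One cell of the map, storing a distance in meters.
value, weight = 0.0, 0.0

# The surface sits at 2.0 m for ten frames...
for _ in range(10):
    value, weight = fuse(value, weight, 2.0)
settled = value

# ...then an object is moved in front of it at 1.0 m for fifty frames.
for _ in range(50):
    value, weight = fuse(value, weight, 1.0)

print(settled, value)
```

Averaging smooths out sensor noise while the scene is still, and the cap is what lets the stored value drift to the new surface after something moves.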

Among the applications suggested by the Microsoft Research team: “extending multi-touch interactions to arbitrary surfaces; advanced features for augmented reality; real-time physics simulations of the dynamic model; novel methods for segmentation and tracking of scanned objects.” I’m sure you can think of a few yourself. Turning the Kinect into a user-controlled tool instead of a passive user-monitoring one opens up a huge number of possibilities, as other hacks have also demonstrated.

KinectFusion is a team effort between Microsoft Research Cambridge, Imperial College London, Newcastle University, Lancaster University, and the University of Toronto. The project was demonstrated at SIGGRAPH yesterday, but this video really shows it off much better. Hopefully we’ll see a code release soon and people can play around with this amazing tool.

[via Reddit]