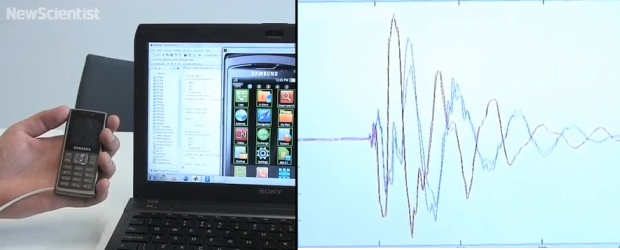

Forgive me for the inexact headline. Of course every device has touchable surfaces (how else would you handle the phone?), but not all of them respond to those touches. A company called Input Dynamics claims it can use any device's microphone to pinpoint where the device was touched by interpreting the sound of your finger hitting its surface. It sounds interesting, but can it really work?

It's called TouchDevice, and while it sounds cool, I see several potential problems. First, I question the precision of such a technology. Can it distinguish between touches a millimeter apart? A centimeter apart? The answer makes a real difference to how useful it can be.

Second, touchscreens aren't just about providing buttons to press. Their benefit is that you can see what you're touching, and direct interaction with on-screen elements is central to today's smartphone interfaces.

Third, you apparently have to tap the phone in a particular way, with a fingernail or another hard object. Isn't there a huge amount of variation in the acoustic properties of different people tapping, at different strengths, with different objects?

I can see this as an add-on for existing interfaces, such as a way to trigger contextual actions on a tablet: a normal touch does normal things, while a hard tap raises a menu or hides a window. The main benefit may actually be for existing phones without touchscreens, which could potentially be retrofitted with a TouchDevice-enabled OS. I'd have to try it to see whether that's really worthwhile, but it's certainly a cool idea.

[via Dvice]