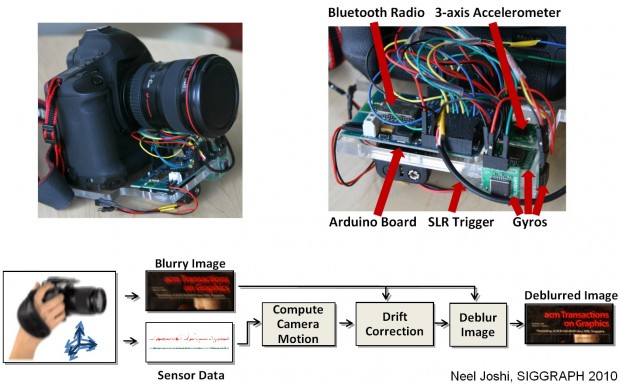

This is a cool idea, though it’s obviously still in the prototype phase. This Microsoft Research project took a DSLR (a Canon 1D mk III, if you must know) and fitted it with some easily acquired gyros and accelerometers. These are tied into the camera’s shutter mechanism so they can record the camera’s slight movements during an exposure. Those movements are then fed to a deblurring algorithm that actually seems to do a pretty good job.

They say this is the first method that uses a setup with three gyros and a three-axis accelerometer to capture camera movement. It seems you get much better results when you solve for roll (rotation around the lens axis) as well as simple image translation.
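To see why roll matters, here is a rough sketch of how gyro readings taken during an exposure could be turned into a blur trail for a single point in the frame. This is not the researchers’ algorithm: the function name `blur_trail`, the sample format, the sign conventions, and the focal length in pixels are all assumptions made for illustration, and accelerometer data, sensor drift, and the deconvolution step itself are left out.

```python
import math

def blur_trail(gyro_samples, dt, focal_px, point):
    """Return the path (in pixels) that one image point traces during an exposure.

    Hypothetical helper for illustration only.
    gyro_samples: list of (pitch_rate, yaw_rate, roll_rate) in rad/s
    dt:           seconds between samples
    focal_px:     focal length expressed in pixels (assumed known)
    point:        (x, y) offset of the point from the image center, in pixels
    """
    pitch = yaw = roll = 0.0
    x0, y0 = point
    trail = []
    for pitch_rate, yaw_rate, roll_rate in gyro_samples:
        # Integrate angular velocity into accumulated angles.
        pitch += pitch_rate * dt
        yaw += yaw_rate * dt
        roll += roll_rate * dt
        # Roll rotates the point around the image center.
        c, s = math.cos(roll), math.sin(roll)
        x = c * x0 - s * y0
        y = s * x0 + c * y0
        # Pitch and yaw are approximated as a shift of the whole image.
        x += focal_px * math.tan(yaw)
        y += focal_px * math.tan(pitch)
        trail.append((x, y))
    return trail

def smear(trail, point):
    """Straight-line distance between the starting point and the end of its trail."""
    x_end, y_end = trail[-1]
    return math.hypot(x_end - point[0], y_end - point[1])

# A 0.1 s exposure with a pure roll of 0.1 rad/s (0.01 rad in total).
samples = [(0.0, 0.0, 0.1)] * 10
center, edge = (0.0, 0.0), (2000.0, 0.0)
center_smear = smear(blur_trail(samples, 0.01, 3000.0, center), center)
edge_smear = smear(blur_trail(samples, 0.01, 3000.0, edge), edge)
print(center_smear, edge_smear)
```

With a pure roll, the point at the center of the frame does not move at all, while a point 2000 pixels out is smeared by roughly 20 pixels. A model that only solves for image translation assumes every pixel shares the same blur, so it cannot describe this case.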

Before:

After:

The results are pretty impressive, but obviously not perfect, just better than what’s out there. You can see artifacts in high-contrast areas and there’s a curious optical effect over the whole thing, but the fact is it’s genuinely sharper and clearer than the source image.

Their mod is pretty bulky right now, since it’s just a lab version, but I’m guessing something like this could be integrated into a camera fairly easily. Image stabilization these days is all about moving the sensor or adjusting the image in-lens; recording the camera’s movements in fine detail (the data could be included in EXIF) and passing that information to an optional deblur filter could be a helpful addition.

My question is this: why do they need a 1D with L glass to test this?