As you may have noticed, Twitter has had some reliability issues over the past few months. Part of this was related to the World Cup, part of it is because they just continue to grow at a fast pace — 300,000 new accounts are created a day now. It has gotten to the point where Twitter needs their own warehouse for tweet storage. So they’re building one, in Salt Lake City.

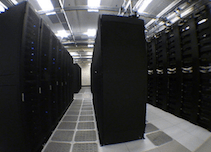

While it undoubtedly won’t be as large as Apple’s forthcoming billion-dollar data center in North Carolina, Twitter says they have been working on a “custom-built” one that will be opening later this year.

“Having dedicated data centers will give us more capacity to accommodate this growth in users and activity on Twitter,” Twitter’s Jean-Paul Cozzatti writes on the Engineering Blog today.

“Twitter will have full control over network and systems configuration, with a much larger footprint in a building designed specifically around our unique power and cooling needs. The data center will house a mixed-vendor environment for servers running open source OS and applications,” he continues.

Up until now, Twitter was using data centers built by NTT America in the Bay Area. “We’ll continue to work with NTT America to operate our current footprint. This is our first custom-built data center,” a Twitter representative tells us.

This move follows Facebook announcing its own data center back in January. That center is in Oregon, where many other companies have data centers as well — including Amazon and Google. The reason? Cheap power, a good climate (read: cooler), and tax incentives for companies to build these centers there. I’ve asked Twitter if similar reasons are behind the decision to build in Utah.

Twitter appears to be on a massive PR offensive to explain to users why they keep going down (or keep shutting off certain features, like the ability to sign up). Twitter has a post on its main blog about this, and another post by Cozzatti that goes into the issues in detail. The basic gist: on Monday, one of Twitter’s main user databases got stuck in a query and the system got locked down. They had to force-restart the database server, a process which took over 12 hours. Now perhaps you see why they need more control over their systems.

“We frequently compare the tasks of scaling, maintaining, and tweaking Twitter to building a rocket in mid-flight,” Cozzatti writes.

[photo: flickr/cellanr]