Whether you buy into the hype or not, it’s plain fact that 3D is everywhere these days. From movies and games to laptops and handhelds, pretty much every screen in the house is going to be 3D-capable in a year or so, even if you opt not to display any 3D content on it. Those of you who choose that path may stop reading now, and come back a little later when you change your mind. Because if you have kids or enjoy movies and games, there will be a point where you’re convinced, perhaps by a single standout piece of media, that 3D is worth it at least some of the time.

But 3D isn’t as easy to get used to as, say, getting a surround-sound system or moving from 4:3 to widescreen. Why is that? Well, it’s complicated, but worth taking the time to understand. Moreover, like any other new technology, 3D is not without its potential risks, and of course studies will have to be done to determine the long-term effects of usage, if any. For now, though, it must be sufficient to inform yourself of the principles behind it and make your own decision.

Let’s just have a primer in case you’re not familiar with how 3D works in general. There are a few major points worth keeping in mind. We’ll start with the basics. How do you see in 3D to begin with? Here’s a crash course on your visual system.

How depth is determined in your visual system

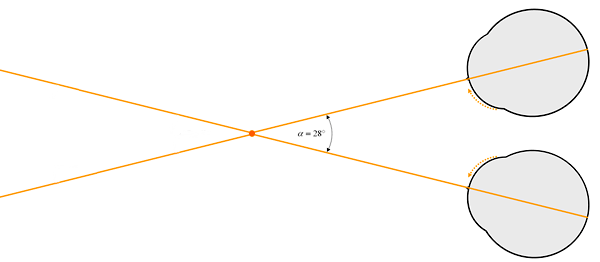

Even if it feels silly, just indulge me here: close just your right eye. Now just your left. Now your right. It’s like in Wayne’s World: camera one, camera two. You must have noticed that things change position a bit. This, of course, is because your eyes are a few inches apart; this is called the “interocular distance” and it varies from person to person. Note also that when you look at something close, objects in the background appear doubled. Look at the corner of the screen. See how the chair or window back there is doubled? It’s because you’re actually rotating your eyes so they both point directly at what you’re focusing on. This is called “convergence,” and it creates a sort of X, with the center of the X being the point you’re focusing on. You’ve probably seen a diagram like this one before:

So the objects at the center of the X are aligned at the same points on your respective retinas, but because of the interocular distance, things in front of and behind that X are going to hit different points on those retinas, resulting in a double image. You can see through the double image because what’s blocked for one eye is seen by the other, though from a slightly different point of view. Experimenting with this always gave me a sort of childish joy: once you start learning the actual optics and wiring of the visual system (as I did when I was studying it), you lose all compunction about playing with it and viewing your own mind’s mechanics in action. Optical illusions are great for this, too.
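To put rough numbers on the X: if both eyes fixate a point straight ahead at distance d, each eye turns inward by atan((e/2)/d), where e is the interocular distance. The sketch below assumes a typical e of 63 mm (the real value varies from person to person) and a symmetric, straight-ahead fixation; the function name is just illustrative.

```python
import math

def vergence_angle_deg(distance_m: float, interocular_m: float = 0.063) -> float:
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at distance_m. Each eye turns inward by
    atan((interocular / 2) / distance); the total is twice that.
    The 63 mm default interocular distance is an assumed typical value."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return math.degrees(2 * math.atan((interocular_m / 2) / distance_m))

# Compare a screen corner up close with objects across the room and beyond.
for d in (0.3, 0.6, 2.0, 12.0):
    print(f"{d:5.1f} m -> {vergence_angle_deg(d):.2f} degrees")
```

The angle changes quickly at close range and hardly at all beyond a few meters, which is why convergence is a strong depth cue for nearby objects and a weak one for distant ones.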

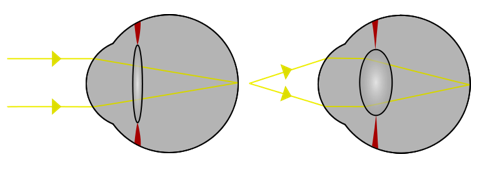

Next we have focus. Your eye focuses differently from most cameras, but the end result is similar. Try holding your finger out at arm’s length and focusing on it, then on something distant that is directly behind it. Obviously the focus changes, and you may have noticed that while you looked at your finger, the distant object was blurry, but while you looked at the distant object, your finger (doubled) was in focus. That has to do with the optical qualities of your eye, which I won’t go into here, but it’s still important. More important, however, is this: try it again, and this time pay attention to the feeling in your eye. Feel that? Sort of like something’s moving but you can’t tell exactly what? Well, your eyes are rotating a bit, but only a few degrees, in order to converge farther out. More importantly, you’re feeling the muscles of your eye actually squeeze or stretch out the lens, changing the path and internal focal plane of the light entering your eye. Farsightedness and nearsightedness come in at this stage, when either the lens or the eyeball itself is misshapen, so that focus is skewed or difficult to resolve one way or another.
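Optometrists measure this focusing effort in diopters: focusing at a distance of d meters takes 1/d diopters more lens power than focusing at infinity. A minimal sketch, assuming arm’s length is about 60 cm and the “distant object” is about 20 m away (both illustrative values; the function name is hypothetical):

```python
def accommodation_diopters(distance_m: float) -> float:
    """Extra focusing power, in diopters, needed to focus at distance_m
    compared with focusing at infinity: D = 1 / distance in meters."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 1.0 / distance_m

finger = accommodation_diopters(0.6)    # finger at about arm's length (assumed)
distant = accommodation_diopters(20.0)  # an object about 20 m away (assumed)
print(f"refocusing from finger to distant object: {finger - distant:.2f} D")
```

Almost all of that change comes from the near object; anything beyond a few meters asks very little extra of the lens.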

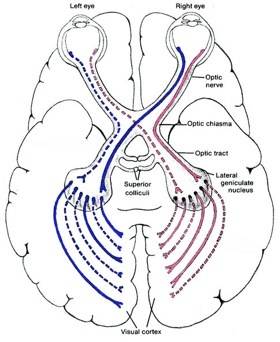

Once the two images have been presented to your retinas, they pass back through the optic nerve to various visual systems, where an incredibly robust real-time analysis of the raw data is performed by several areas of the brain at once. Some areas look for straight lines, some for motion, some perform shortcut operations based on experience and tell you that yes indeed, the person did go behind that wall, they did not disappear into the wall, and that sort of thing. Eventually (within perhaps 50 milliseconds) all this information filters up into your consciousness and you are aware of color, depth, movement, patterns, and distinct objects within your field of view, informed mainly by the differences between the images hitting each of your retinas. It’s important to note that vision is a learned process, and these areas in your visual cortex are “programmed” by experience as much as by anatomy and, for lack of a better term, instinct.

Why do I go into all this detail? Well, because I think it’s interesting. And also because if you want to understand 3D display technology, it’s probably a good idea to understand your native 3D acquisition tools.

How depth is created in 3D displays

There’s a simple explanation here and a complicated explanation. The simple one first: 3D displays create the illusion of depth by presenting a different image to each eye. That’s it. And that’s something that all 3D displays have in common, no matter what. In a fit of uncommon camaraderie among media and electronics companies, standards were even developed that encode a 3D stream much like a normal stream, except with totally separate left and right eye images baked right in. There are variations, of course, but it’s a surprisingly practical approach they agreed on.
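One common variation, used especially for broadcast, is side-by-side packing: both eye images are squeezed to half width and placed next to each other in one ordinary frame, so existing equipment can carry it. The sketch below only shows the splitting step. It assumes the left half carries the left-eye image (the usual arrangement), models frames as plain lists of grayscale pixel rows, and uses a hypothetical function name; a real player would also stretch each half back to full width.

```python
from typing import List, Tuple

Frame = List[List[int]]  # rows of grayscale pixel values

def split_side_by_side(frame: Frame) -> Tuple[Frame, Frame]:
    """Split a side-by-side packed frame into (left_eye, right_eye) halves.
    Each half is only half the packed width; a real player would stretch it
    back to full width before display."""
    if not frame or len(frame[0]) % 2:
        raise ValueError("packed frame must be non-empty with an even width")
    half = len(frame[0]) // 2
    left_eye = [row[:half] for row in frame]   # assumed: left half = left eye
    right_eye = [row[half:] for row in frame]
    return left_eye, right_eye

packed = [[1, 2, 3, 4],
          [5, 6, 7, 8]]
left_eye, right_eye = split_side_by_side(packed)
print(left_eye, right_eye)  # [[1, 2], [5, 6]] [[3, 4], [7, 8]]
```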

But how best to display it? Everyone differs in their opinions. Basically you have three fundamental techniques (and a few outdated or simply unused ones I’ll mention briefly) of sending the “right eye” image to the right eye and the “left eye” image to the left eye (RIP Lisa Lopes). If you imagine each image to be tailored to its respective eye (it’s hard to fake), a perfect 3D display would show this:

In practice that doesn’t really happen. Let’s take a look at the techniques used to approximate it.

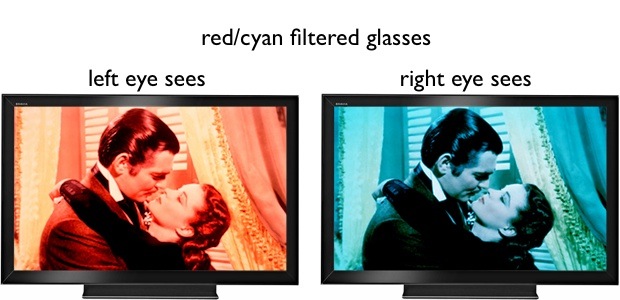

Filtered lenses

The original. The red-blue, red-green, or magenta-cyan (or “anaglyph,” the coolest word in this story after “parallax barrier”) glasses that came to symbolize 3D split a black-and-white (and later, color) image into two complementary components. I won’t get deep into the mechanics of it (Wikipedia has an excellent entry), but basically it creates an image where part goes to one eye, part to the other, and part to both; this last part will be the screen’s “normal plane,” where your eyes are to focus, and hence receive similar input. Troubles are mainly of the color perception variety: it’s extremely hard to balance things so that your brain takes the broken-up colors and reassembles them correctly. However, anaglyph is still in use and still relevant. I just watched Toy Story 3 using green-magenta and it looked great. A newer version (by Infitec) uses advanced filters to split each color into two components, sending half of the red spectrum to one eye and the other half to the other eye. It’s the basis of the Dolby 3D system, but it hasn’t caught on widely (I’ve somehow managed to avoid it entirely, and I think it’s rare).
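For the red-cyan flavor, the simplest color anaglyph can be sketched in a few lines: take the red channel from the left-eye image and the green and blue (together, cyan) channels from the right-eye image. This is an illustrative sketch only, with images modeled as lists of (R, G, B) tuples and a hypothetical function name. It also shows why color balance suffers: each eye only ever sees part of the spectrum. Real encoders typically use more carefully tuned color matrices.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each 0-255
Image = List[List[Pixel]]

def red_cyan_anaglyph(left: Image, right: Image) -> Image:
    """Red channel from the left-eye image, green and blue from the right-eye
    image. Viewed through red (left) / cyan (right) glasses, each lens passes
    mostly its own eye's picture."""
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("eye images must have the same dimensions")
    return [
        [(lp[0], rp[1], rp[2]) for lp, rp in zip(left_row, right_row)]
        for left_row, right_row in zip(left, right)
    ]

left = [[(200, 10, 20)]]
right = [[(5, 100, 150)]]
print(red_cyan_anaglyph(left, right))  # [[(200, 100, 150)]]
```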

The most modern version of filtered lenses (bottom illustration) uses polarization: originally linear, but now circular (RealD), since circular polarization lets you tilt your head without affecting the image. The objection to this tech is that the brightness of the image is essentially halved. Circular polarization in general is really cool, by the way. I submit that a particle may also have a wave-based quantum polarization, fading in and out of existence. But that is neither here nor there. Literally! Oh, I crack myself up.
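The head-tilt problem with linear polarization follows from Malus’s law: a polarizing filter at an angle θ to incoming polarized light passes cos²θ of it. Tilt the glasses, and each lens passes less of its own image and sin²θ of the other eye’s. The same law accounts for the brightness cost, since an ideal polarizer passes only half of unpolarized light. The sketch below assumes ideal filters and ignores every other loss; the function name is made up for illustration.

```python
import math
from typing import Tuple

def linear_polarized_tilt(tilt_deg: float) -> Tuple[float, float]:
    """Return (own_image, crosstalk) fractions for one lens of idealized
    linear-polarized 3D glasses tilted by tilt_deg relative to the screen.

    Malus's law: transmission = cos^2(angle between filter and light).
    The lens is tilt_deg off its own image's polarization and
    (90 - tilt_deg) off the other image's, so it leaks sin^2(tilt)."""
    t = math.radians(tilt_deg)
    return math.cos(t) ** 2, math.sin(t) ** 2

for tilt in (0, 10, 20, 45):
    own, leak = linear_polarized_tilt(tilt)
    print(f"tilt {tilt:2d} deg: own image {own:.0%}, other eye's image {leak:.0%}")
```

At 45 degrees the two images become indistinguishable. Circular polarization sidesteps this because its handedness, ideally, does not depend on how your head is rotated.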

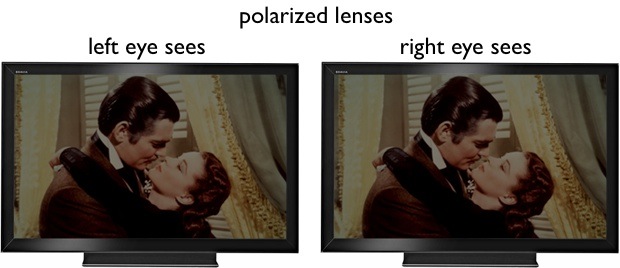

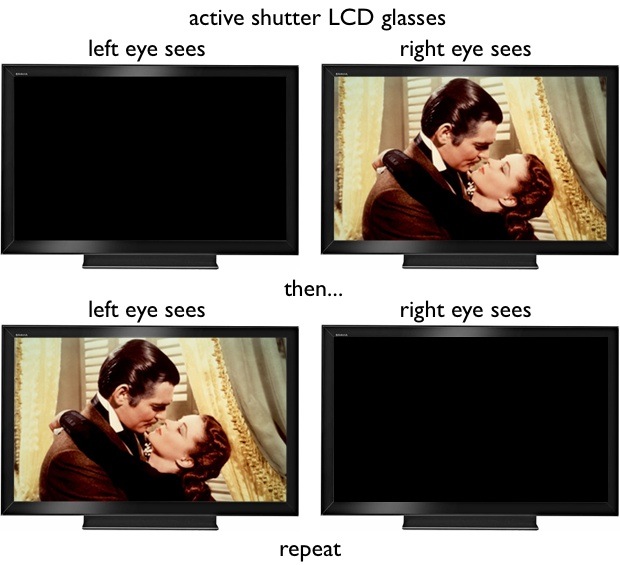

Active shutter glasses

This is the current method of choice for most 3D TV companies. The media is displayed at a high frame rate, and the glasses rapidly switch between black and clear using a pair of low-latency transparent LCD screens. In this way, one eye sees nothing (for as little as a hundredth of a second or so) while the other sees its “correct” image, and a few milliseconds later, the situation is reversed: the opposite eye’s image is displayed and the LCDs have switched. The benefit is that each eye is getting the “full” image whenever it’s getting anything (unless they’re cheating and doing it via interlaced field switching).
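The timing is simple to work out if you assume the display just alternates left and right frames: each frame is on screen for 1/refresh seconds, each eye sees half the frames, and each eye is shut for one frame out of every two. A rough sketch, with a hypothetical helper and an assumed 120 Hz panel as the example; it ignores the shutters’ own switching time, which in practice can eat into each eye’s open period.

```python
from dataclasses import dataclass

@dataclass
class ShutterTiming:
    frame_ms: float    # how long each image stays on screen
    per_eye_hz: float  # images per second delivered to each eye
    shut_ms: float     # how long each eye is blacked out per L/R cycle

def shutter_timing(refresh_hz: float) -> ShutterTiming:
    """Idealized frame-sequential 3D: frames go L, R, L, R, ... so each eye
    gets every other frame and its shutter is closed for the frame in between."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    frame_ms = 1000.0 / refresh_hz
    return ShutterTiming(frame_ms=frame_ms, per_eye_hz=refresh_hz / 2, shut_ms=frame_ms)

print(shutter_timing(120))  # assumed 120 Hz panel
```

At 120 Hz, each eye gets 60 images per second and spends a bit over 8 ms of every 16.7 ms cycle in the dark, roughly the “hundredth of a second” mentioned above.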

There are a number of objections to this technology:

- The glasses themselves vary in performance. The technology is being improved, and last year’s glasses are outperformed by this year’s glasses… and likely in a few months they will be out of date. It’s not a bad thing that the tech is improving, but considering the next few points against it, it’s bad news to commit to a technology in flux.

- High cost. A single pair of these glasses may cost upwards of $100. The cost of adopting these as an individual is high enough, but for a theater which must buy thousands and maintain them, while facing the constant risk of theft, it’s impractical.

- Position and tilt can affect the image. I’m not sure about this one, but it seems that the LCD shutters refresh from the top down, like your monitor; technically speaking, it’s not done all at once. This can be compensated for or ignored when the head is straight, but when there is tilt, you can get bleed from portions of the other eye’s image, or a prismatic effect from the LCDs not being aligned correctly with the screen. There can also be interference from the refresh pattern of the LCD. Plasmas reduce this.

- Power is required. LCDs don’t run on faith. The batteries in these things need charging, not particularly frequently, but infinitely more so than passive glasses. Furthermore, the machinery and power infrastructure means they can be bulky and heavy, though this is improving.

- A “frequency arbiter” is often required. Until some serious standards are established, it’s unlikely that your glasses, Blu-ray player or set-top box, and TV will all be speaking exactly the same language. And since absolute precision is necessary for the glasses to work correctly (the error must be kept down to fractions of a microsecond), and tiny variations may occur somewhere along the line, a synchronizing device is often used to coordinate the signals from the other components of the display. These increase the cost of the system and are one more thing to break or replace.

- The flashing LCDs have been linked to epileptic seizures, among other things. I’ll go into this further below in “dangers,” but for now it’s enough to know that the risk exists.

As you can see, it’s quite a list, though some of these flaws are being remedied. One wonders why so many companies have gotten on board with such a thing. Well, for one thing, it offers much more opportunity for making money, and for another, they don’t have to modify their screens in any way other than increasing the refresh rate, saving them precious R&D money. My personal opinion is that this technology will remain on top until someone’s skunk works research team produces a viable alternative in the form of circular-polarized TV display filters that don’t affect the image too much.

Autostereoscopic display

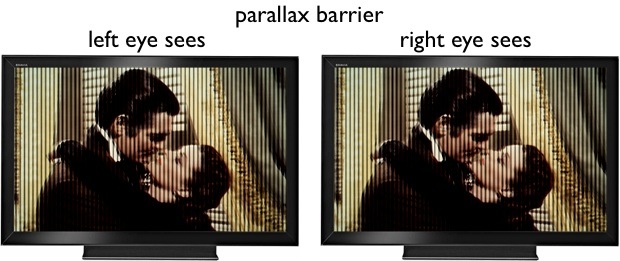

These have been around for a while, at various levels of sophistication, and are now in the spotlight as the 3D method used by the Nintendo 3DS. You probably saw something like the lenticular lens method (a sheet of tiny ridged lenses that directs light in different directions) in the “holographic” displays on fancy CD jewel cases in the ’90s (I remember it from Tool’s Aenima (yeah yeah)). The principle is that by some method or another, one pixel or group of pixels has its light directed to one eye, and another group to the other. This can be accomplished in a number of ways, but nowadays it is most likely to be a parallax barrier: a series of slits in the display, precisely placed so that light from every other column of pixels goes one way or the other. Even columns of pixels go to the right eye, and odd columns to the left, for instance. This enables the image to be split without the use of glasses, a benefit not to be underestimated.
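On the software side, a two-view barrier display needs one panel image built from two: the eye images are interleaved column by column, and the barrier’s slits do the rest. This sketch follows the example of even columns to the right eye and odd columns to the left, though which eye gets which columns depends on the actual barrier geometry. Images are modeled as lists of grayscale pixel rows, each eye image is assumed to already be half the panel’s width, and the function name is hypothetical.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale pixel values

def interleave_columns(left: Image, right: Image) -> Image:
    """Build the panel image for a two-view parallax barrier.
    Panel column 2k comes from the right-eye image's column k and
    panel column 2k+1 from the left-eye image's column k."""
    if len(left) != len(right):
        raise ValueError("eye images must have the same height")
    panel: Image = []
    for left_row, right_row in zip(left, right):
        if len(left_row) != len(right_row):
            raise ValueError("eye images must have the same width")
        row: List[int] = []
        for r_px, l_px in zip(right_row, left_row):
            row.extend((r_px, l_px))  # even column: right eye, odd column: left eye
        panel.append(row)
    return panel

print(interleave_columns([[1, 2]], [[9, 8]]))  # [[9, 1, 8, 2]]
```

Because each eye image contributes only every other panel column, each eye’s view has half the panel’s horizontal resolution.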

As you might expect, there are issues with this method as well.

- There’s a relatively small “sweet spot.” Because the placement of the slits (and therefore the viewing angle of each set of pixels) is fixed, you must have your eyes in a certain place in order to perceive the effect. Too close or too far away, and light begins to leak in from the other set of pixels, or the 3D illusion otherwise breaks down.

- Effective resolution and brightness are halved. Because half of the pixel columns are going one way and half the other, each eye receives half the information that the screen is capable of putting out. This results in reduced brightness and resolution. The 3DS’s screen is 800×240, but the effective resolution is 400×240 (giving it a weird aspect ratio somewhere between 16:9 and 16:10, incidentally). If you want to show full 1080p to each eye using a parallax barrier, you need twice the horizontal resolution, 3840×1080, which in practice means a 4K-class display.

- It requires modification of existing screens. Although I can imagine a parallax barrier filter for a TV, it’s never going to happen. The technology must be tightly integrated with the display, which increases cost. It’s also possible that the barrier would have to be variable on large TVs with a wide pixel pitch.
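The resolution arithmetic in the list above is easy to check. A small sketch, assuming a two-view barrier that splits only the horizontal resolution (as on the 3DS); the helper names are illustrative:

```python
from typing import Tuple

def per_eye_resolution(panel_w: int, panel_h: int, views: int = 2) -> Tuple[int, int]:
    """Column-interleaved barrier: horizontal pixels are shared among the views."""
    return panel_w // views, panel_h

def panel_needed(per_eye_w: int, per_eye_h: int, views: int = 2) -> Tuple[int, int]:
    """Smallest panel that still gives every view per_eye_w x per_eye_h."""
    return per_eye_w * views, per_eye_h

w, h = per_eye_resolution(800, 240)   # 3DS top screen
print(w, h, round(w / h, 3))          # 5:3, between 16:10 (1.6) and 16:9 (~1.778)
print(panel_needed(1920, 1080))       # full 1080p for each eye
```

A 3840×2160 “4K” panel has exactly the 3840-pixel width this needs, though it also carries twice the vertical pixels the scheme requires.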

There are other ways of showing 3D, such as using two separate displays (in “VR goggles” or the like), but these methods of showing two images to the visual system generally make too many compromises in cost or image quality. For home viewing, the options are mainly the ones described above. I make no secret of my preference for circular-polarized glasses: they’re cheap and effective, held back only by the brightness and lighting method of whatever screen you’re using. I think they’ll win out in the end, but as we move on to the “dangers,” please remember that all the methods mentioned are more or less equally exposed to these persistent problems.

There is also an ongoing conflict among 3D filmmakers over converged versus parallel camera setups. This is a technical consideration not of general interest, but in the interest of completeness I should say that there are points on both sides. Filming is far, far simpler with cameras aligned in parallel, but some insist that converging the cameras is necessary to create a more compelling 3D effect. It’s far from settled, and I expect to have a separate post on that in the near future.
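For the curious, here is roughly what “parallel” means in numbers. With two parallel pinhole cameras a baseline B apart and a focal length of f pixels, a point at depth Z lands f·B/Z pixels further right in the left image than in the right image. Zero disparity therefore happens only at infinity, and everything else would appear in front of the screen. A common remedy is to shift the two images horizontally afterward so that zero disparity falls at a chosen distance instead. This sketch assumes an idealized pinhole model, and the names and rig values are illustrative. Converging (“toeing in”) the cameras achieves a similar result optically but introduces geometric distortions of its own.

```python
from typing import Optional

def parallel_rig_disparity(depth_m: float, baseline_m: float, focal_px: float,
                           zero_plane_m: Optional[float] = None) -> float:
    """Horizontal disparity (x_left - x_right, in pixels) of a point at depth_m
    for two parallel pinhole cameras.

    Positive = crossed disparity (appears in front of the screen),
    negative = uncrossed (behind it). Passing zero_plane_m models shifting the
    images afterward so points at that depth land exactly on the screen plane."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    disparity = focal_px * baseline_m / depth_m
    if zero_plane_m is not None:
        disparity -= focal_px * baseline_m / zero_plane_m
    return disparity

# Illustrative rig: 6.5 cm baseline, 1000 px focal length, zero plane at 4 m.
for z in (2.0, 4.0, 10.0):
    print(z, round(parallel_rig_disparity(z, 0.065, 1000.0, zero_plane_m=4.0), 2))
```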

Something not in conflict is the quality of films created using 2D-to-3D conversion. These Franken-films are a production disaster and the coarseness of their depth cues would give anyone a headache. Avoid at all costs.

One last note: stereoscopic technologies usually assume reasonably good vision in viewers. However, a majority of people have some defect in their vision, most often nearsightedness in one eye or the other, or minor astigmatism. This can be difficult to allow for, so the 3D effect can break down for these viewers, and of course the necessity of wearing 3D glasses over one’s glasses is a deal-breaker for many. Expect this to be catered to in the next generation of 3D displays. They have to walk before they can run.

Why and to whom 3D displays are dangerous

A couple months ago, Samsung conscientiously issued a public warning concerning its 3D displays, and by implication those of the competition. This warning probably exists in the documentation of every 3D display technology out there in one way or another, though some have it worse than others. It’s also probably printed on the back of your ticket whenever you go see a 3D movie, though I suspect that theaters aren’t as assiduous as they could be in screening out preggers and babies from their audiences. View at your own risk, I suppose. So why the warning?

Well, in Samsung’s case it’s specific to active-shutter glasses. I mentioned above that these glasses can produce seizures and other effects. What happens is this: having each eye completely blocked thirty or sixty times per second is the equivalent of having a high-frequency strobe going off in your face. You’re probably aware of the danger this presents to epileptics and others: seizures, nausea, and fatigue are not uncommon. Furthermore, though this hasn’t been adequately investigated to my knowledge, it seems to me that the constant strobing might result in fatigue of your iris and lens muscles, which are constantly receiving conflicting information. That’s getting better, however: the precision is increasing and the duration of blackout is decreasing, which means your unconscious is less likely to “see” the blackout.

But there are objections to 3D that apply whether you’re using shutter glasses or not (Bill Nye has explained this too, if you’d rather hear it from him). The issue is simply this: you’re tricking your eyes and your mind into thinking there is depth where there isn’t. But it begins to look less like a trick and more like a serious deception if we take two things into consideration: one, that perceiving depth is an active process that involves muscles and movement, and two, that vision is a learned skill.

Think back to when you were focusing on your finger, and then on the distant object. Your eye “knows” that to focus on a more distant object, it has to perform a certain transformation on the lens in order to bring that object into focus. When you see an object in a movie, your eye immediately tries to correct for that object’s distance by deforming your lenses, but nothing happens! “Whaaat?” your visual system thinks, “That car should be coming into focus!” But it doesn’t and it won’t for the foreseeable future, and meanwhile every time the focus changes, or the shot changes, or the camera moves, or the characters move, your eye is attempting to refocus, to change the shape of its lens. This creates fatigue in the muscles and confusion in the brain.

When you shift your attention and gaze to another (out-of-focus) object, your eyes also naturally change their point of convergence. This occurs when you’re watching 3D movies as well; you can even see with your naked eye the bones of the 3D effect, in that objects behind or in front of the plane of focus will be out of phase with each other (they’ll appear blurry or doubled; the farther from the plane of focus, the more so). When your eyes change convergence, they naturally want to change focus, since in the majority of cases, changing the point you’re looking at means changing the shape of your lens. Again, though, your eye and brain are tricked, and the act of focusing is not only unnecessary but harmful to correct perception of the 3D image. So your eyes reconverge but the focus doesn’t change: an unnatural occurrence, though one that can be minimized by careful manipulation of the cinematography and maintaining control over the audience’s gaze.
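The size of this mismatch can be estimated with simple geometry. For a viewer centered at distance V from the screen with interocular distance e, a point drawn with on-screen parallax p (the right-eye image’s position minus the left-eye image’s, so positive means behind the screen) is converged on at Z = V·e/(e - p), while the eyes must still focus at V to keep the screen sharp. The gap between 1/Z and 1/V, in diopters, is a common way to express the conflict. This is a simplified sketch: it assumes a 63 mm interocular distance, a viewer looking straight at the screen, and hypothetical function names.

```python
import math

def convergence_distance(viewing_m: float, parallax_m: float,
                         interocular_m: float = 0.063) -> float:
    """Distance at which the two lines of sight cross for a point drawn with
    screen parallax parallax_m (right-eye x minus left-eye x).
    Positive parallax = behind the screen, negative = in front, zero = on it.
    Similar triangles give Z = V * e / (e - p)."""
    if parallax_m >= interocular_m:
        return math.inf  # eyes would be parallel (or forced to diverge)
    return viewing_m * interocular_m / (interocular_m - parallax_m)

def vergence_focus_conflict(viewing_m: float, parallax_m: float,
                            interocular_m: float = 0.063) -> float:
    """Mismatch in diopters between where the eyes converge (1/Z) and where
    they must stay focused to keep the screen sharp (1/V)."""
    z = convergence_distance(viewing_m, parallax_m, interocular_m)
    return abs(1.0 / z - 1.0 / viewing_m)

# A TV 2.5 m away; a car drawn with 2 cm of crossed (negative) parallax.
print(round(convergence_distance(2.5, -0.02), 2), "m")
print(round(vergence_focus_conflict(2.5, -0.02), 3), "D")
```

Running the same 2 cm of parallax at a viewing distance of 15 m gives a much smaller mismatch in diopters, so how large the conflict is depends on the screen distance as well as on the content.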

Another effect is parallax, which is the way objects move relative to each other when you move your head. The dissonance created depends on the distance from the screen, since objects 40 feet away don’t move much when you move your head a bit. But no matter what, when your head moves, your brain expects the image to move if it’s perceiving depth. [thanks to the commenters who corrected me on these points]
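The distance dependence is plain trigonometry: when your head moves sideways by h, a stationary object straight ahead at distance d shifts by atan(h/d) of visual angle. A quick sketch with illustrative numbers (10 cm of head movement, objects at half a meter and at roughly 40 feet, or about 12.2 m); the function name is hypothetical:

```python
import math

def apparent_shift_deg(head_move_m: float, distance_m: float) -> float:
    """Visual-angle shift of a stationary point straight ahead at distance_m
    when the viewer's head moves sideways by head_move_m."""
    return math.degrees(math.atan2(head_move_m, distance_m))

near = apparent_shift_deg(0.10, 0.5)    # object half a meter away
far = apparent_shift_deg(0.10, 12.2)    # roughly 40 feet away
print(f"near: {near:.2f} deg, far: {far:.2f} deg")
```

In the real world, that difference between near and far shifts is itself a depth cue. A stereo image that does not track your head cannot reproduce it, since the picture stays the same as you move.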

There is another result which at this moment is only theoretical, but is still scary for all that. When you’re born, your brain is only set up for vision the way an empty hard drive is set up to store data. The infrastructure is there, but you need to fill it. Babies are born into “one great blooming, buzzing confusion” as William James put it, and slowly learn to perceive and categorize objects, patterns, tendencies, and so on. Certain portions of the visual system are set up from the start to work toward certain ends, but it’s safe to say that if you moved early on to a world with no horizontal lines, the horizontal-line-detecting portion of your brain would be replaced by something more useful. You have a great deal of plasticity in the sensory portions of your brain, though arguably not as much as in less specialized cortex.

Anyway, the point is that the visual lessons you learn can be unlearned. Yeah, it sounds kind of silly at first, but there are already reports of people having depth perception trouble after watching a 3D movie at home or in the theater. 3D gaming may even exacerbate the problem, since you’re not simply viewing a virtual 3D space but interacting with it. It’s conceivable that your visual system could become partly rewired in order to better understand how a 3D visual world can exist on a 2D physical plane.

I don’t want to sound alarmist, though: after all, who hasn’t felt residual effects from this or that experience for a while afterward? One could argue that the transient disorientation following a 3D media viewing is as harmless as the common motion aftereffect, the Tetris effect, or any other illusion resulting from continual exposure to an abnormal stimulus. Experiments have been performed in which subjects wore vision-flipping prism glasses for an extended period (up to a week, I believe), and when the glasses were removed, their perception returned to normal after some hours (either a surprisingly long or reassuringly short period depending on your perspective).

Consider, though, that your vision returns to normal after a period of exposure only because it has a “normal” to which it can return. The danger isn’t so much to those of us with established visual systems, but to those who are still learning. I haven’t read any of the literature, and I suspect there is much discussion of when the visual system reaches “maturity,” but I think it’s safe to say that a toddler of 4 or 5 is approaching it but not quite there. Here lies the danger.

If a child’s visual system is taught with a significant portion of its input being a virtual 3D environment on a 2D physical plane, the brain will form with such a system being “legitimate,” possibly to the detriment of normal vision. And this is without reckoning the potential damage from the lack of visual variety such a child would experience. I’m no naysayer when it comes to digital means of entertainment for kids, but playing outside and in the real world fulfills a necessary part of our physical and mental development. A child exposed to excessive amounts of 3D media may not only develop incompletely due to lack of stimuli, but may develop incorrectly due to the presence of misleading stimuli.

It’s a serious consideration, but to be honest, not one that seems to me to be a great risk at the moment. The proportion of the child’s time dedicated to 3D media would have to be huge, and would probably indicate more pressing problems than choice of entertainment. It may turn out that all this worrying was for nothing, but it can’t hurt to be educated about it. And it’s worth mentioning as well that a “native” understanding (i.e. dedicated brain space) of 3D/2D displays is not necessarily a bad thing. I myself have (if I may say so) rather good hand-eye coordination and reflexes partially attributable to playing Ninja Gaiden II and Mario Kart when I was younger. Even so, it’s worth being aware of what is possible and why.

What you can do about it

Unfortunately, there’s not a hell of a lot you can do to reduce your risk. The problems are inherent in our own makeup and the fact that 3D displays create only the illusion of depth. What you can do is control your exposure.

- Take a break. I know it’s hard to stop a movie right in the middle, but think of what you’ve read here and how hard it is on your eyes to do what you’re doing. There is nothing to indicate that occasional and limited use of 3D has any kind of long-term ill effects. All the same, take the same precautions you might use in watching a long movie or doing lots of work on your computer. Stand up, walk around, and interact with things in the real world. Give your brain a break from being tricked. If you feel that you are beginning to lose touch with the real world (this actually happens), stop for at least half an hour. Some people are more susceptible to sickness and aftereffects than others. Know your own limits and the limits of others.

- Limit kids’ 3D time. Again, there is little to suggest that moderate use, even by kids, will make for any serious problems. And I’m not going to fabricate numbers here for reasonable amounts of time. But early childhood is, of course, an incredibly important formative period for basic physical interaction and establishing sensory norms. Use your discretion and remember that viewing 3D on a 2D surface is far more unnatural to a visual system (young or old) than plain 2D images, or even 2D representations of 3D environments, which are very different.

- Don’t buy active shutter-based displays. This may necessitate the next tip. You’ll save money and avoid the potential problems inherent in the system. If you absolutely must buy one, do your research beforehand and don’t buy cheap. Slow LCDs and shoddy synchronization are bad for both you and the image.

- Opt out until 3D gets better. All these technologies are being improved at impressive rates, since all the electronics companies are banking on them as the next big money-maker. Next year will bring better shutter glasses, brighter screens, and possibly affordable home polarization. As with any other new technology, the longer you wait, the better deal you’ll get down the line. Early adopters are paying with more than cash (though they’re paying enough of that); they’re paying with the headaches of complicated setups, hardware incompatibility, and so on, in addition to the real-life headaches that 3D seems to bring to nearly everyone at this point. If you really want to see something in 3D, you can always go to the theater.

There you have it. 3D is a promising and powerful tool, and I look forward to watching movies and playing games with it — once its initial growing pains are over with. Roger Ebert was impatient enough to condemn the technology at this early stage, and as you have read, there are plenty of reasons for him to do so. But I have faith that 3D can be applied tastefully and judiciously by those creating the media, and viewed with moderation and care by those of us who choose to consume it.