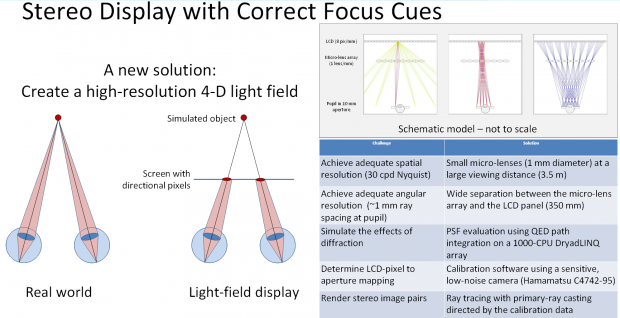

One of the issues people have with 3D displays (or, more precisely, one of the issues people's brains have with them) is that your eyes remain focused on the same plane, the screen, while the visual cues change and tell you that you should be refocusing. It's such a fundamental response that it can't really be avoided, only accommodated. One project attempting to do this is detailed in this slide from Microsoft Research's TechFair, which is going on right now.

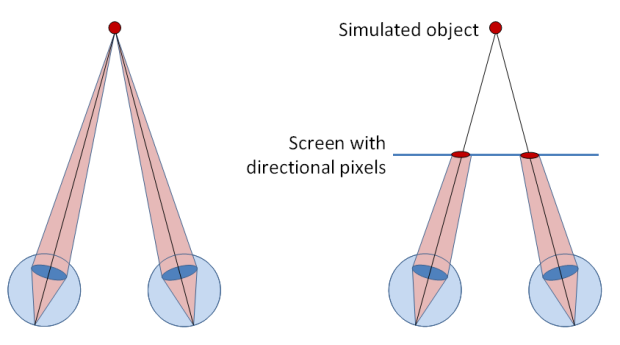

The idea is that, instead of the eyes looking at the screen and trying to focus nearer to or farther from it, a microlens array is placed in front of the display. It refracts the light so that the eye can focus at the depth where the displayed object would actually be. Here's the full slide:

The idea is cool, but the technology to make it happen would be monstrously complicated. Current 3D displays require only a left-eye and a right-eye image, which they show in sequence, optically separated, or by some other method. This display method would need more information: virtual distance, relative object size, and so on. That is, unless I'm mistaken and it can derive this information from the image itself.

It's all just research right now anyway, and far from a commercial application, if it's even viable. Still, I like hearing what people are working on in this area. As I said regarding Roger Ebert's objections to 3D, a lot of naysayers will probably be eating their words when the technology improves over the next few years.