Yesterday I visited Microsoft HQ on a surprisingly warm winter day in Redmond, Washington. This was an all-day briefing with Craig Mundie, Microsoft’s Chief Research and Strategy Officer, and his team on various Microsoft projects.

We spent part of the morning getting a preview of some of the projects on display at the upcoming TechFest – an employee event where Microsoft researchers share some of the things they’re working on.

Some of these projects eventually become products or features in one form or another. Others don’t. It’s sort of like a high school science fair, if you can imagine the students being a decade or two older and having nearly unlimited funds to play with.

I took brief videos of all of the projects on display. The ones with obvious product potential in the near future are Project Gustav and the translating telephone, which are the first two below. But all are fascinating.

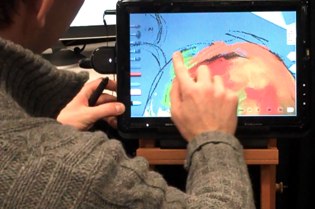

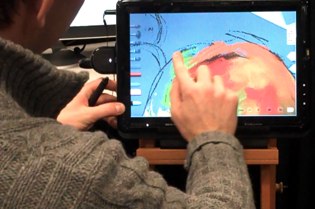

Immersive Digital Painting (Naga Govindaraju):

A realistic painting-system prototype that enables artists to become immersed in the digital painting experience. Project Gustav achieves a high level of interactivity and realism by leveraging the computing power of modern GPUs, taking full advantage of multitouch and tablet input technology and our novel, natural media-modeling and brush-simulation algorithms. The prototype provides realistic models for pastel and oil media, with more to come.

The Translating! Telephone (Kit Thambiratnam, Frank Seide):

Demonstration of a system for live speech-to-text and speech-to-speech translation of telephone calls. The goal in the telephone-call scenario is to provide an aid for cross-language communication when no other means of communication exists. The system makes extensive use of speaker-adaptation technologies to achieve reasonable, real-time speech-to-text transcription accuracy. The transcript is then translated live using machine translation to provide speech-to-text translation, and the translated text is fed into a text-to-speech system to realize speech-to-speech translation. Both the original transcript and the translated transcript are shown to the users so they can confirm that their intended meaning came through.

Mobile Surface (Chunhui Zhang):

An interaction system for mobile computing. The goal is to bring the Microsoft Surface experience to mobile scenarios and to enable 3-D interaction with mobile devices. The demonstration shows how to transform any surface, such as a coffee table or a piece of paper, into a Mobile Surface by using a mobile device and a camera-projector system. It also shows 3-D object imaging, augmented reality, and multiple-layer 3-D information visualization. The team developed a system that uses the camera-projector component to scan 3-D objects in real time while doing normal projection. For visualization, 3-D data can be projected onto a piece of paper while maintaining the original scale, as if it were printed on that paper, and a user can interact with the projected content by hand. Mobile Surface enables you to interact with the digital content and information around you from anywhere.

Natural User Interfaces with Physiological Sensing (Desney Tan and Dan Morris):

Microsoft’s work on new interaction modalities, or natural user interfaces. Many of these modalities rely on sensors and devices situated in the environment, so there is a need for new modalities that enhance the mobile experience. We take advantage of sensing technologies that enable us to decode the signals generated by the body. We will demo muscle-computer interfaces, which are electromyography-based armbands that sense muscular activation directly to infer finger gestures on surfaces and in free space, and bio-acoustic interfaces, which are mechanical sensors on the body that enable us to turn the entire body into a tap-based input device.

Inside the Cloud: New Cloud-Computing Interaction (Chunhui Zhang):

With cloud computing, users can access their personal data anywhere and anytime. Cloud computing will also enable new forms of data to be provided for users, with applications ranging from Web data mining to social networks. But cloud computing necessitates new interaction metaphors and input-output technology. The cloud mouse is one such technology. With six degrees of freedom and tactile feedback, the cloud mouse will enable users to orchestrate, interact with, and engage with their data as if they were inside the cloud.