Devices straight out of science fiction are entering our lives with regularity nowadays. And although the wonder is gone from the continual shrinking of our phones and media players and the growth of our displays, one field that retains its interest is that of cybernetics. Apart from the name just sounding cool, the idea of replacing or augmenting our mortal frame with machines is too compelling not to pay attention to.

But like all sciences, it is a slow march forward, punctuated only occasionally by visible peaks: newsworthy items like the Eyeborg guy or a successful cybernetic arm. Even these are transitional states and, despite the fanfare they attract, each is simply one more step along the road.

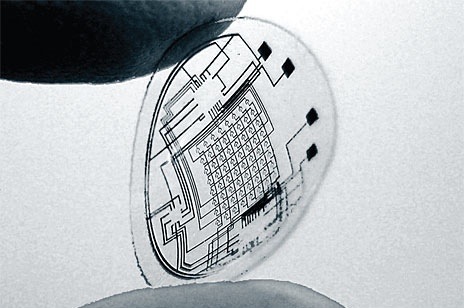

Here we have an excellent article on the state of vision augmentation technology, and though it conjures images of going to the local Fry’s and picking out a custom eye overlay, the reality is that we are in the very early, yet very promising, first stages of a major technology. We are privileged to witness it as it grows from thesis to lab to workshop to treatment, but we mustn’t be impatient. It will be years before something like this is available, but the fact that we can even see it on the horizon is reason enough to be thankful.

Having studied the eye and visual system a bit in college, I have to say that I find the contact display idea to be inadequate for its own purposes. Though attempting to work out a non-invasive solution is commendable, it introduces as many problems as it solves. The power and transparency issues become worse as the technology becomes better, and I am skeptical that a contact lens can provide enough light to be salient, or that the display can be accurate enough to be useful (or even in focus at all; they suggest a microlens array, good luck with that).

I humbly suggest a micro-projector embedded in the eye; yes, it sounds fanciful, but be honest, it’s no more fanciful than a high-res transparent display using wireless power. With an internal display you can also internalize power, control and storage, and optical qualities are adjustable on the fly (though they shouldn’t need to be changed much after an initial calibration). Plus it’d be much easier to do a display with a usable resolution. Of course, it would take surgery and there’s the risk of rejection, but I think it’s the only option for a truly functional augmented vision system.

Anyway, I’ve gone on too long here. Go check out the article and see what you think. Despite my reservations about their approach, the science is amazing and there is a lot of promise. I’m also proud to say that it’s all going on at (or at least, this article focuses on) the University of Washington, where I (briefly) had the privilege of studying Neuroanatomy. Between this and the HITLab, they’ve got some academic dynamite going on.