Honda today announced a “brain machine interface” (press release in English) that lets people control robots by thought alone, doing away with all the button pressing and joystick holding that usually gets on our nerves when we control our robots. The technology was developed jointly with Japanese tech company Shimadzu and Kyoto-based research institute ATR.

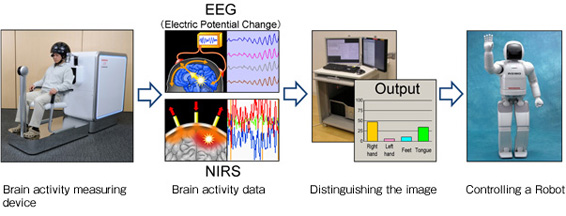

The interface relies mainly on two kinds of sensors: electroencephalography (better known as EEG), which measures electrical activity along the scalp, and near-infrared spectroscopy (NIRS), which measures changes in cerebral blood flow. All the user needs to do is wear a helmet equipped with these sensors and imagine moving one of four pre-determined body parts (left hand, right hand, tongue or feet – yes, tongue).
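Honda has not said here how the sensor readings get turned into one of the four choices, so treat the following as a purely illustrative sketch rather than the company's method. It assumes each sensor yields a few numeric features, glues them into one vector, and picks the closest of four reference points (a nearest-centroid classifier). The function names and all the numbers are made up for the example.

```python
import math

def fuse(eeg_features, nirs_features):
    """Concatenate features from the two sensors into one vector (hypothetical helper)."""
    return list(eeg_features) + list(nirs_features)

def classify(sample, centroids):
    """Return the label whose centroid is closest to the sample (Euclidean distance)."""
    return min(centroids, key=lambda name: math.dist(sample, centroids[name]))

# Toy, invented centroids: two EEG values followed by one NIRS value per label.
CENTROIDS = {
    "left hand": [1.0, 0.0, 0.2],
    "right hand": [0.0, 1.0, 0.2],
    "tongue": [0.5, 0.5, 0.9],
    "feet": [0.0, 0.0, 0.6],
}

# One invented reading from the helmet, fused and classified.
sample = fuse([0.9, 0.1], [0.25])
print(classify(sample, CENTROIDS))
```

A real system would learn its reference points from recorded sessions and would almost certainly use something more robust, but the basic idea of combining two signal types before deciding is the same.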

Honda says that in internal tests, its Asimo humanoid responded correctly to human thoughts in more than 90% of all cases, for example by moving its foot. The company has been working on brain machine interface applications for four years now and is looking for ways to use the technology in other Honda products in the future, so all of this is not completely gimmicky.

Via Robot Watch [JP]