Well, it happened. Google’s voice recognition mobile app finally arrived today on the iPhone App Store. Until today all we had to go by was the demo video that Google created showing it in action.

And that video shows something that quite simply changes the way I’d use the phone. Instead of tapping keys on the virtual keyboard to search the web or my contacts, I’d just hit a button and use the Google Mobile App. And it really is just one button – it knows, via the accelerometer, when you put the phone to your ear and when you take it away. Voilà! Cool stuff happens.

Here’s the video, narrated by Mike LeBeau on the Google Mobile team:

Let’s compare that video to my actual results. First, the big letdown is that you can’t search contacts by voice – you have to type for that, and it’s not really worth using the app just to do that when the normal contact application works just as well.

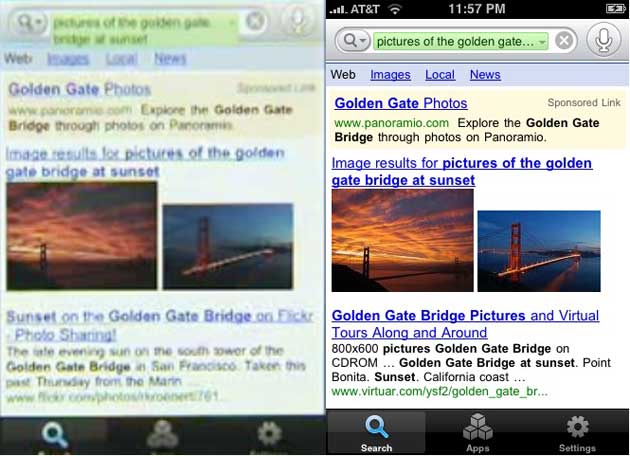

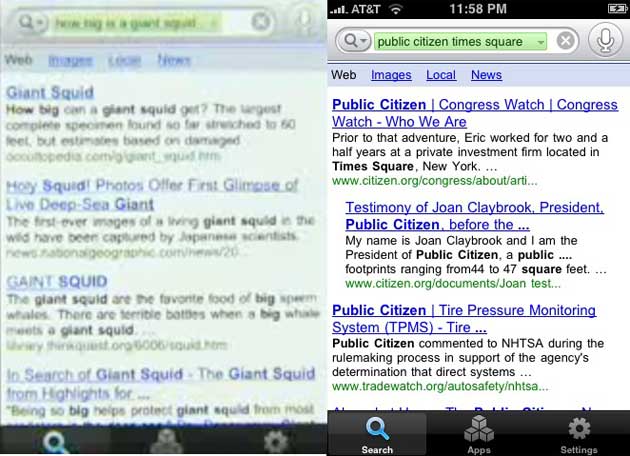

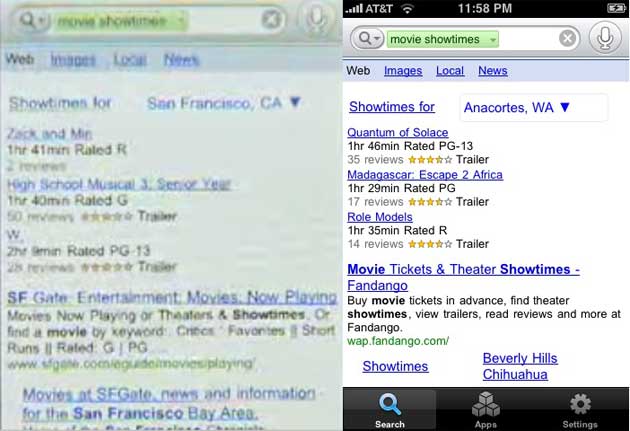

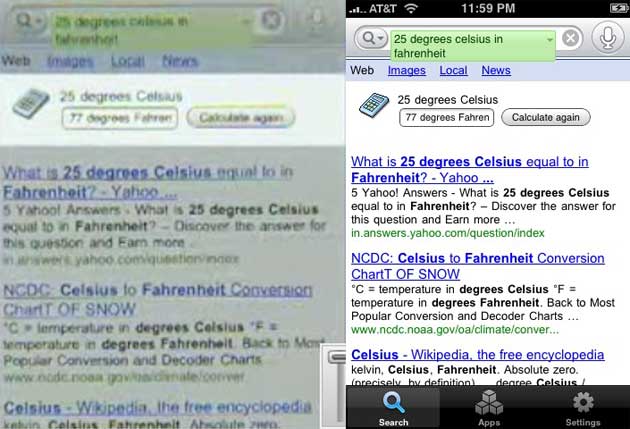

Also, it’s important that there is very little background noise when you use the app. A steady hum from an electric heater six feet away from me confounded the app on speakerphone, so road noise in a car will almost certainly rule out speakerphone use while driving. I ran the queries below in a silent room with the phone held up to my ear, speaking as clearly as I could. In the screenshots, the demo results are shown on the left and my actual results are on the right.

First query: Pictures of the Golden Gate Bridge at sunset: Results were perfect.

Second query: How big is a giant squid?: Crazy results – I got “public citizen times square.”

Third query: Movie showtimes: Results were perfect, and it used my location.

Fourth query: 25 degrees Celsius in Fahrenheit: Results were perfect.

The contact search also went exactly as the video showed, but the video is a little misleading. You can’t search contacts by voice, only by typing. The video does show that, but by then you’re so hopped up on voice goodness that you don’t really notice it’s all typing at that point, which is little better than using the normal contact app that comes with the phone.

Overall, other than the one snafu with the giant squid, everything went well. But the voice recognition is far from being as perfect as the demo video suggests, and the limitation on contact search is a letdown.