Image search engine Gazopa is the third TechCrunch50 company from Japan (following Opentrace and Sekai Camera).

Michael Arrington introduced the Hitachi-backed service with a warning to judge Bradley Horowitz from Google, saying: “Brad, you’re gonna hate the next company, seriously” (a tongue-in-cheek statement since Horowitz got his PhD in graphics and image processing).

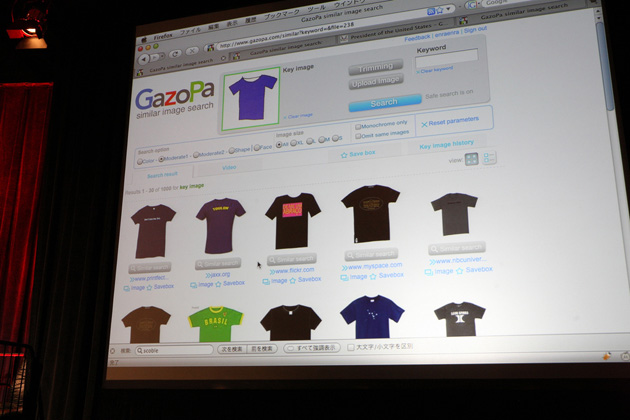

Gazopa uses proprietary image analytics technology to extract information such as color and shape from images. It then identifies similar pictures from a pool of about 50 million different images found around the web.
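Gazopa's analytics are proprietary, so its actual method is not public. As a rough illustration of what color-based matching can look like in general, here is a minimal sketch that uses coarse color histograms and histogram intersection, a standard textbook technique swapped in for the unknown real one. The function names, the bin count, and the representation of an image as a list of RGB tuples are all assumptions made for the example.

```python
from collections import Counter


def color_histogram(pixels, bins_per_channel=4):
    """Normalized coarse RGB histogram of (r, g, b) tuples with values 0-255.

    Hypothetical helper for illustration; not Gazopa's feature extractor.
    """
    step = 256 // bins_per_channel
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    total = sum(counts.values())
    if total == 0:
        return {}  # an empty image has no color distribution
    return {bucket: n / total for bucket, n in counts.items()}


def similarity(hist_a, hist_b):
    """Histogram intersection: 1.0 for identical distributions, 0.0 for disjoint ones."""
    return sum(min(weight, hist_b.get(bucket, 0.0)) for bucket, weight in hist_a.items())


def most_similar(query_pixels, library):
    """Return library image names ranked by color similarity to the query, best first."""
    query = color_histogram(query_pixels)
    scored = [
        (similarity(query, color_histogram(pixels)), name)
        for name, pixels in library.items()
    ]
    return [name for _, name in sorted(scored, reverse=True)]
```

This sketch compares color only. Matching on shape, which the service also claims to do, would need additional features such as edges or contours.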

Gazopa’s big idea is to render keywords obsolete in image search. Instead of typing search terms, users upload their own images or right-click on ones found on any webpage. Project leader Hideki Kobayashi used a handbag on a shopping site as an example: if you like the general shape or color of the handbag and want to see others with similar designs, Gazopa matches the pattern without relying on any of the metadata associated with the images.

The concept is transferable to moving pictures. A thumbnail of a video is enough to have Gazopa find similar videos on the web. You can also create a sketch of a certain product in a Flash-based “drawing box”, after which Gazopa identifies what you drew and automatically retrieves matching images.

The presentation was more serious in tone than the previous ones given by the TC50 companies from Japan. Brad Horowitz said Gazopa’s idea had already been executed 15 years ago and viewed the search engine as just a potential augmentation to existing web sites, such as shopping services. Asked by Michael Arrington whether Gazopa considers its technology 15 years old, Kobayashi said AltaVista started offering a similar service 10 years ago but abandoned it soon after. According to him, Gazopa has a better chance of success because of its large database and the ubiquity of digital and phone cameras today. Even so, the panelists questioned whether Gazopa was just a feature or a full-fledged product upon which a real business could be built.

Gazopa launched in beta right after the presentation.