Remember the “megapixel myth” that has driven camera specs for the last decade or so? Yeah, it’s still here; it’s called the “HD hoax” now. I just made that up. But seriously. The idea behind the megapixel myth was that simply increasing the size of the output image didn’t usually result in a better picture in any way. In fact, in addition to filling up the memory card faster, this megapixel bloat led to images that were noticeably less sharp and true to life. Similarly, so-called HD cameras and sensors are now being sold strictly on numbers and not on features or performance. But more data for the image is always better, right? Not quite.

What set this post off was that yesterday, Omnivision announced that they were packing 1080p onto a 1/6″ sensor. An admirable feat of miniaturization. But the reality is that this “high definition” is anything but.

First, a quick crash course in digital imaging. Forgive me if I gloss over or miss some of the more technical particulars.

1. Light approaches and hits the “event horizon” of the lens.

It’s not actually called the event horizon, but it’s the very outside of the lens, and the shape and placement of this determines the focal length of the lens — wide angle, portrait, telephoto, and so on. More bulbous, extruded lenses capture more light from a larger field of view. Lenses with physically larger surface areas collect more light, which is why lenses with low F numbers often have large front elements.

2. The light passes through a number of lens elements in order to be straightened and resized.

Light comes into a lens from a number of directions. Wider lenses can have light coming in from vastly different angles, and even telephotos have to deal with light hitting the lens at the “wrong” angle and creating flare and glow. Once the “right” light enters the lens assembly, it passes through a number of optic elements (discs of high-quality glass with various types of convexity and concavity), bending and re-bending the light to produce a projected image on the other side of the lens. Depending on the aperture selected, it will have a certain amount of the scene in focus, but the total light that comes out the interior end of the lens depends on the amount of light that came in the exterior end.

3. The light hits the sensor.

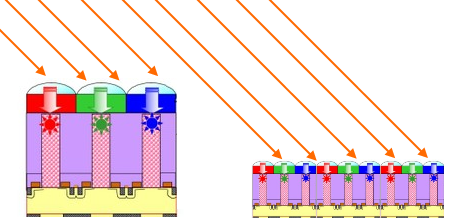

Where there was originally film, there is now a sensor: CMOS in most cameras, though a few still use CCDs. Imagine a vast surface of little buckets made to collect light. The image seen by the lens on the outside is projected onto these, with a small amount of error introduced by flaws in the lens elements (there can be dozens in zoom lenses) or in their arrangement. This creates things like chromatic aberration, vignetting, and softness at some apertures. The light is meant to be going as straight as possible into these buckets; a bit of light from a red dress must not end up in a bucket which is holding the light from the green grass beside the dress. If there must be overlap, it must be as small as possible; this produces sharpness and contrast. The distance between the buckets and the size of the buckets determine (to an extent) pixel pitch and sensitivity. And the buckets must be able to hold a lot of light or a little and dump it out accurately later; this produces correct exposure and dynamic range.

4. The sensor dumps the data.

Someone has to empty the buckets. The graphics processor or CPU does this by unloading them from the top to the bottom. Looking down on the sensor with the image upright on it, the top left bucket gets emptied first, then the next one to the right and so on, until the CPU reaches the end, at which point it starts over at the left on the next row. It does this as fast as it can, but sometimes it’s not fast enough. While it’s unloading the buckets, it’s often the case that they’ve started filling up again. This is called a rolling shutter, and while it allows for wiggle room with frame rates and shutter speeds, it can also be a major problem: rolling shutters create (among other things) something called skew, which distorts vertical lines and features. The faster the data is pulled off the sensor, the less skew there is and the more accurate the output image is.
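To put rough numbers on skew, here is a minimal Python sketch. It assumes a simplified sensor that reads one row at a time at a constant rate, and an object moving sideways at a constant speed. The function name, the readout times, and the object speed are all made up for illustration, not measured from any real camera.

```python
def rolling_shutter_skew(rows, readout_time_s, speed_px_per_s):
    """Horizontal offset (in pixels) between the top and bottom rows.

    Simplified model: rows are read one after another at a constant
    rate, so row r is captured at time r * (readout_time_s / rows).
    """
    line_time = readout_time_s / rows
    # Time that passes between reading the first row and the last row.
    elapsed = (rows - 1) * line_time
    return speed_px_per_s * elapsed


# Hypothetical example: a pole crossing a 1080-row frame at 2000 px/s.
slow = rolling_shutter_skew(1080, 1 / 30, 2000)   # readout takes a whole frame period
fast = rolling_shutter_skew(1080, 1 / 120, 2000)  # readout four times faster
print(f"slow readout: {slow:.1f} px of lean, fast readout: {fast:.1f} px of lean")
```

In this model the lean of a vertical line scales directly with readout time, which is why faster sensor offloading shows up as straighter verticals.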

5. The data is processed.

Now the raw data must be encoded into a form that’s compact and readable by devices and programs designed to “play” that content. Pictures are often saved as JPEGs. Video these days is often encoded with H.264, frequently packaged in a format such as AVCHD. The quality of encoding depends largely on the amount of processing power available or allocated. If you are encoding a movie on your computer and set quality to “draft,” it will search for edges only on a gross scale, sample color at coarser intervals, and so on. You understand: shortcuts are taken. You trade quality for speed, as in other things. Dedicated processors for this are a big help, as they utilize parallel processing to accelerate the job, and can do more in a second or cycle than an ordinary CPU. The finished file is then stored on whatever medium is available.

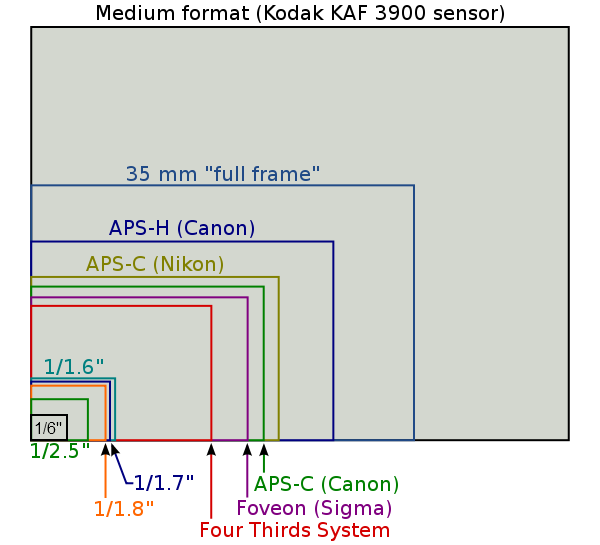

Sorry, that ended up being longer than I expected it to be. But now, if you didn’t before, you know the rudiments of the process. Now, let’s get on with the news. Omnivision, an established creator of image sensors, has created a sensor that is 1/6th of an inch diagonally that records 1080p video. To give you an idea of how large a 1/6″ sensor is, here is a very handy little chart.

My Rebel XSi, the T2i I just reviewed, and many other DSLRs fall under the 1.5x and 1.6x crop factor (APS-C) squares. The image above is not to scale; APS-C, for reference, is about the size of a postage stamp. The new Micro Four Thirds cameras use the 4/3″ size, and a large majority of the compact digital cameras and camcorders I’ve seen and reported on use a 1/2.3″ sensor, a bit smaller than the 1/2″ one. The rugged cameras I reviewed recently, for example, all had 1/2.3″ sensors or thereabouts. I’ve added the 1/6″ in there (had to kind of eyeball it), and in reality it is about as big as the first letter of this paragraph.

Now, every camera that I’ve shot with, including the impressive T2i, has problems with HD. Somewhere along the line, in one of those steps I mentioned above, something goes wrong. And with imaging, it only takes one weak link to create a bad photo or video. High definition shouldn’t just be a name for a resolution. It should mean that the level of definition in the image is high.

The pocket cams out there, for instance, can barely ape “HD.” Under the correct circumstances, in good lighting and with no motion, you would look at the 720p image and think “yes, that’s high definition.” For the most part, though, motion is blurry, colors are mixed, edges are indistinct, and there’s a weird sort of texture over the whole frame. What the hell? You paid good money for “full HD” (as the pocket cams are now advertising: 1080p in a phone-sized package). Why aren’t you getting images like the ones you see on TV?

The reason is that although the technology in one area or another may have advanced (lately it’s been sensors), the other bits of the camera are torpedoing the image quality all day long. Let’s go through the problems that occur during the process described above, in a $200 camcorder or phone shooting at 1080p.

1. and 2. The lens of the camera is garbage to begin with.

Think about it: devices which need tiny sensors are almost guaranteed to have terrible lenses. First, they’re tiny. You’re not getting a lot of light in one end, which means you don’t get a lot out the other end. Second, they’re cheap. The elements even in the nicest autofocus phone cameras are extremely small and (I’m guessing) are ground down from pieces too flawed to be used for large elements. Even perfectly good medium-sized digital cameras get tons of fringing and CA. Third, you’re losing a lot of detail through the plastic lens protectors and whatever oil and grime is on there. You can often tell a good camera by how well it picks up the flaws in the lens assembly, but in this case even microscopic flaws can affect the whole image.

3. Not only is the sensor small, but the “buckets” are small.

Remember how we imagined a bunch of buckets next to each other? Now imagine those buckets are thimbles, and are expected to do the same job as buckets. This can only be done by using a boosted ISO to guesstimate from the thimble data how much light would have hit the buckets, but that creates a huge amount of error and noise. Not only that, but now that the buckets are thimbles, tiny things packed close together (a very small pixel pitch), there is even less tolerance for optical error! The tiny amount of error present even in a perfectly good lens is multiplied many times because the targets are so small; think what the shabby optics of a $3 lens assembly will do to the light. Now the red dress and the green grass are overlapping by a huge amount, resulting in a huge drop in sharpness and contrast. Even within stretches of a single color, the “sample size” for determining what color a pixel should be is totally distorted by boosted sensitivity, and color accuracy suffers as a result. These things can be minimized by predicting them and intelligently correcting for them, but only to a certain extent.
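For a sense of scale, the thimble problem can be sketched in a few lines of Python. The sensor widths below are ballpark figures I am assuming for illustration, not manufacturer specs, and the sketch pretends each sensor spreads exactly 1920 pixels across its full width, which real cameras do not do.

```python
# Approximate sensor widths in millimetres. These are ballpark figures
# assumed for illustration, not manufacturer specifications.
SENSOR_WIDTH_MM = {
    "APS-C (Canon)": 22.3,
    '1/2.3"': 6.2,
    '1/6"': 2.4,
}


def pixel_pitch_um(sensor_width_mm, horizontal_pixels):
    """Centre-to-centre pixel spacing in micrometres."""
    return sensor_width_mm * 1000 / horizontal_pixels


def relative_bucket_area(pitch_a_um, pitch_b_um):
    """How many times more area a pixel of pitch a covers than one of pitch b."""
    return (pitch_a_um / pitch_b_um) ** 2


# 1080p video is 1920 pixels wide.
for name, width_mm in SENSOR_WIDTH_MM.items():
    print(f"{name}: {pixel_pitch_um(width_mm, 1920):.2f} um per pixel")

big = pixel_pitch_um(SENSOR_WIDTH_MM["APS-C (Canon)"], 1920)
tiny = pixel_pitch_um(SENSOR_WIDTH_MM['1/6"'], 1920)
print(f"area ratio, APS-C vs 1/6 inch: {relative_bucket_area(big, tiny):.0f}x")
```

Because light-collecting area goes with the square of the pitch, a modest-sounding difference in spacing turns into a very large difference in how much light each bucket gets to work with.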

4. and 5. The sensor is slow and the CPU is slow.

Granted, this issue is something that will only improve; RED, whose RED One cameras were plagued by slow sensor offloading speeds, worked hard to produce firmware that fixed this, and now rolling shutter artifacts (while still present) are much reduced. Also, hardware encoding chips are getting cheaper and smaller, and will probably be featured in most video-shooting devices within a year or so. But now and for the next generation of imaging devices, you’re looking at a lot of skew (vertical and horizontal). And on the encoding front: 1080p video from the T2i, at 30 frames per second, came to about 340MB per minute. That’s a lot of space, and it takes a fair amount of data bandwidth to handle. It’s pretty much guaranteed that these smaller devices, Snapdragon processor or not, are going to be throttling the data rate in order to make sure there are no missed frames, errors, or that sort of thing. This hurried processing results in a muddy look to the video, however high resolution it may be, because edges and details have been rubbed out by a single hasty encoding pass.
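Some back-of-the-envelope arithmetic shows how much work the encoder is doing. This is a rough Python sketch with a few assumptions of mine: that the 340MB figure covers one minute of footage, that a megabyte is a million bytes, and that uncompressed video carries 24 bits per pixel (real sensors put out raw Bayer data, so treat the raw number as an upper-end illustration).

```python
def recorded_bitrate_mbps(megabytes, seconds):
    """Average bitrate of a recording in megabits per second."""
    return megabytes * 8 / seconds


def raw_bitrate_mbps(width, height, fps, bits_per_pixel=24):
    """Uncompressed data rate, assuming 24 bits per pixel (8 per RGB channel)."""
    return width * height * fps * bits_per_pixel / 1_000_000


recorded = recorded_bitrate_mbps(340, 60)  # assumes 340MB per minute of footage
raw = raw_bitrate_mbps(1920, 1080, 30)
print(f"recorded: {recorded:.0f} Mbit/s, raw: {raw:.0f} Mbit/s, "
      f"compression of roughly {raw / recorded:.0f}:1")
```

Under those assumptions even a DSLR is squeezing the stream down by a large factor, and a device that has to throttle the data rate further has even fewer bits left for edges and detail.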

Now, I’m not trying to break Omnivision’s balls here. Creating such a tiny sensor that is capable of producing such a high-res image however many times a second is a serious achievement. Mission accomplished. The thing is, unfortunately, said sensor doesn’t really enable devices to do anything different. You’re just going to magnify the problems that are already there, and fill up your SD card faster to boot. Will it take pictures and video? Sure. High resolution pictures and high definition video… of a low-quality image. It’s a bit like taking a picture of another picture, and expecting the second picture to be better than the first.

So if it’s not really high definition, why is it being recorded and stored in high definition? So they have a big number to sell you, of course, like 240Hz and 18 megapixels. My favorite is when camera makers refer to dot counts for LCDs, knowing that the average consumer has no idea how LCDs work. 230,000 dots? Sounds like a lot! Except it actually means 320×240 pixels — a resolution that’s been kicking about since the 80s.
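The dot-count trick is simple arithmetic. A quick Python sketch, assuming the usual arrangement of three colored dots (red, green, blue) per pixel; the function name is just for illustration:

```python
def dots_to_pixels(dots, dots_per_pixel=3):
    """Convert an advertised LCD dot count to full-color pixels.

    Assumes each pixel is made of three dots (red, green, blue).
    """
    return dots / dots_per_pixel


advertised = 230_000
print(f"{advertised:,} dots is about {dots_to_pixels(advertised):,.0f} pixels")
print(f"320 x 240 is {320 * 240:,} pixels")
```

The two numbers land within a fraction of a percent of each other; the "230,000" on the box is a rounded version of 320 × 240 × 3.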

How can you avoid this? Well, just like the megapixel race, you really can’t. Video recording devices are simply going to overdo it the way still cameras overdid it. We all now have hundreds or thousands of dubious images which, despite being 10 or 15 megapixels, show all kinds of weird artifacts if you look closely or print too big. It’ll be the same for video. You can choose to record at a lower resolution; 720p (often even VGA/640×480) is just fine, after all, and may even record at the same bitrate, meaning more data per pixel and better image quality. And actually look at the lenses on the cameras you buy. Lenses that are bigger across are (generally speaking) better, and every lens has its F numbers printed on it or on its spec sheet. If you’re trying to decide between a few cameras, look at their lenses: if one device maker is skimping on the lens, arguably the most important part of the camera, then you can be sure they skimped elsewhere too. Lastly, don’t buy anything that shoots in 1080i. Interlacing is a monster deserving of its own post.
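Here is why a lower resolution at the same bitrate can look better, as a small Python sketch. The 12 Mbit/s bitrate and 30 frames per second are hypothetical values picked for illustration, not figures from any particular camera.

```python
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "720p": (1280, 720),
    "VGA": (640, 480),
}


def bits_per_pixel(bitrate_mbps, width, height, fps):
    """Average number of encoded bits available for each pixel of each frame."""
    return bitrate_mbps * 1_000_000 / (width * height * fps)


BITRATE_MBPS = 12  # hypothetical fixed encoder budget
FPS = 30           # hypothetical frame rate

for name, (width, height) in RESOLUTIONS.items():
    bpp = bits_per_pixel(BITRATE_MBPS, width, height, FPS)
    print(f"{name}: {bpp:.3f} bits per pixel")
```

With the budget held constant, 720p gets 2.25 times as many bits per pixel as 1080p, simply because it has that many fewer pixels to describe.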

I’d like to say that my issue with inflated video resolutions (and megapixels) is something that will be alleviated by the passage of time, like some of Ebert’s objections to 3D. But the cost of good optics isn’t really coming down, and really, the size of the lens is a physical barrier not likely to be surmounted any time soon. The methods we have for collecting and measuring light aren’t sufficient, and the improvements yet to be made for them will do nothing to help the fact that with bad components, it’s garbage in, garbage out.